Connected Communities Infrastructure - General Solution Design Guide

Bias-Free Language

The documentation set for this product strives to use bias-free language. For the purposes of this documentation set, bias-free is defined as language that does not imply discrimination based on age, disability, gender, racial identity, ethnic identity, sexual orientation, socioeconomic status, and intersectionality. Exceptions may be present in the documentation due to language that is hardcoded in the user interfaces of the product software, language used based on RFP documentation, or language that is used by a referenced third-party product. Learn more about how Cisco is using Inclusive Language.

- Updated:

- November 8, 2021

Chapter: Connected Communities Infrastructure - General Solution Design Guide

- Scope of CCI Release 2.1

- References

- Document Organization

- Solution Overview

- Solution Architecture

- Solution Components

- CCI Switched Ethernet Access Network (PoPs)

- CCI Remote Point-of-Presence Design

- Ultra Reliable Wireless Backhaul

- CCI Wi-Fi Access Network

- CCI Wireless IoT Devices Networks

- Shared Network Services

- Conclusions

- Acronyms and Initialisms

Connected Communities Infrastructure General Solution Design Guide

Modernizing the technology landscape of our cities, communities, and roadways is critical. Efforts toward digital transformation will form the basis for future sustainability, economic strength, operational efficiency, improved livability, public safety, and general appeal for new investment and talent. These efforts can be complex and challenging. What we need is a different approach to address the growing number of connected services, systems, devices, and their volumes of data. Overwhelming options for connecting new technologies make decision-making more difficult and present risks that often seem greater than the reward. This approach will require a strategic and unified consideration of the broad needs across organizational goals and the evolving nature of the underlying technology solutions.

Typically, multiple connectivity solutions are traditionally created as separate and isolated networks. This leads to duplication of infrastructure and effort and cost, inefficient management practices, and less assurance for security and resiliency. Traditional networking also commonly manages on a per-device basis, which takes time, creates unnecessary complexities, and heightens exposure to costly human errors.

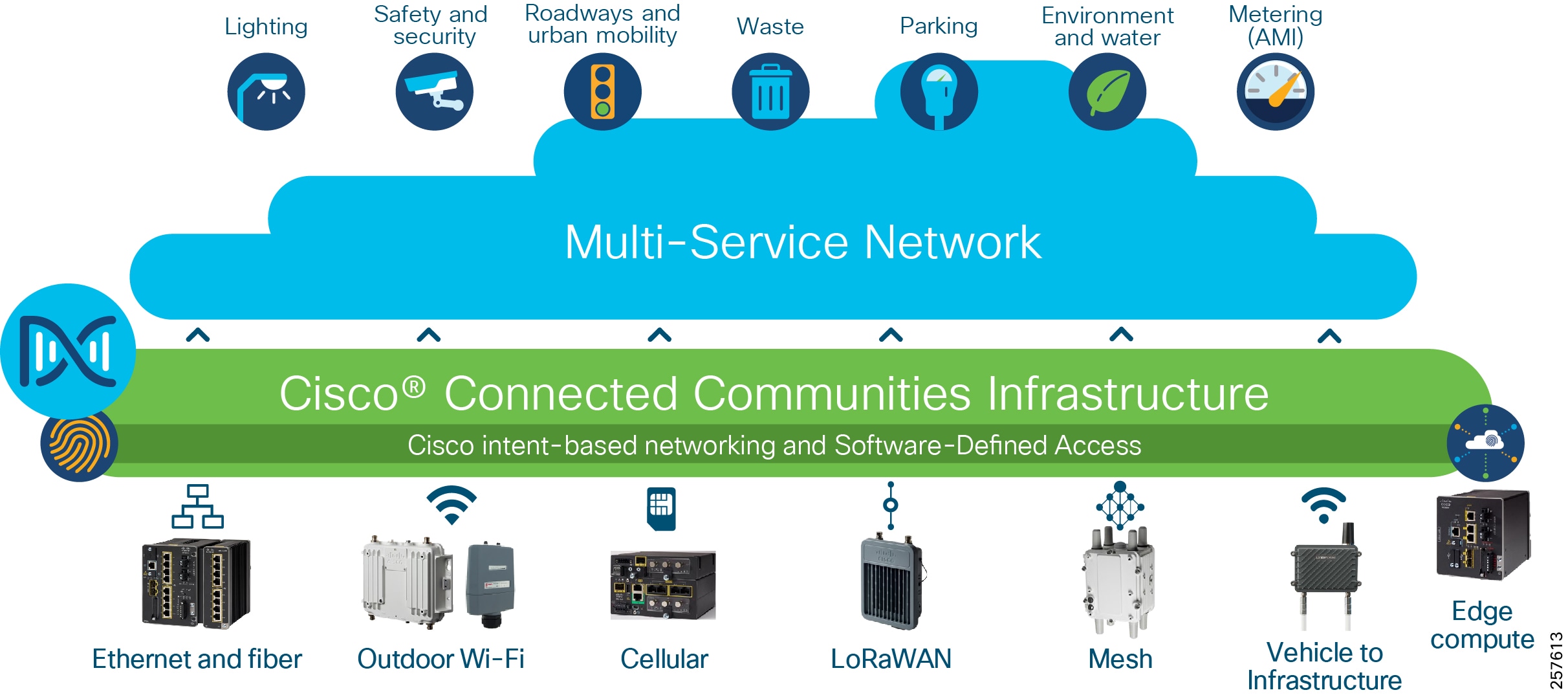

With Cisco Connected Communities Infrastructure (CCI), you can create a single, secure communications network to support all your needs that is simpler to deploy, manage and secure. Based on the market-defining Cisco Digital Network Architecture (Cisco DNA) and Intent-based Networking capabilities, this solution provides:

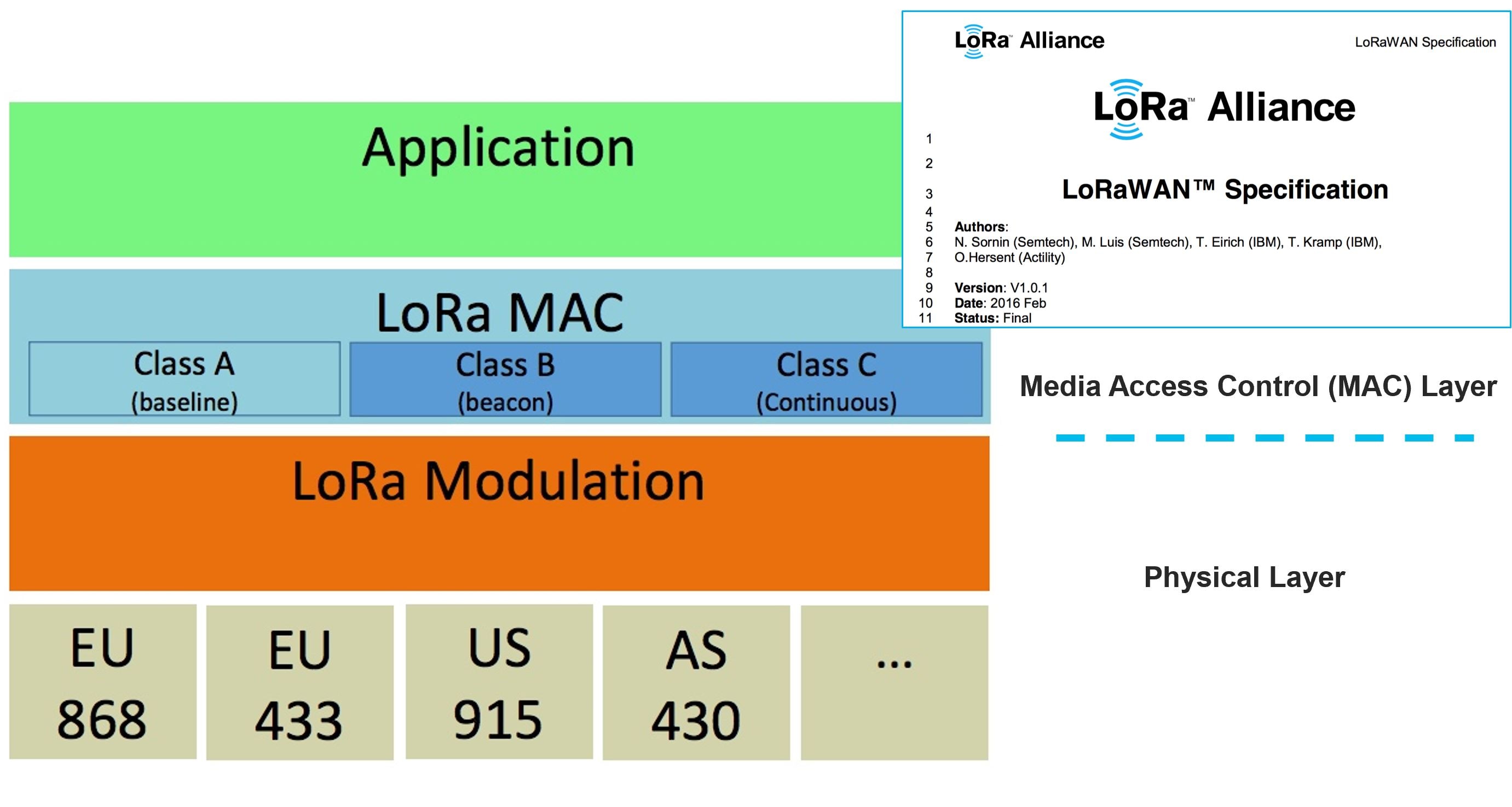

- A single, modular network with wired (fiber, Ethernet), wireless (Wi-Fi, cellular, and V2X) and Internet of Things (IoT) communications (LoRaWAN and Wi-SUN mesh) connectivity options for unmatched deployment flexibility

- Cisco Software-Defined Access (SD-Access) to virtually segment and secure your network across departments and services, each with its own policies, control, and management as needed

- Cisco DNA Center for network automation with unified management of communications policy and security that significantly lowers operational costs; Cisco DNA Center also provides assistance in security compliance, which is becoming a significant challenge for our customers to prove

- Highly reliable outdoor and ruggedized networking equipment with simplified zero-touch in-street and roadway deployment options

For additional overview materials, presentations, blogs and links to other higher-level information on Cisco’s Connected Communities Infrastructure solution please see: http://cisco.com/go/cci

Scope of CCI Release 2.1

This Design Guide provides network architecture and design guidance for the planning and subsequent implementation of a Cisco Connected Communities Infrastructure solution. In addition to this Design Guide, there are Connected Communities Infrastructure Cities Solution Design Guide, Connected Communities Infrastructure Roadways Solution Design Guide, Connected Communities Infrastructure Rail Solution Design Guide is and also a Connected Communities Infrastructure Implementation Guide that provides more specific implementation and configuration guidance and examples also exists.

For Release 2.1 of the CCI CVD, the horizontal scope covers all the access technologies listed in Cisco Connected Communities Infrastructure.

This Release 2.1 supersedes and replaces the CCI Release 2.0 Design Guide.

New capabilities in CCI Release 2.1

- Cisco Ultra Reliable Wireless Backhaul (CURWB) for CCI backhaul and wireless access networks

- Enhanced Ethernet Access Ring & Provisioning

–![]() IE-3300 10G Access Ring in CCI PoPs

IE-3300 10G Access Ring in CCI PoPs

–![]() Daisy Chaining Automation of Extended and Policy Extended Nodes using Cisco DNA Center

Daisy Chaining Automation of Extended and Policy Extended Nodes using Cisco DNA Center

–![]() REP Ring Automation using Cisco DNA Center

REP Ring Automation using Cisco DNA Center

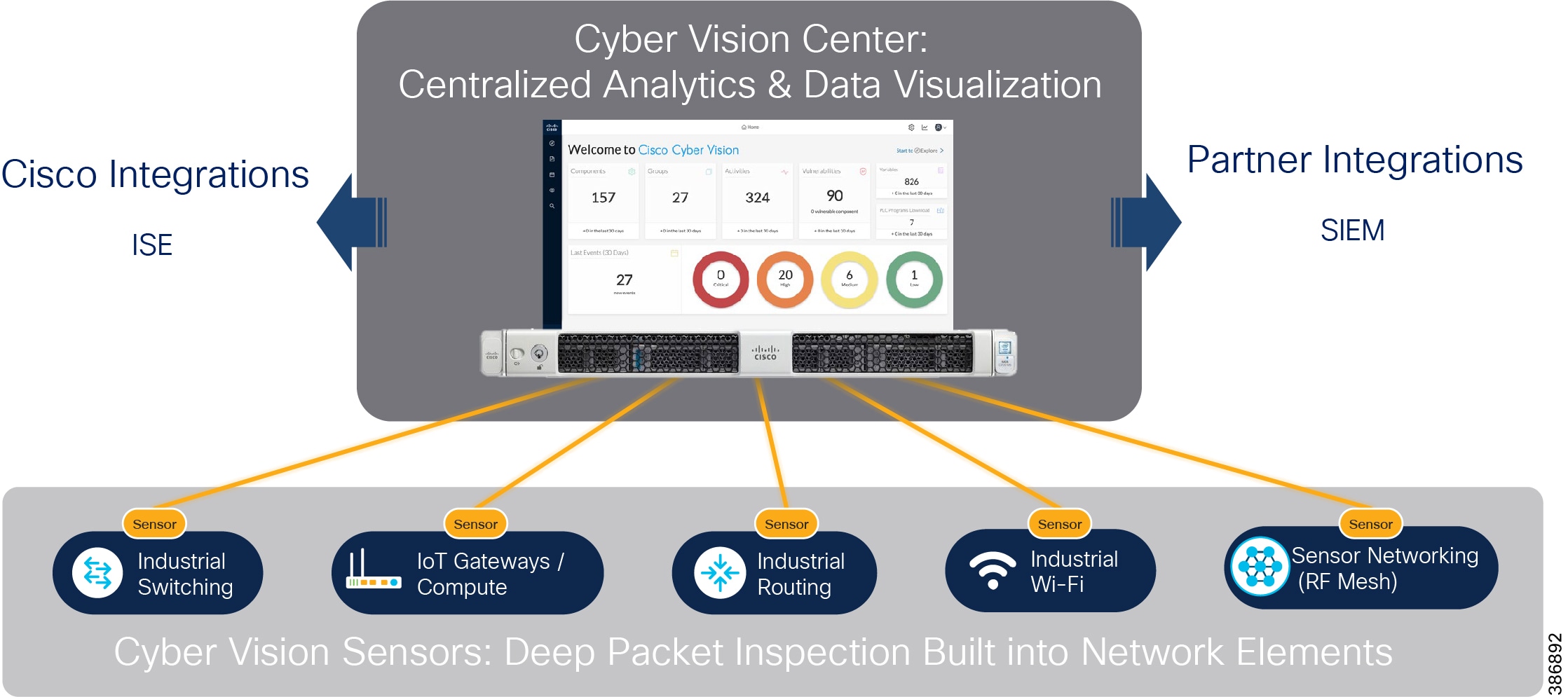

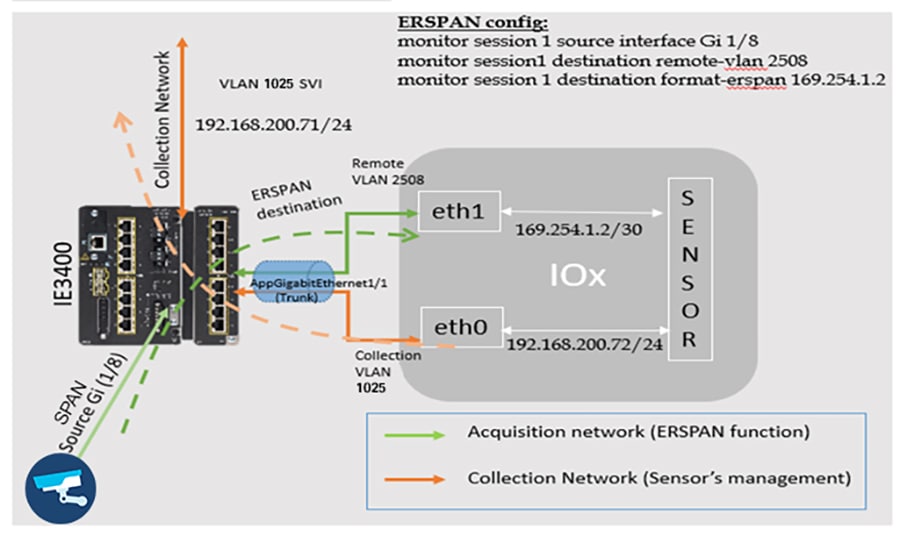

–![]() CyberVision Sensor deployment on IE-3400, IE-3300 10G and IR-1101 Platform

CyberVision Sensor deployment on IE-3400, IE-3300 10G and IR-1101 Platform

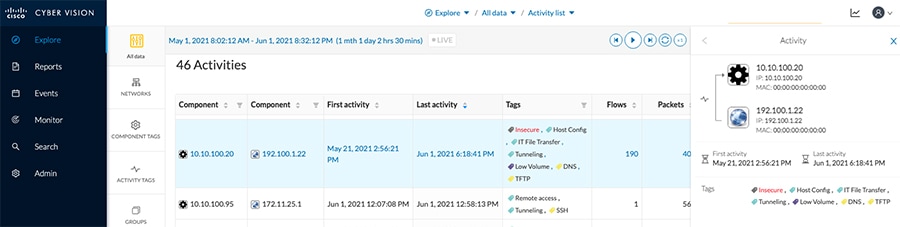

–![]() OT Device and Protocols (DNP3 and MODBUS) Flow Detection using Cisco Cyber Vision Center

OT Device and Protocols (DNP3 and MODBUS) Flow Detection using Cisco Cyber Vision Center

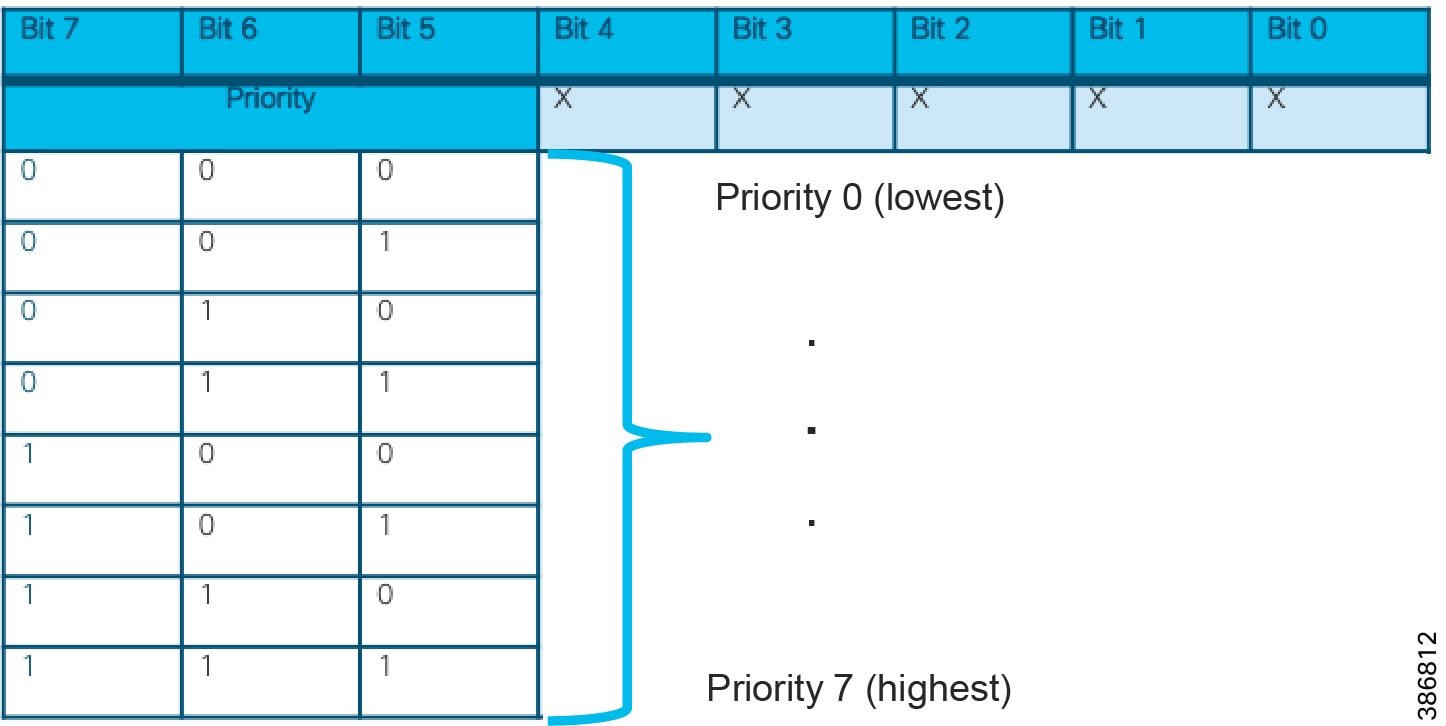

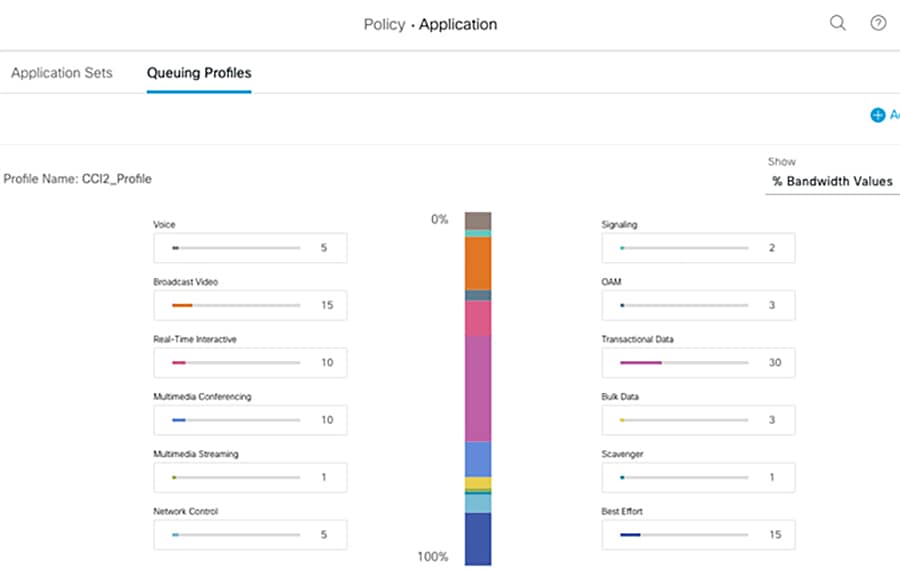

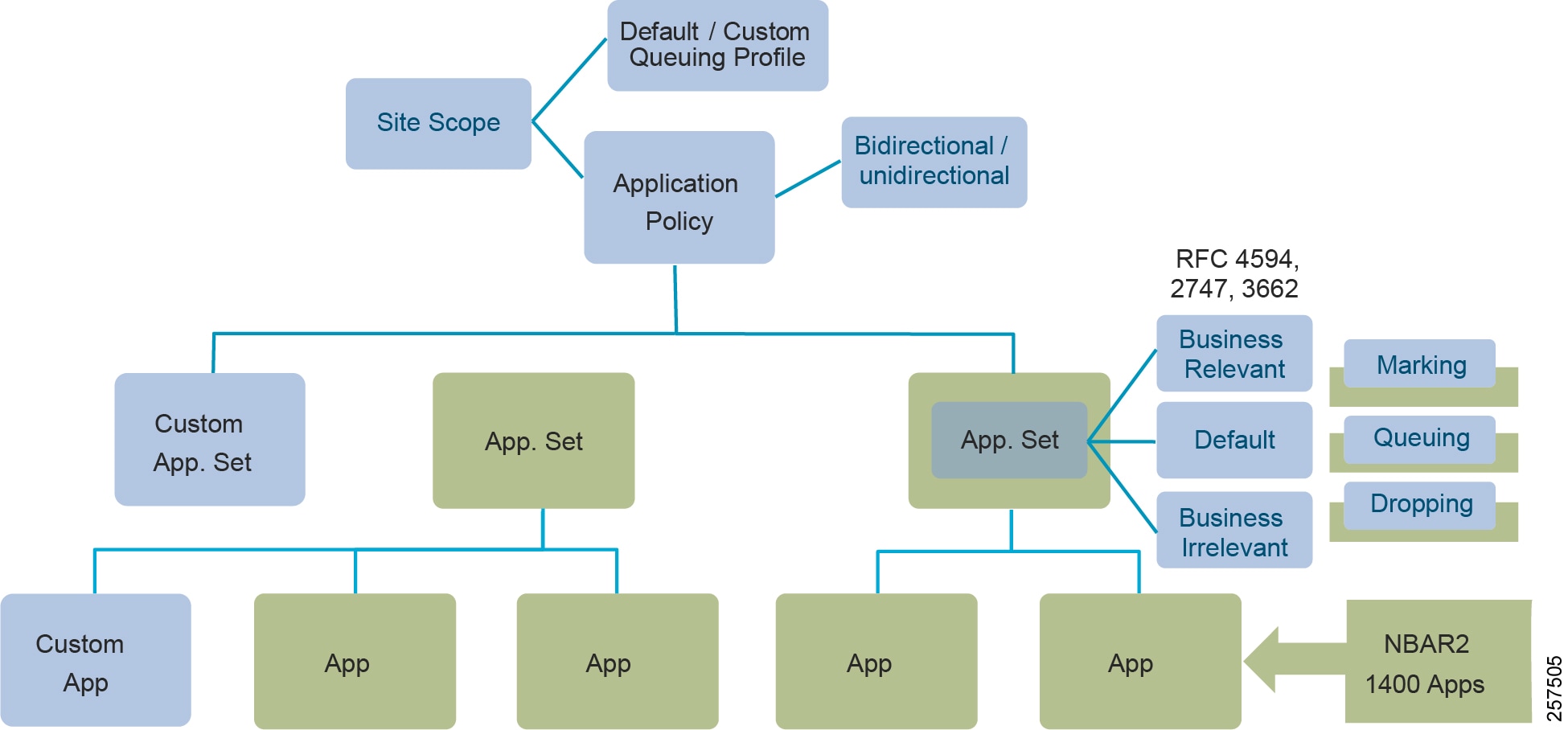

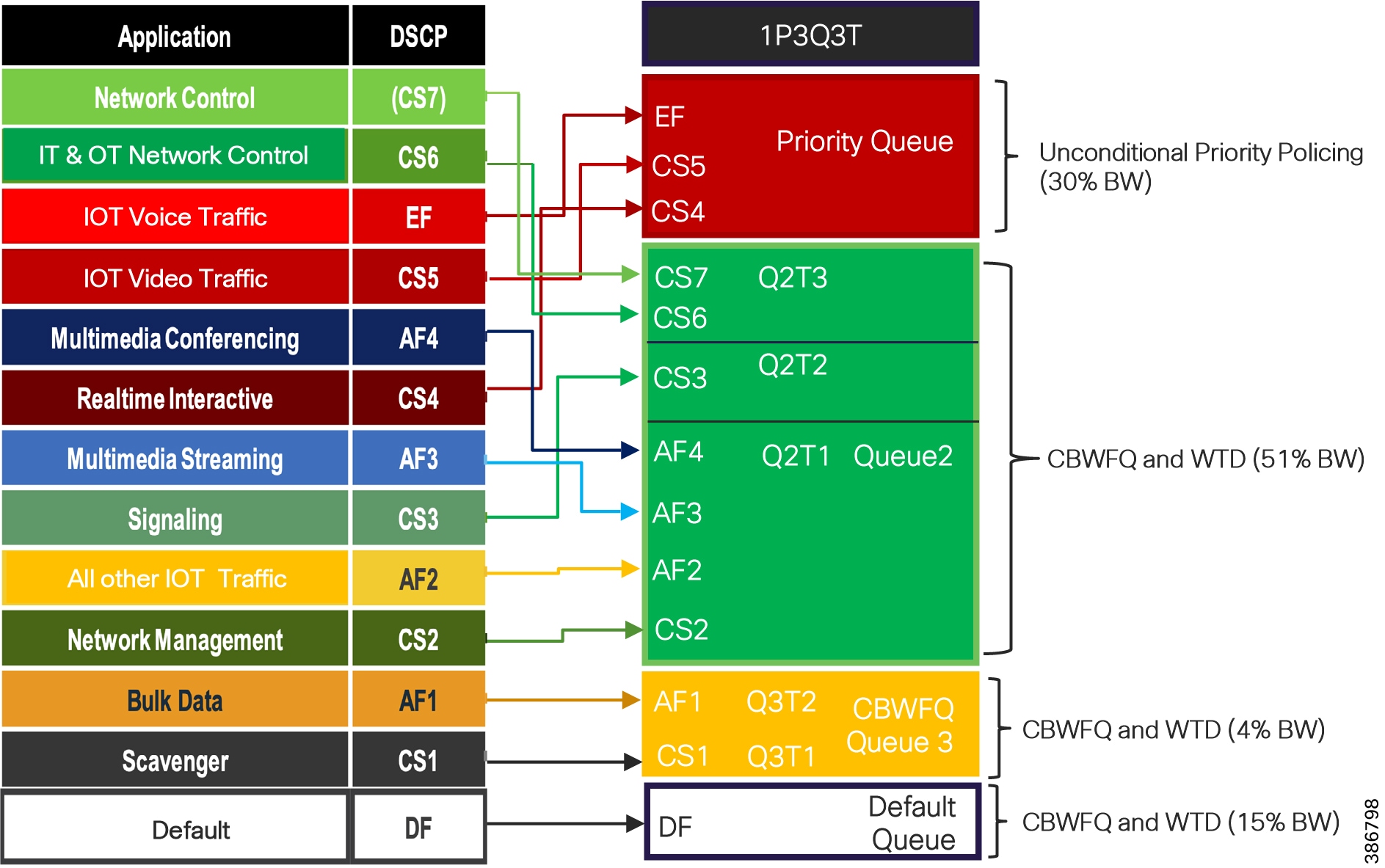

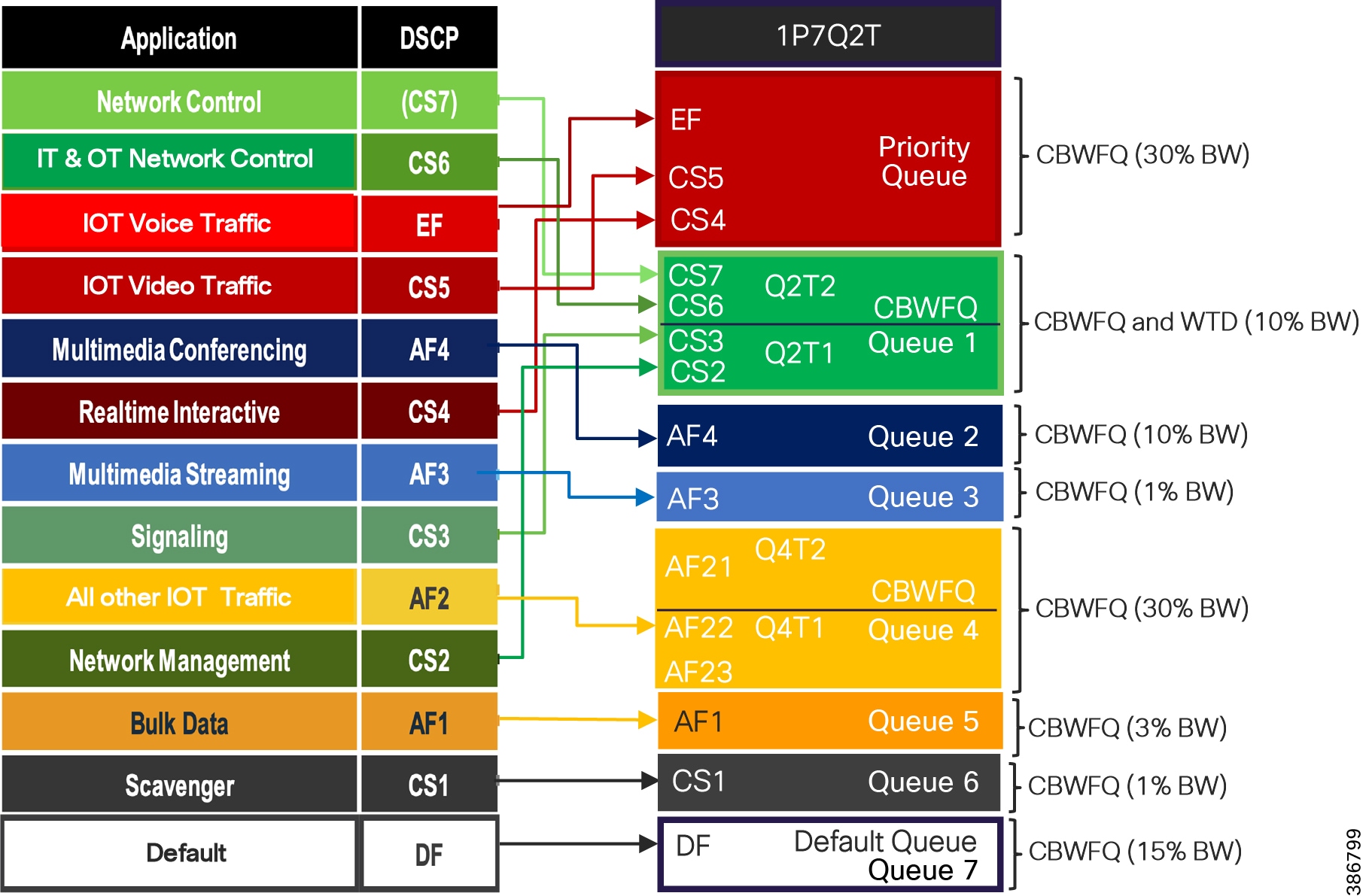

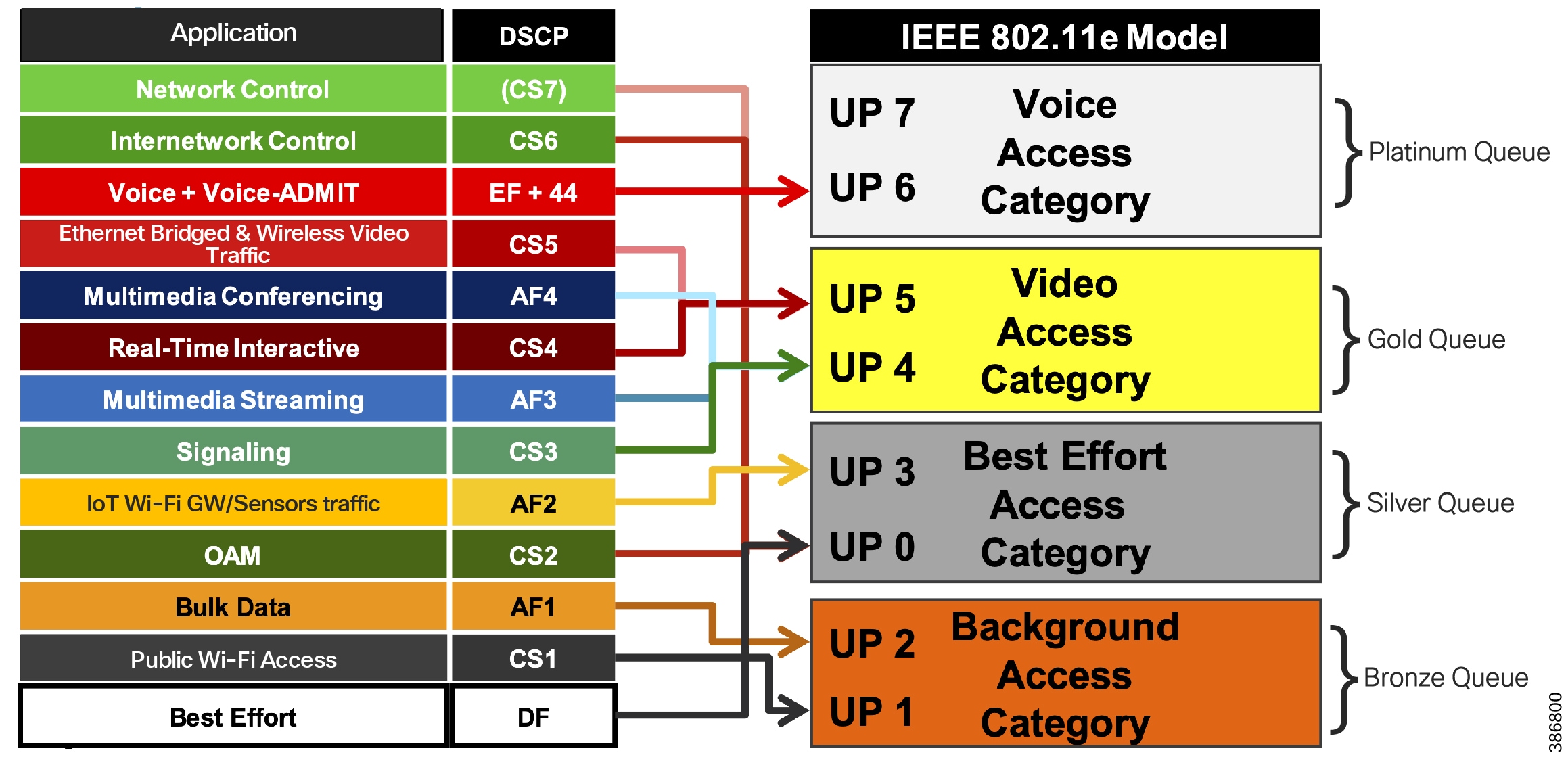

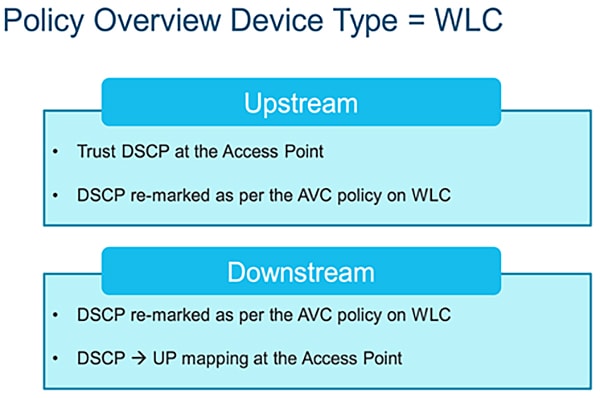

- Enhanced End-to-End QoS design on IE3400 and IE3300 10G

- Enhanced Remote Point-of-Presence (RPoP) Management design

–![]() IR-1800 as RPoP gateway with multi-service and macro-segmentation at RPoP

IR-1800 as RPoP gateway with multi-service and macro-segmentation at RPoP

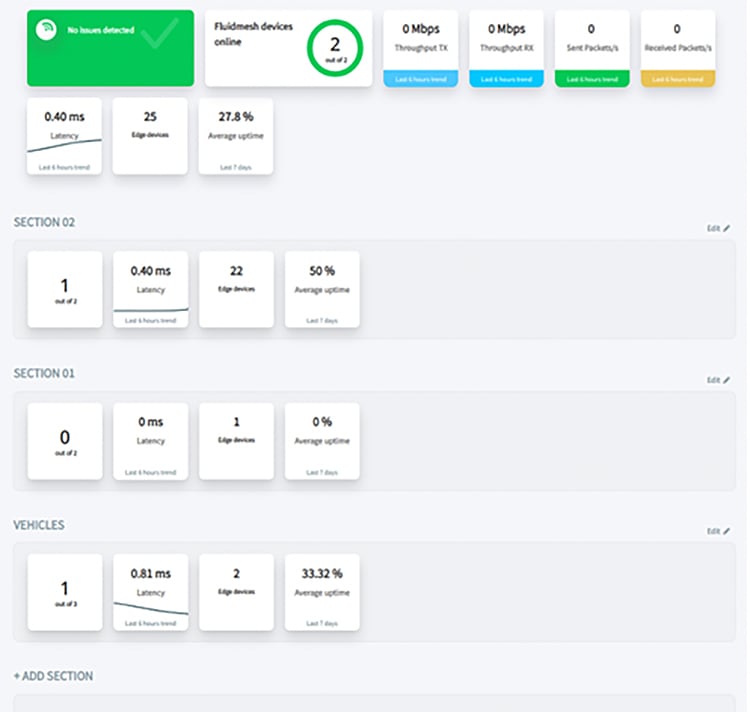

–![]() RPoP Management Design using Cisco DNA Center and Cisco IoT Operations Dashboard (IoTOD)

RPoP Management Design using Cisco DNA Center and Cisco IoT Operations Dashboard (IoTOD)

References

For associated deployment and implementation guides, related Design Guides, and white papers, see the following pages:

- Cisco Connected Communities Infrastructure - Cities Solution: www.cisco.com/c/en/us/td/docs/solutions/Verticals/CCI/CCI/DG/Cities/cci-dg/cci-dg.html

- Cisco Cities and Communities Infrastructure - Roadways Solution: www.cisco.com/c/en/us/td/docs/solutions/Verticals/CCI/CCI/DG/Roadways/cci-dg_roadways/cci-dg_roadways.html

- Cisco Connected Communities Infrastructure - Rail Solution: www.cisco.com/c/en/us/td/docs/solutions/Verticals/CCI/CCI/DG/Rail/cci-dg_rail/cci-dg_rail.html

- Cisco Cities and Communities: https://cisco.com/go/smartconnectedcommunities

- Cisco Connected Roadways: https://cisco.com/go/connectedroadways

- Cisco Connected Community Infrastructure Design Guides: https://www.cisco.com/go/designzone

- Cisco IoT Solutions Design Guides: https://www.cisco.com/go/iotcvd

Customers and partners with an appropriate Cisco Account (CCO account) can access additional CCI sales collaterals and technical presentations via the CCI Sales Connect hub: https://salesconnect.cisco.com/#/program/PAGE-15434.

Document Organization

The following table describes the chapters in this document:

Solution Overview

This chapter includes the following major topics:

Cisco Connected Communities Infrastructure

The Cisco CCI Cisco Validated Design (CVD) is a network for Campus/Metropolitan area/Geographic region/Roadways. It delivers an Intent-based Networking solution by leveraging Cisco's Software-defined Access (SD-Access) with the Cisco DNA Center management and Identity Services Engine (ISE), along with ruggedized edge hardware, to enable a scalable, segmented, and secure set of services to be deployed:

- Overlay network(s) for segmentation and policy enforcement

- Underlay network for basic IP forwarding and connectivity

- Access to the Overlay Fabric via Industrial Ethernet (IE) switches as Extended Nodes (EN) and Policy Extended Nodes (PEN)

- Services delivered are a mix of standard enterprise and IoT specialized

- Deployable in modules

- Incorporating multiple access technologies, specifically:

–![]() Wired Ethernet including Fiber, Copper, Copper with PoE, and Copper via CURWB

Wired Ethernet including Fiber, Copper, Copper with PoE, and Copper via CURWB

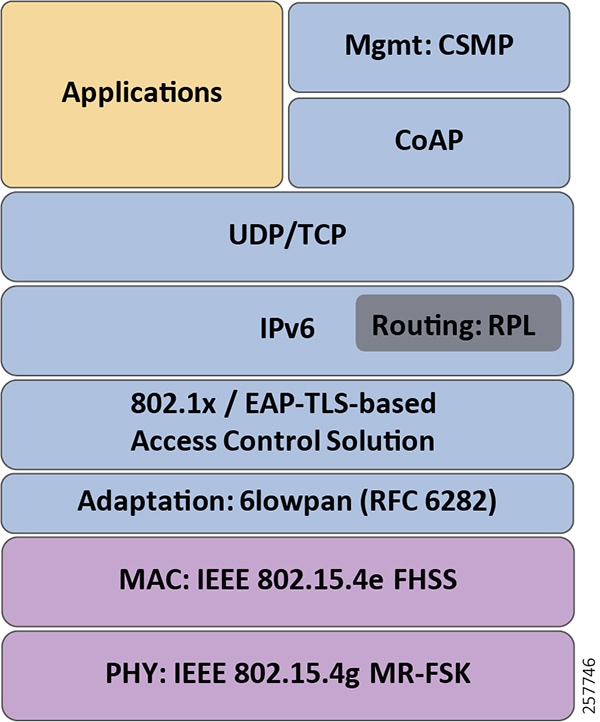

–![]() Cisco Resilient Mesh (CR-Mesh) / Wi-SUN

Cisco Resilient Mesh (CR-Mesh) / Wi-SUN

–![]() Vehicle-to-Infrastructure (V2X)

Vehicle-to-Infrastructure (V2X)

–![]() Multiprotocol Label Switching (MPLS)

Multiprotocol Label Switching (MPLS)

CCI Network Architecture

The CCI Network Architecture is a horizontal architecture. Instead of being in support of a specific, limited vertical set of use cases, CCI facilitates many different use cases and verticals. Some of these you will find examples in Connected Communities Infrastructure Cities, Roadways, and Rail Solutions Design Guides, but in general, CCI is non-prescriptive as to what applications and use cases customers can achieve using CCI.

The CCI Network Architecture helps customers design a multi-service network that can be distributed over a large geographical area with a single policy plane, offers multiple access technologies, and is segmented end-to-end.

CCI Unique Selling Points

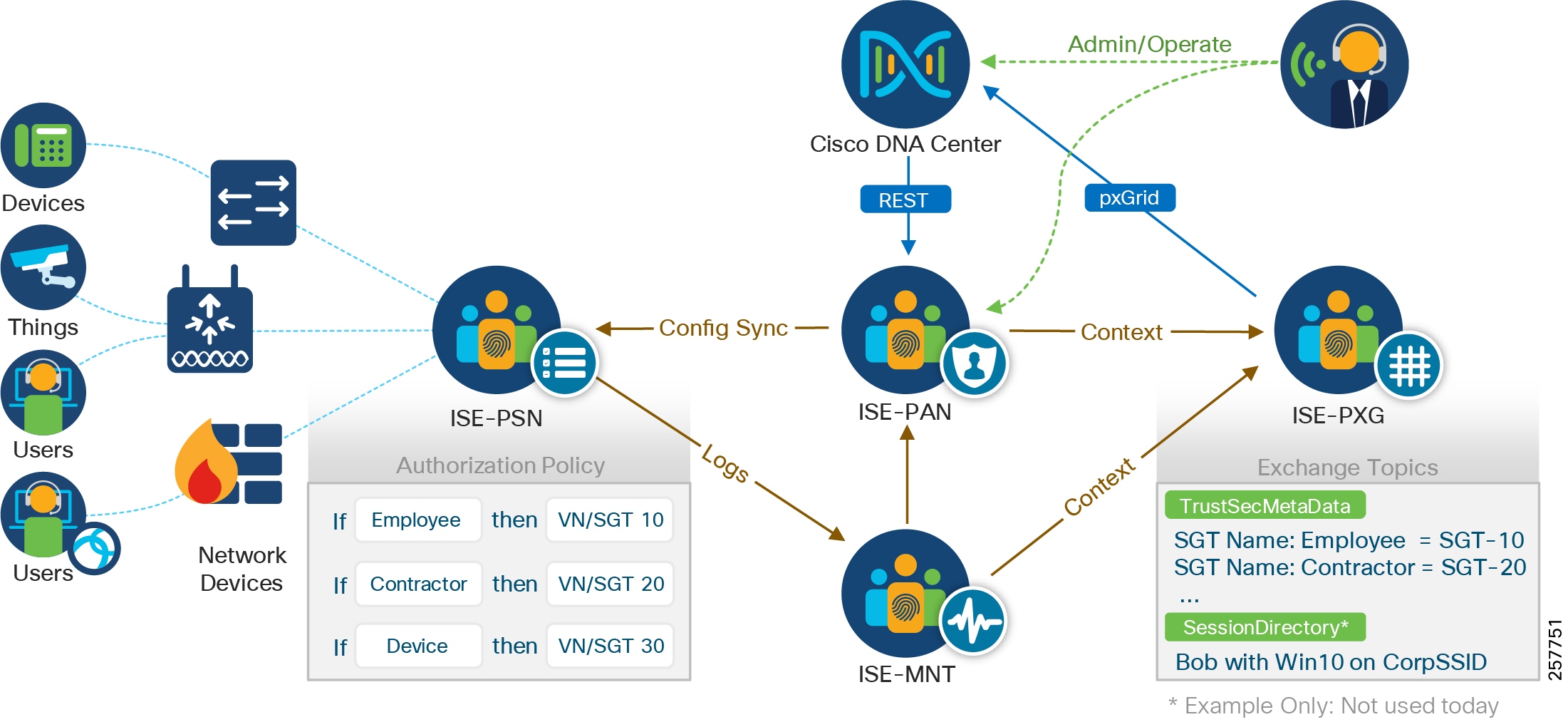

CCI leverages Cisco DNA Center to provide a next generation management experience: streamlining network device onboarding, providing security, and troubleshooting. In some use cases, additional management applications may also be used to provide a specialized management experience for example, Cisco Field Network Director (FND) or Actility ThingPark Enterprise.

CCI also leverages Cisco SD-Access and ISE with Scalable Group Tags (SGTs) to allow end-to-end network segmentation and policy control across multiple access technologies, various network devices, and physical locations. Cisco DNA Center and SD-Access together allow the customer to take an Intent-based Networking approach, which is to be concerned less with the IT networking and more with the operational technology/line-of-business (OT/LOB) requirements:

“I need to extend connectivity for smart parking to a different part of my city, but I want the existing policies to be used.” - CCI helps enable you to do this.

“I need to add a weather stations along my roadway, but they need to be segregated from the tolling infrastructure.” - CCI helps enable you to do this.

CCI gives you the end-to-end segmentation, made easy through Software-Defined Access, for provisioning, automation, and assurance at scale. Distributing IP subnets across a large geographical area is made simpler than ever before.

Solution Architecture

This chapter includes the following major topics:

- CCI Overall Network Architecture

- CCI Major Building Blocks

- CCI's Cisco Software-Defined Access Fabric

- Access Networks

CCI Overall Network Architecture

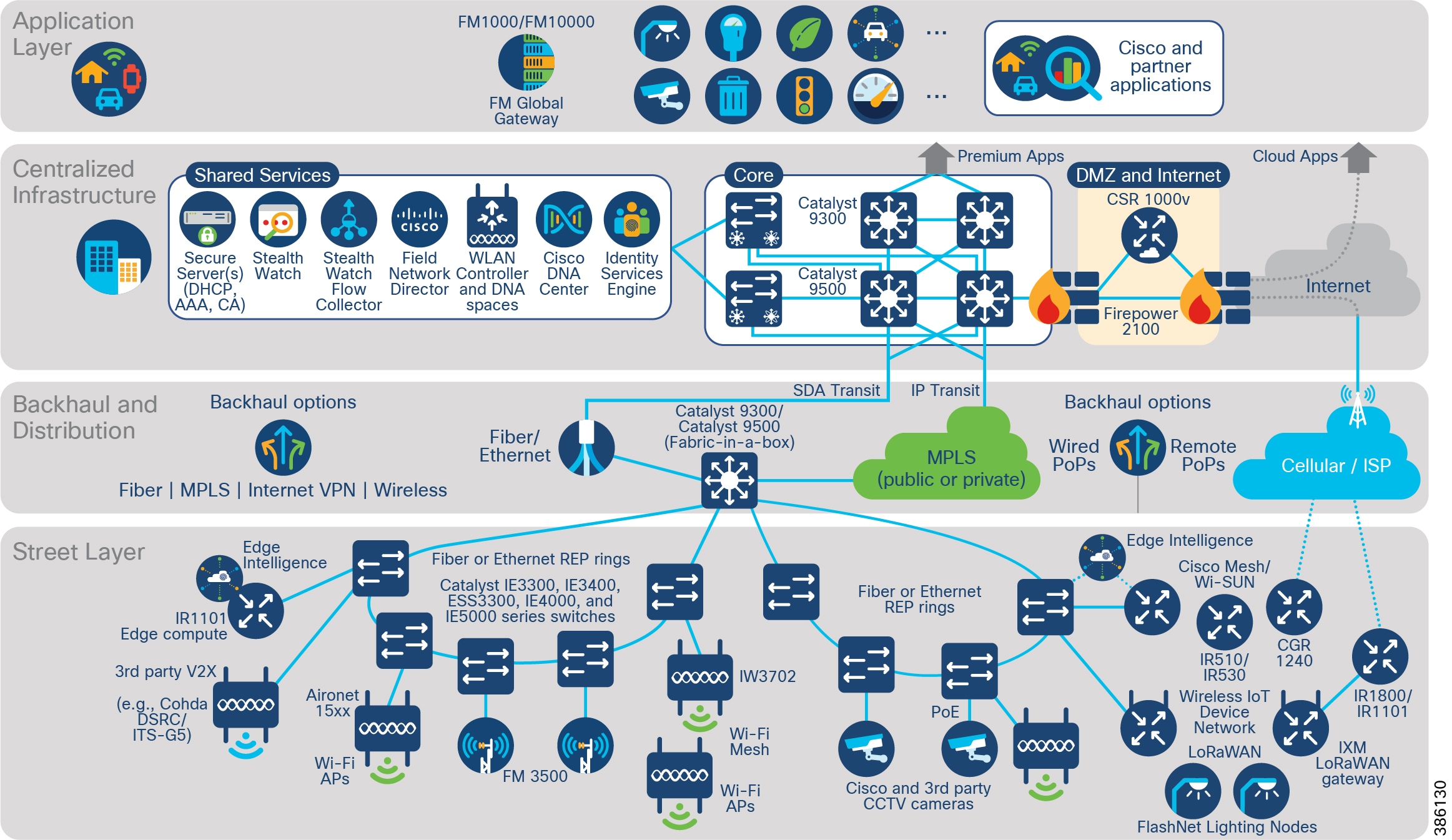

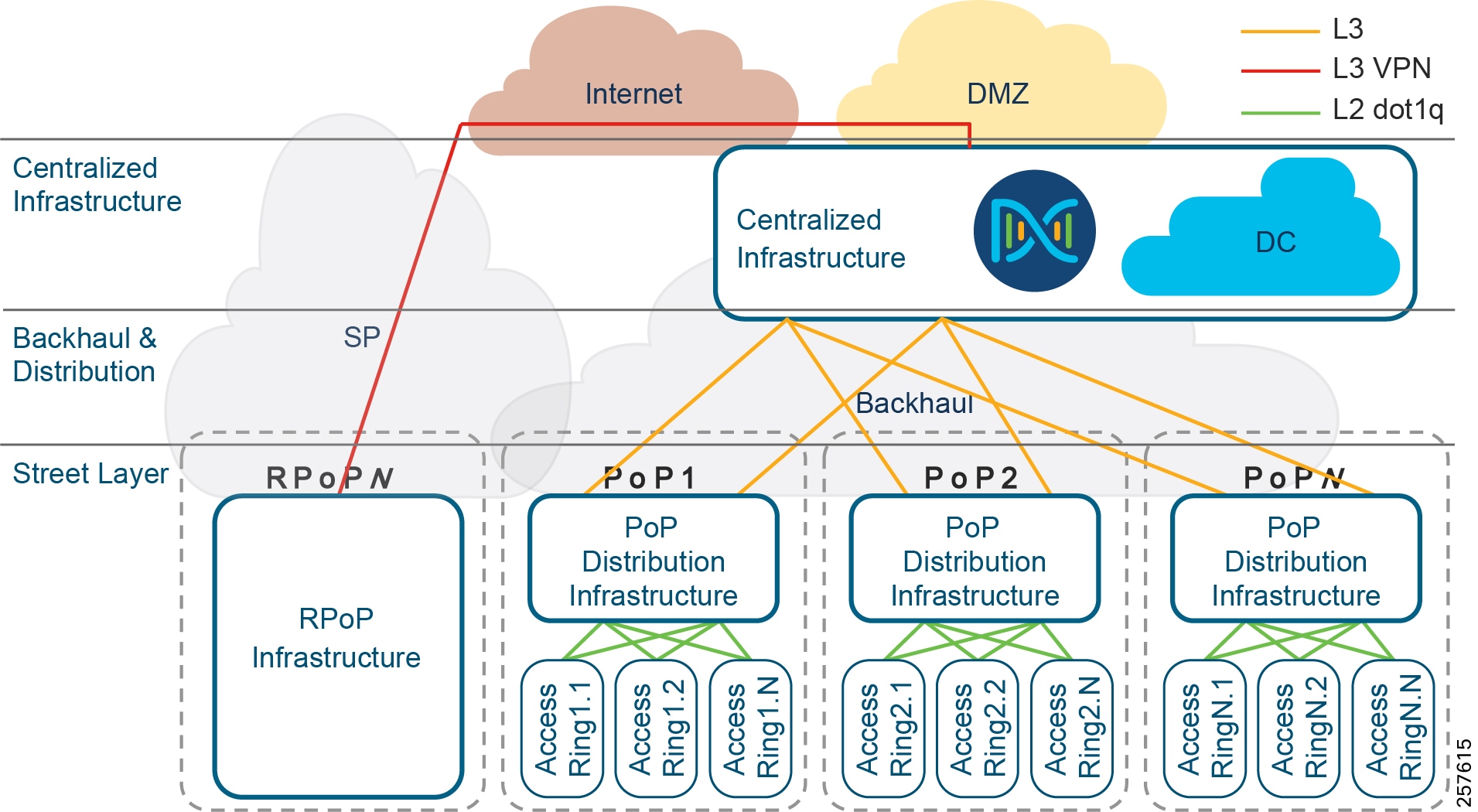

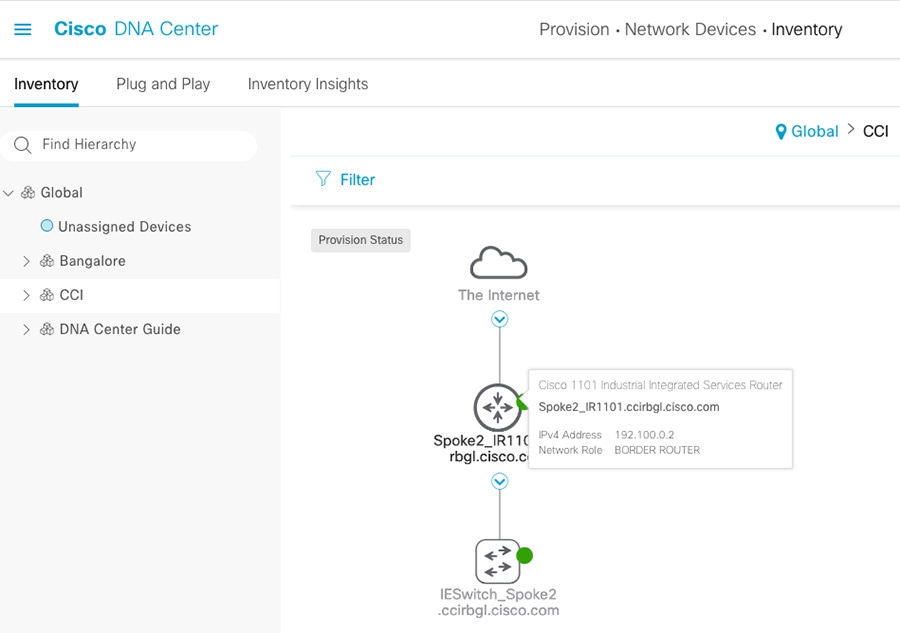

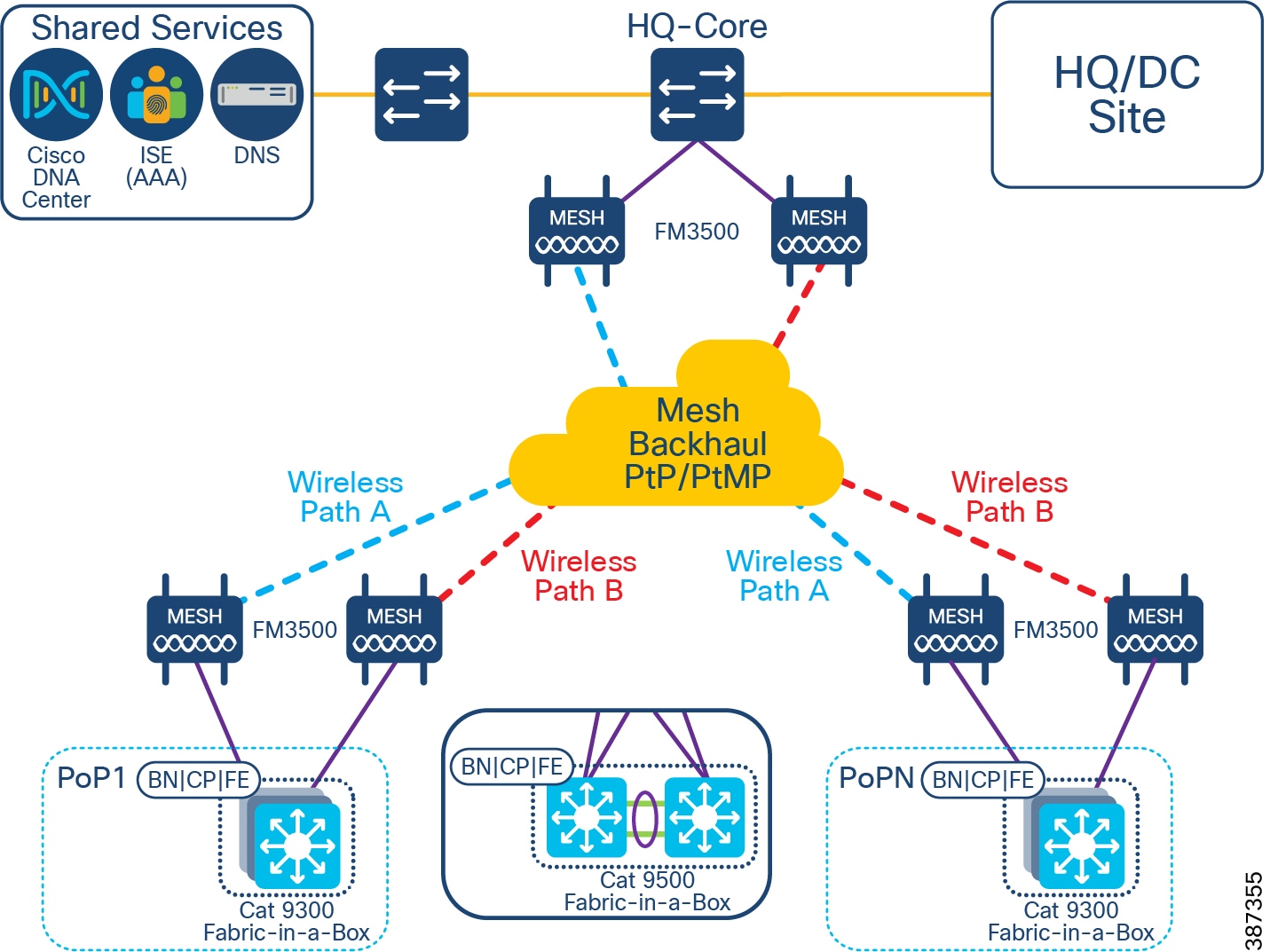

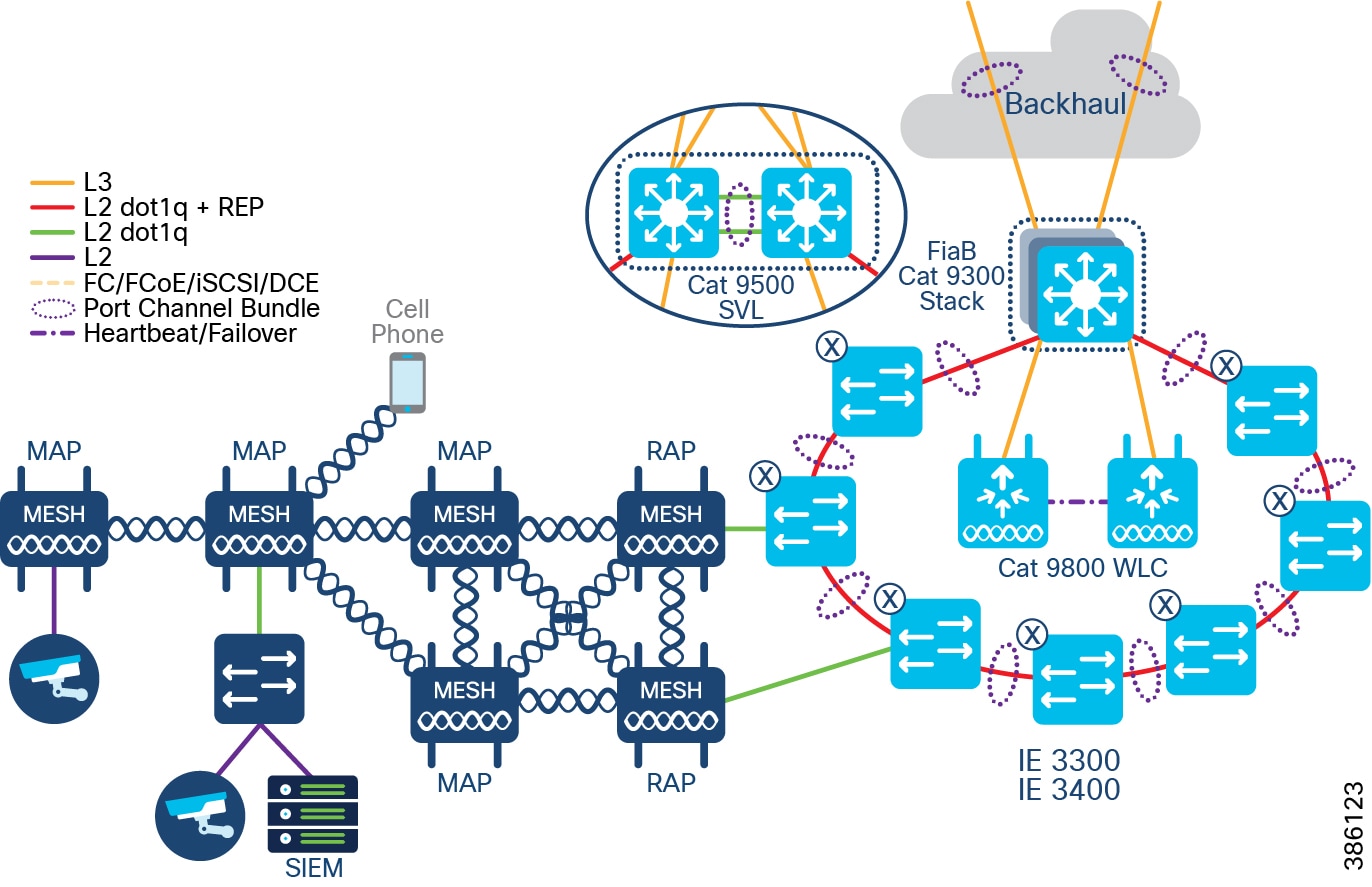

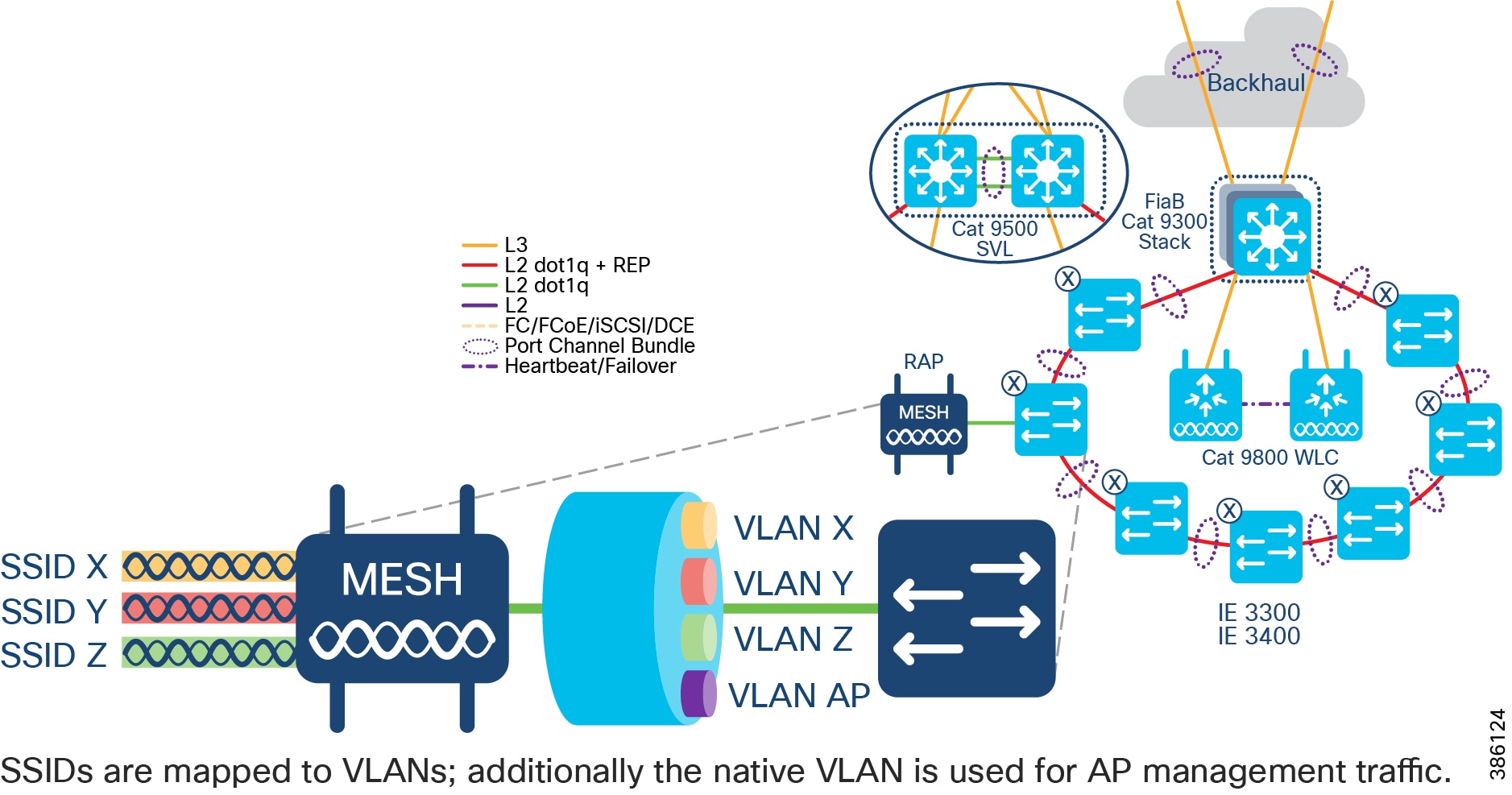

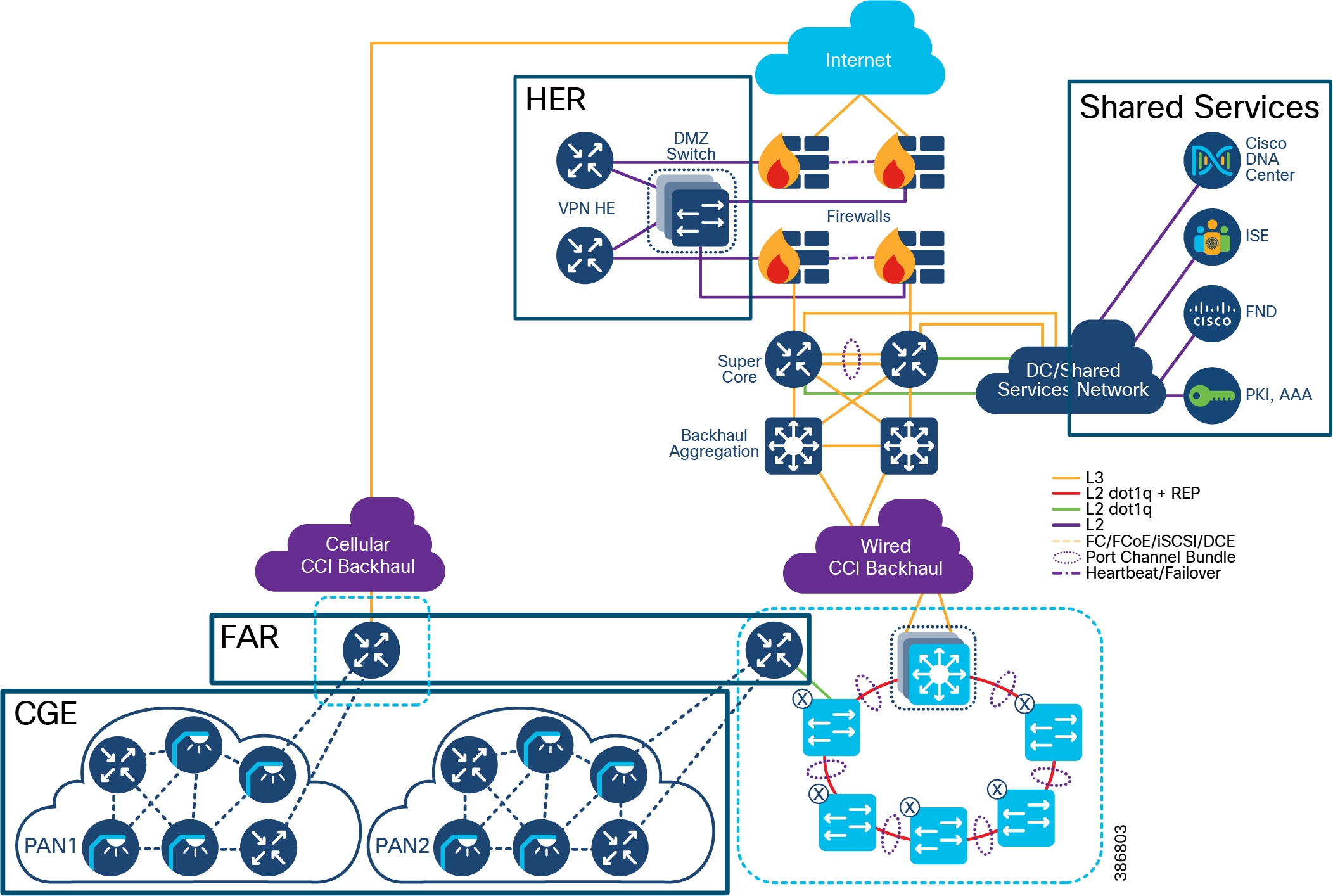

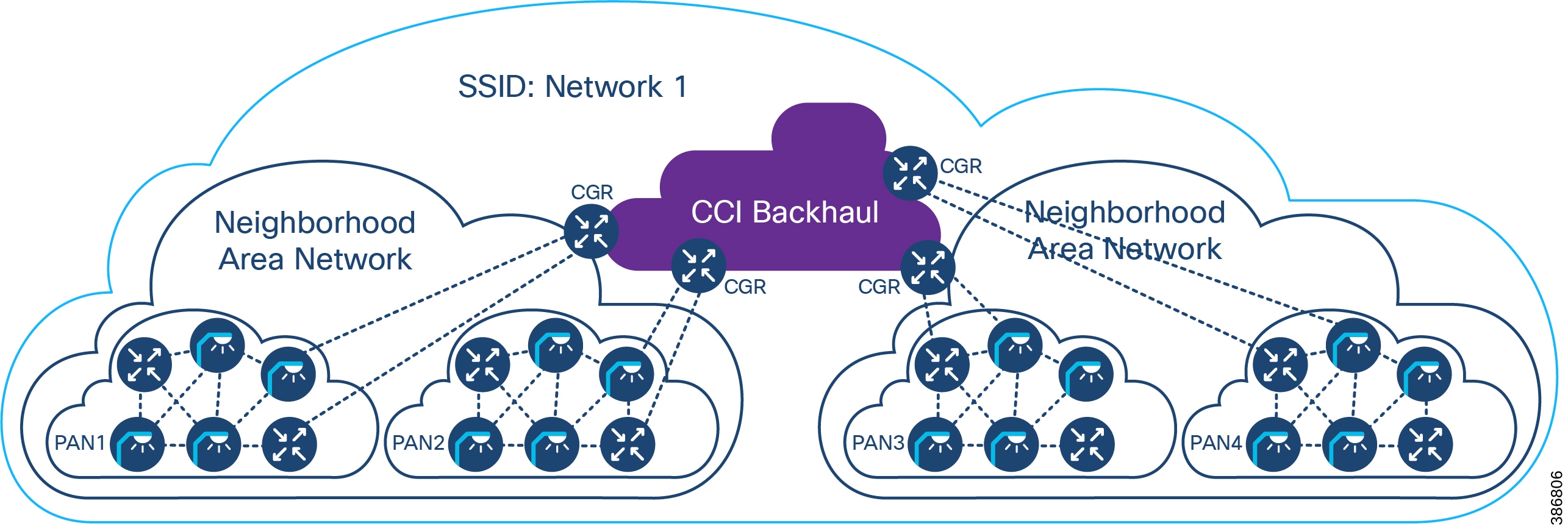

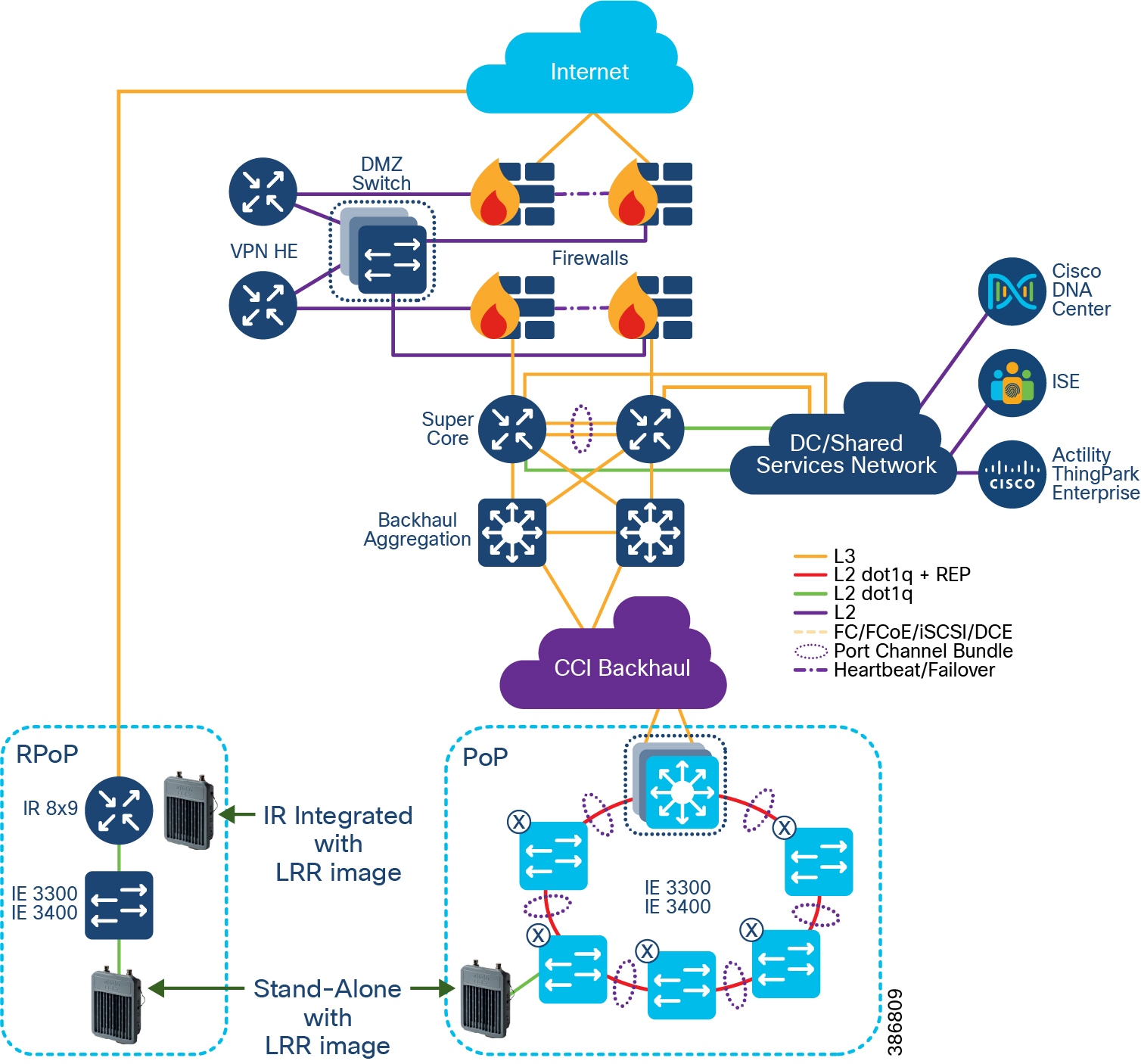

CCI comprises the building blocks shown in CCI Network Architecture and CCI PoP and RPoP.

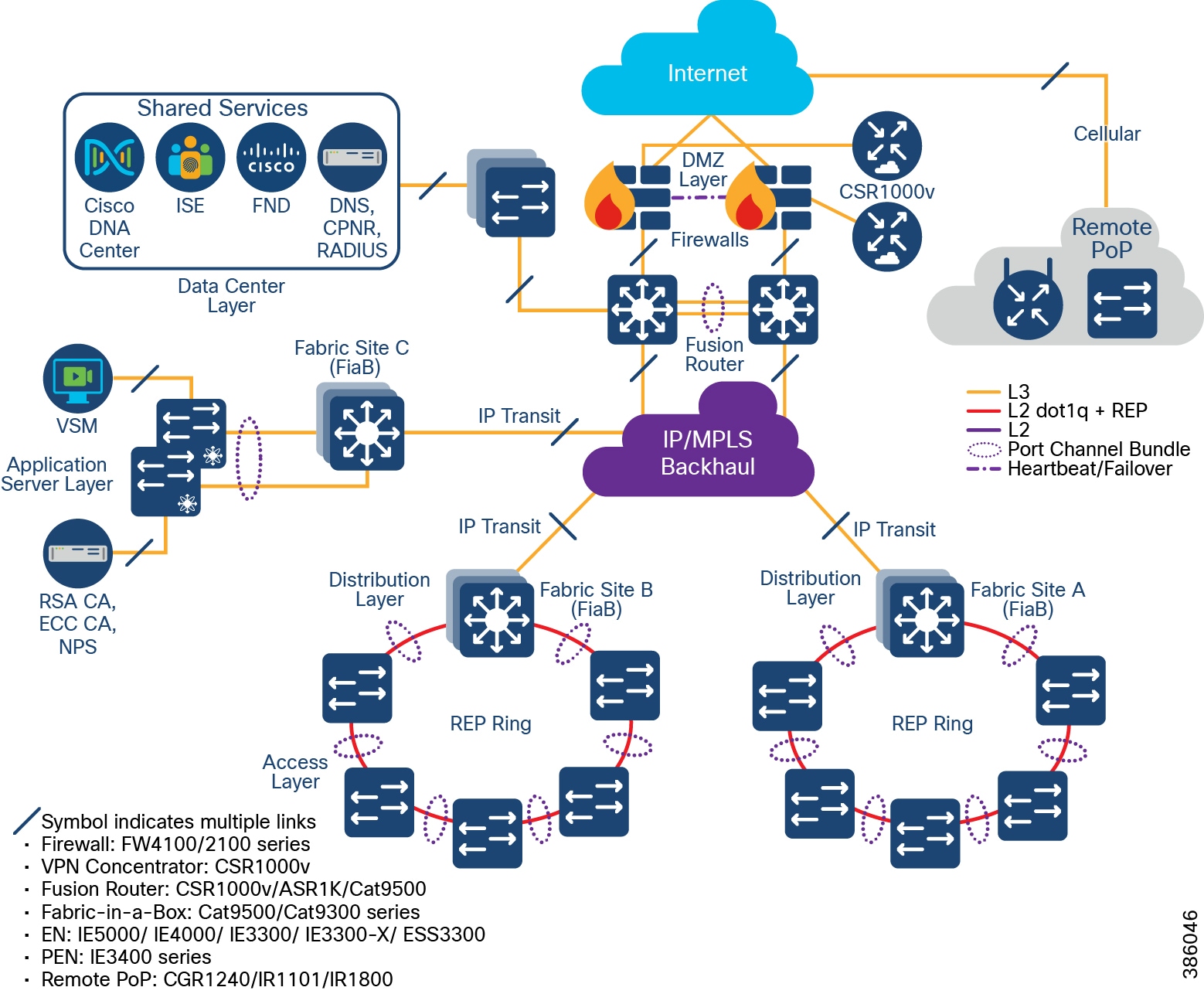

Figure 2 CCI Network Architecture

CCI Modularity

The intent of this CVD is to provide the reader with the best infrastructure guidance for where they are today. Each layer of the CCI architecture is designed to be consumed in modules. The reader only needs to deploy the access technologies that are relevant for them and can add other network access technologies as needed.

CCI brings intent-based networking out to fiber-connected locations (Points of Presence (PoPs)) and VPN-connected locations (Remote Points of Presence (RPoPs)); all of these locations connect back to some centralized infrastructure via a backhaul, which is where they also access the Internet.

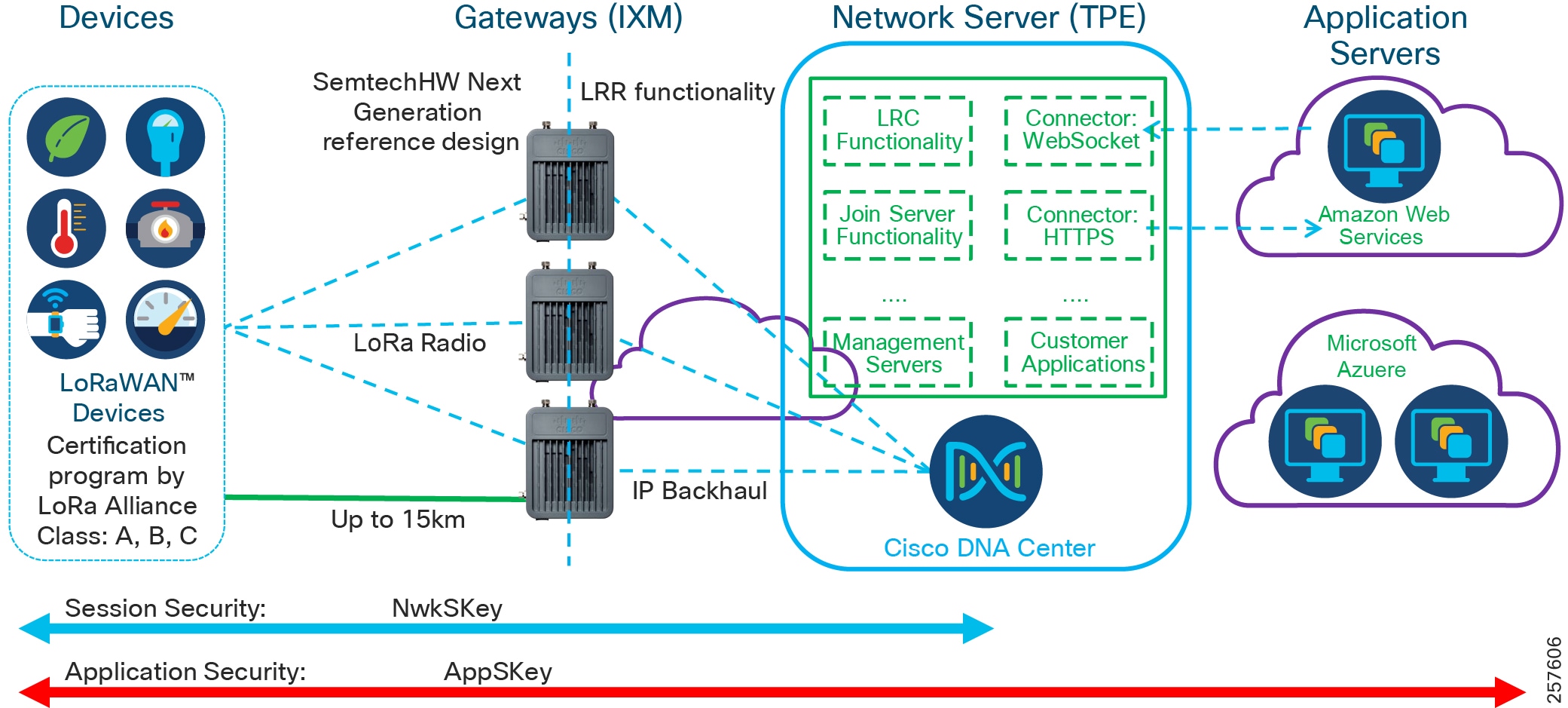

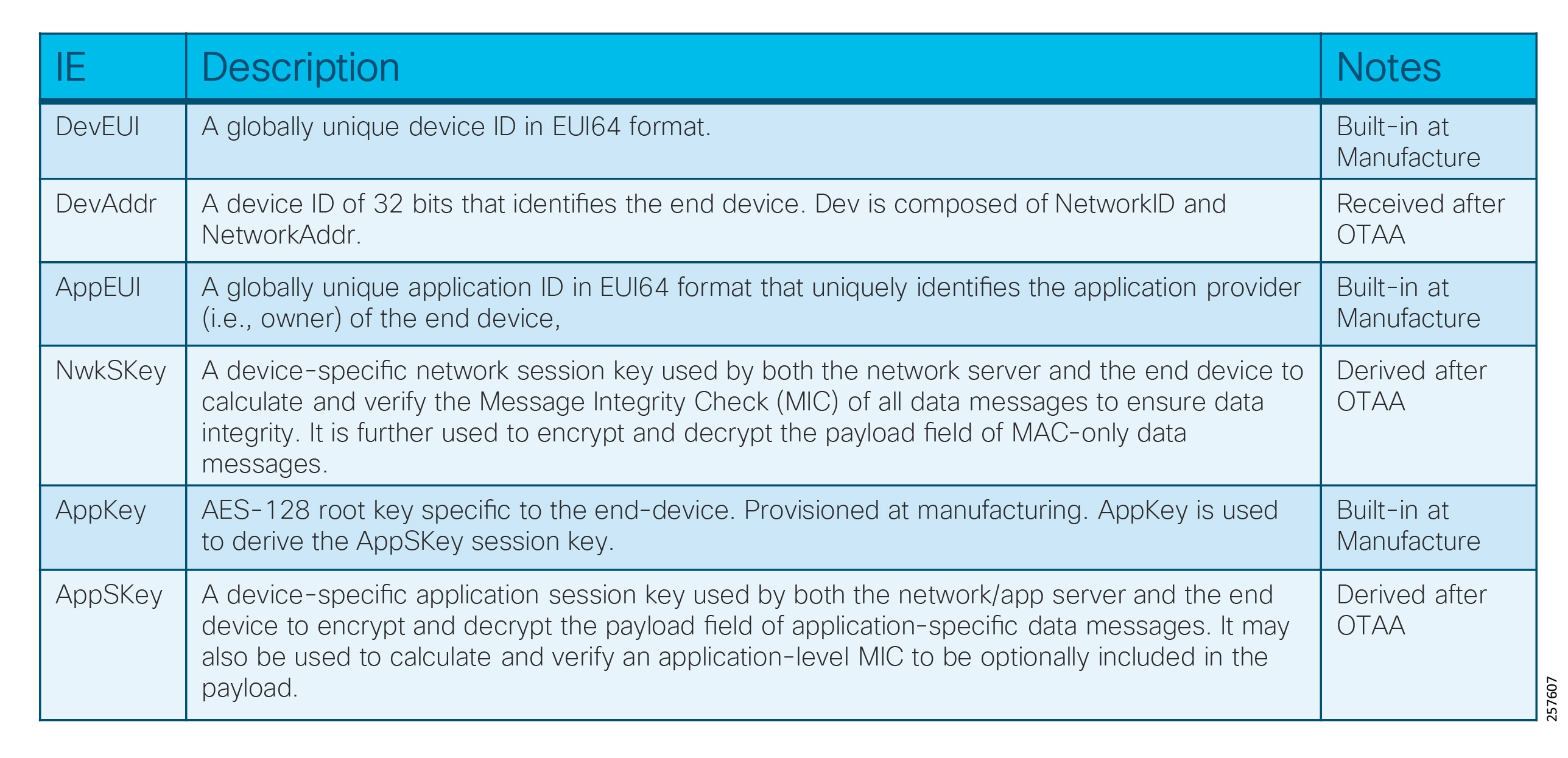

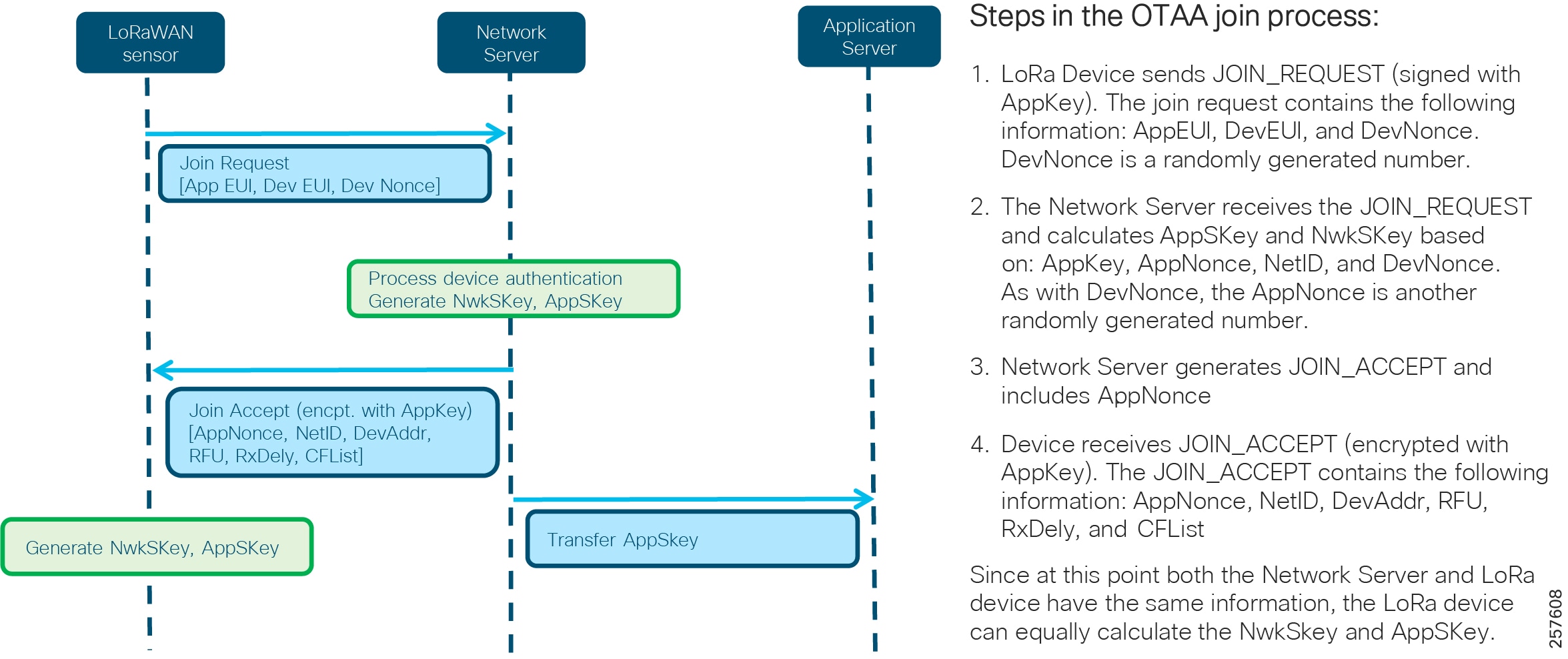

Additional access technologies, such as Wi-Fi, LoRaWAN, CR-Mesh and V2X, can similarly be implemented in a modular approach and will leverage the connectivity provided by CCIs PoPs and RPoPs.

CCI Major Building Blocks

With reference to CCI Network Architecture and CCI PoP and RPoP, what follows is a detailed description of the major building blocks of which CCI is comprised, in terms of the functions, the quantities, the hardware, and interconnection between blocks.

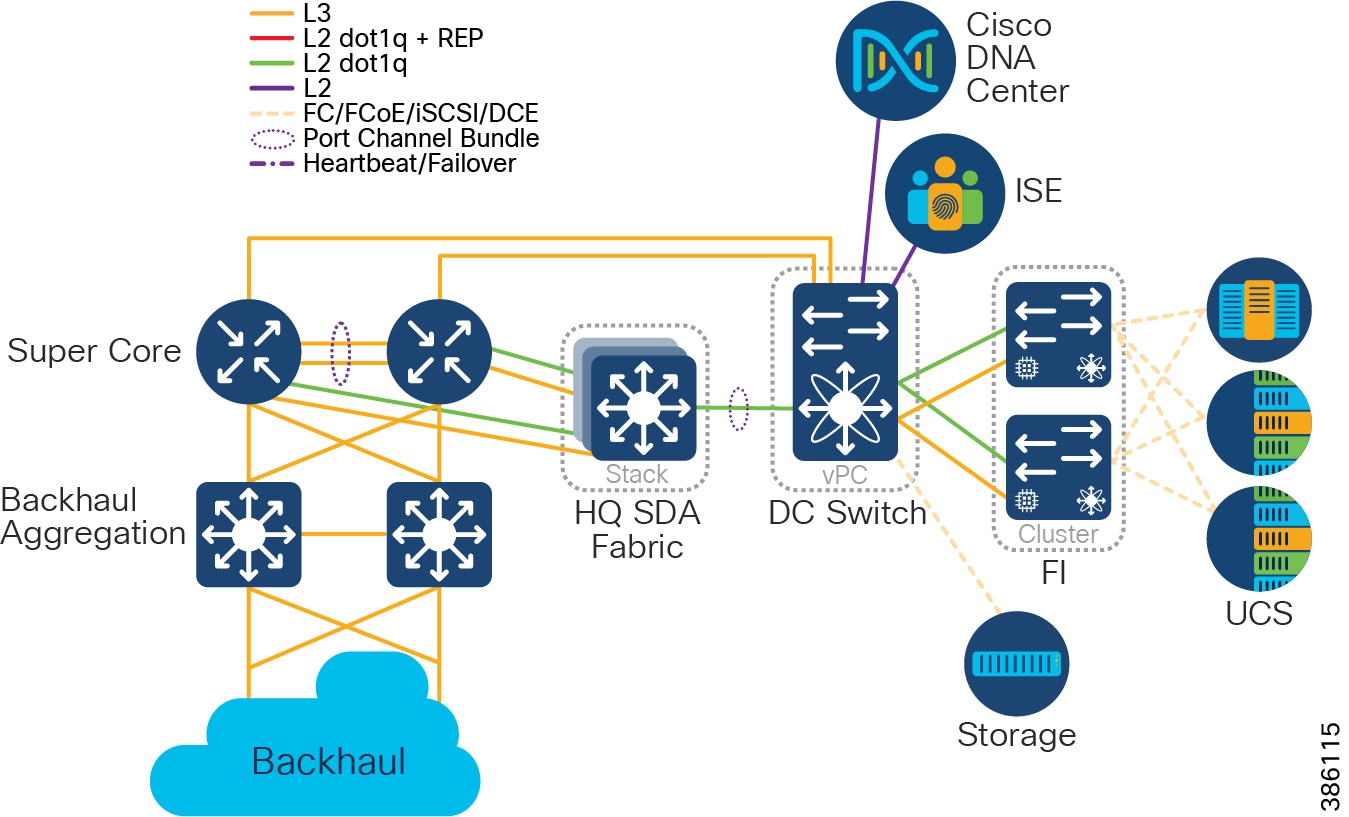

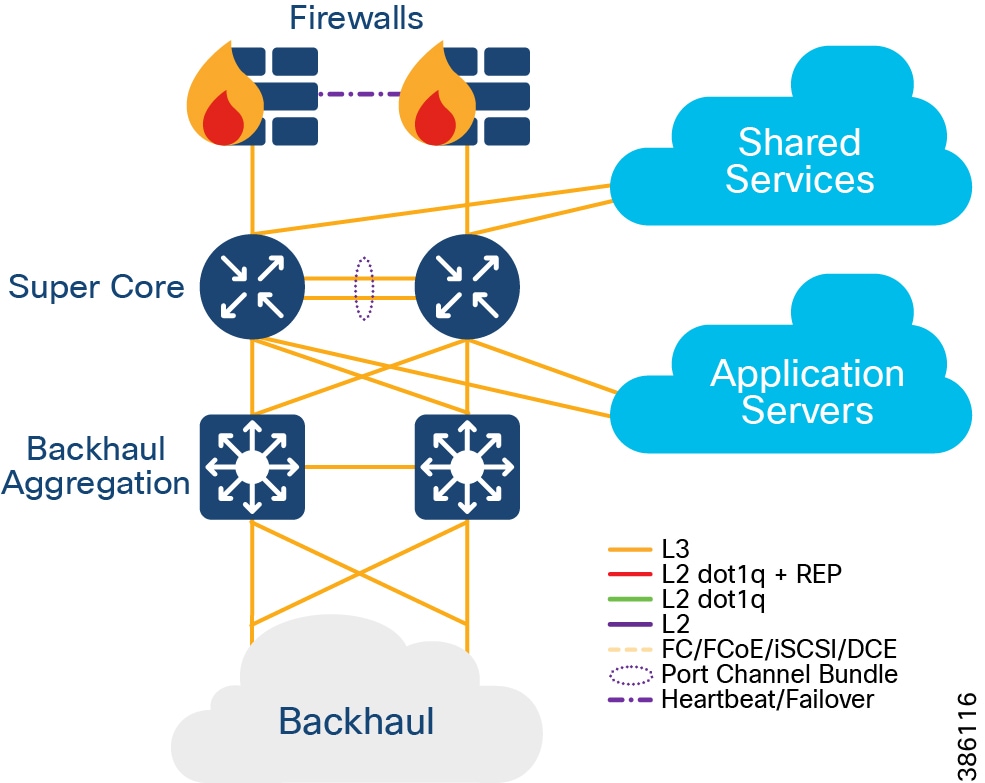

Centralized Infrastructure

Qty 1 of Centralized Infrastructure:

Designs are based on a centralized infrastructure at a single physical site/location. CCI 2.1 works within the boundaries and design rules for SD-Access 2.2.3.3. For more information, please refer to the Cisco Validated Design Software-Defined Access Design Guide at the following URL:

The Centralized Infrastructure is comprised of:

- Cisco UCS 6300 Series Fabric Interconnects (FI) (as resilient pair(s) to provide Data Communications Equipment (DCE) and management of Cisco Unified Computing System (UCS).

- Cisco Nexus 5600 converged DC switches to provide Fiber Channel (FC), Fiber Channel over Ethernet (FCoE), and IP.

- Cisco UCS B and C-series servers connected at a minimum of 10Gbps to FIs.

- Storage, connected at a minimum of 8Gbps to Nexus, via FC, FCoE, or Internet Small Computer Systems Interface (iSCSI).

Note: Application Layer may optionally be entirely delivered from the Public Cloud; if so, no on-premises Application Server infrastructure is required.

- Qty 1 of Super Core, which is comprised of a pair of suitably sized Layer 3 boxes, which provide resilient core and fusion routing capabilities; note that these may be switches even though they are routing.

–![]() The Super Core connects to multiple components, and this should be as resilient ≥ 10Gbps L3 links:

The Super Core connects to multiple components, and this should be as resilient ≥ 10Gbps L3 links:

- Shared Services

- DMZ and Internet

- Application Servers

- Point of Presence (PoP) Backhaul

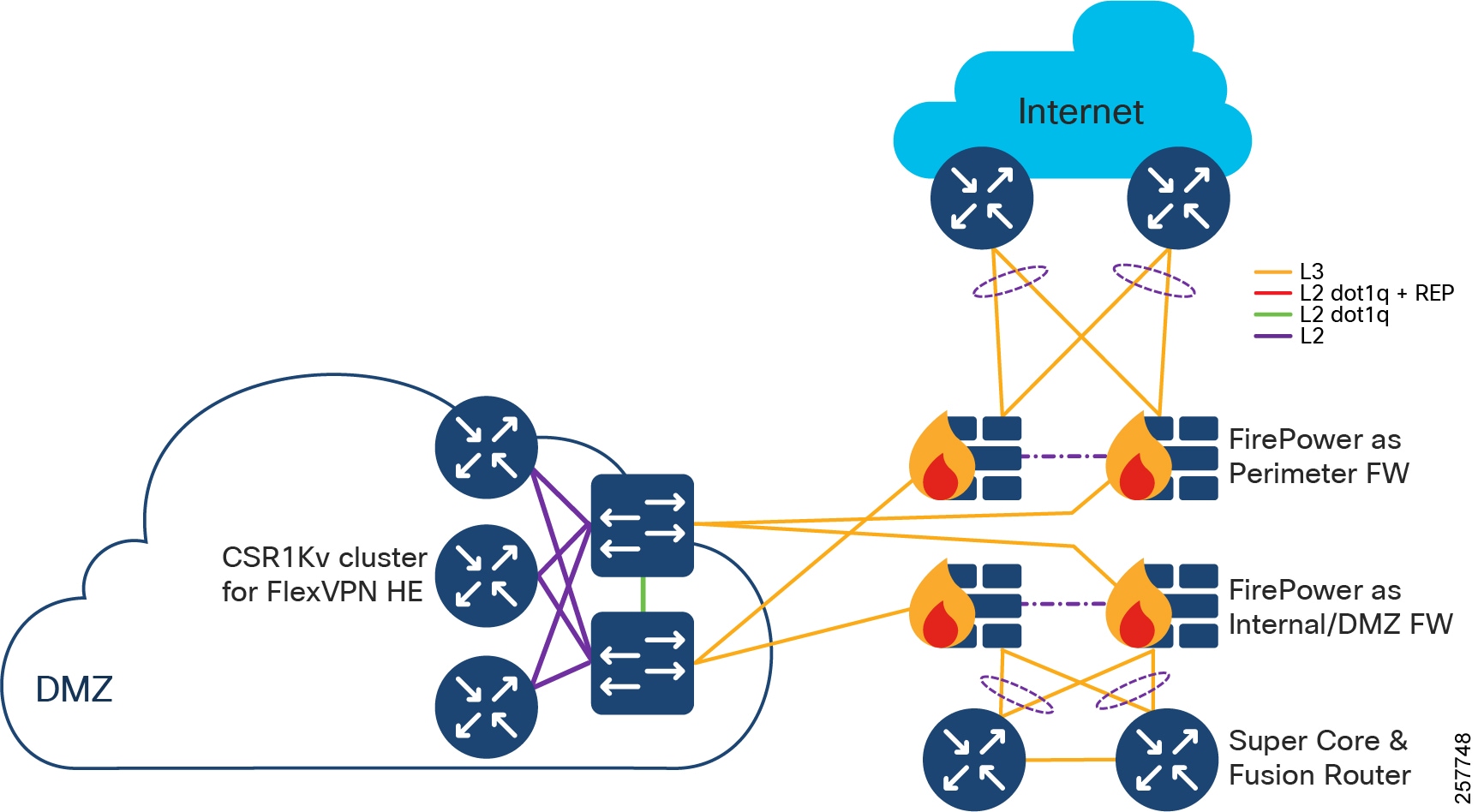

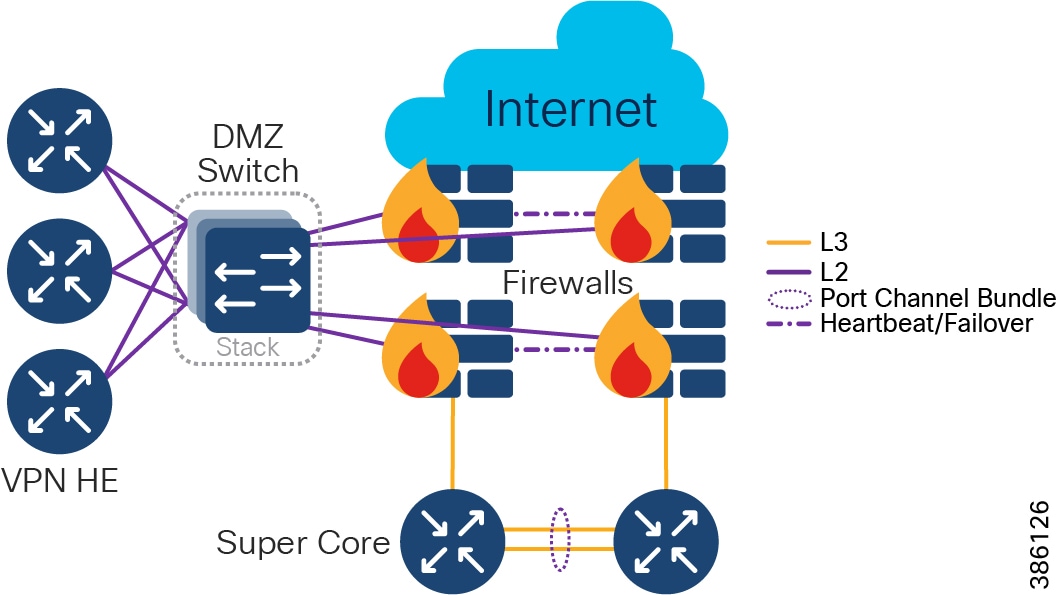

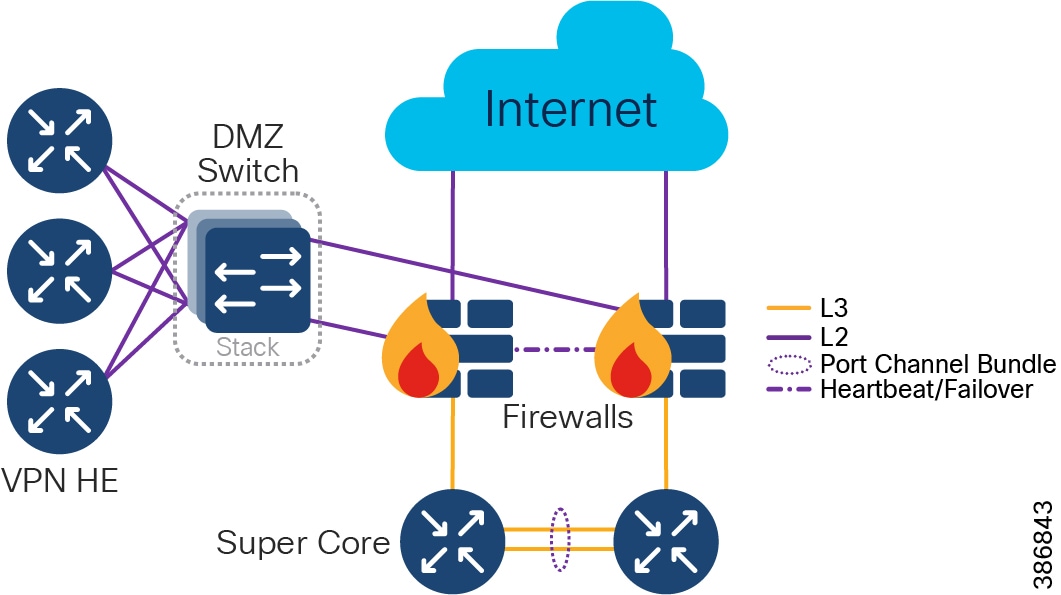

- Qty 1 of De-militarized Zone (DMZ) and Internet:

–![]() DMZ is comprised of resilient pairs/clusters of firewalls on both the Internet and DMZ sides, and also a resilient pair/cluster of IPSec headend routers for FlexVPN tunnel termination:

DMZ is comprised of resilient pairs/clusters of firewalls on both the Internet and DMZ sides, and also a resilient pair/cluster of IPSec headend routers for FlexVPN tunnel termination:

- DMZ can optionally contain other servers/appliances that are required by customer for various use cases.

–![]() Qty 1 of Internet connection:

Qty 1 of Internet connection:

- Internet should ideally connect from two different ISPs, or separate A and B connections from a single ISP.

- Qty 1 of Shared Services:

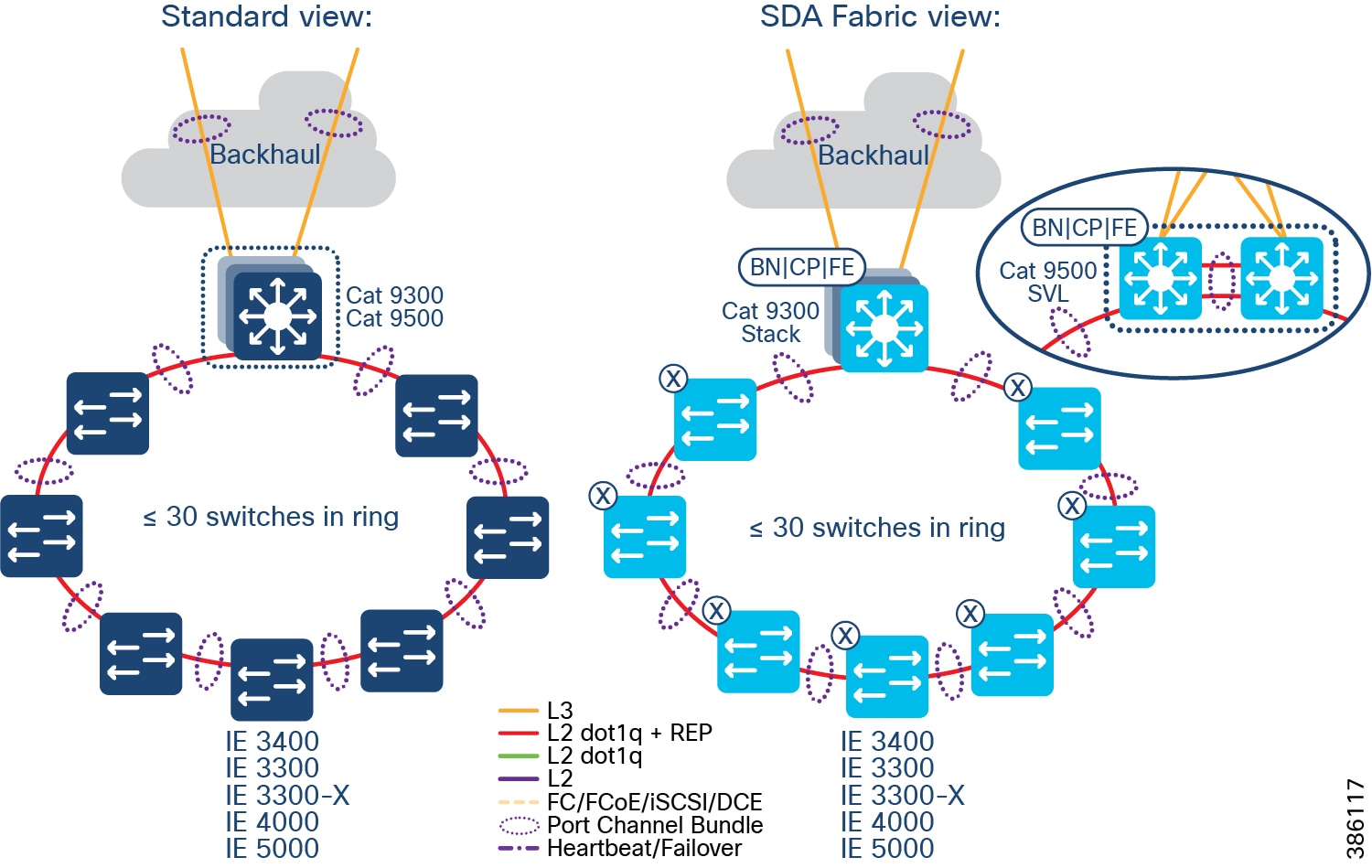

Point of Presence (PoP)

Qty ≤ 499 of Point(s) of Presence

PoPs are typically required, although in some deployments of CCI no PoPs may be required. Note that, a CCI deployment may consist entirely of Remote PoPs (RPoPs) if all-cellular connectivity is used for backhaul.

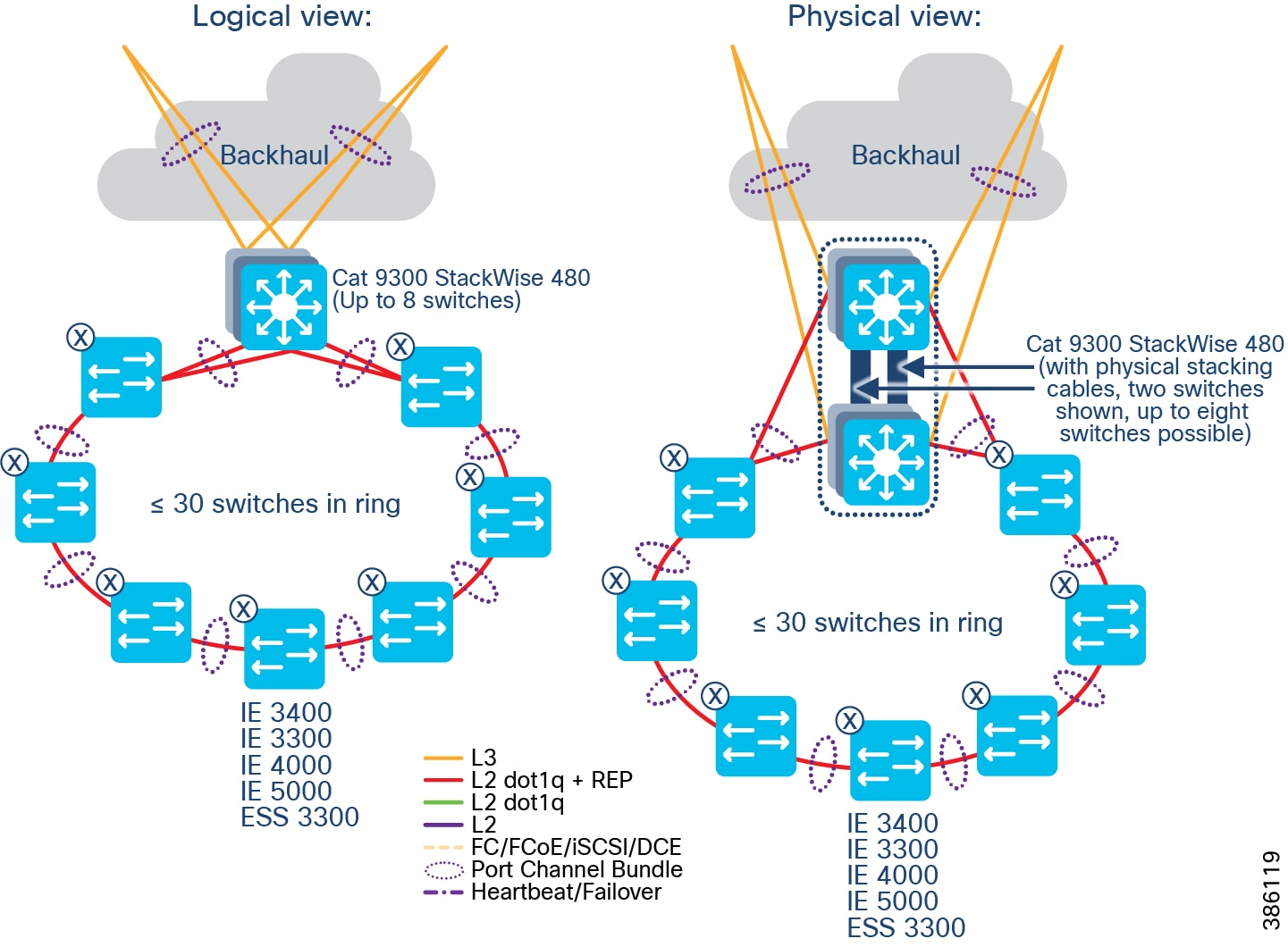

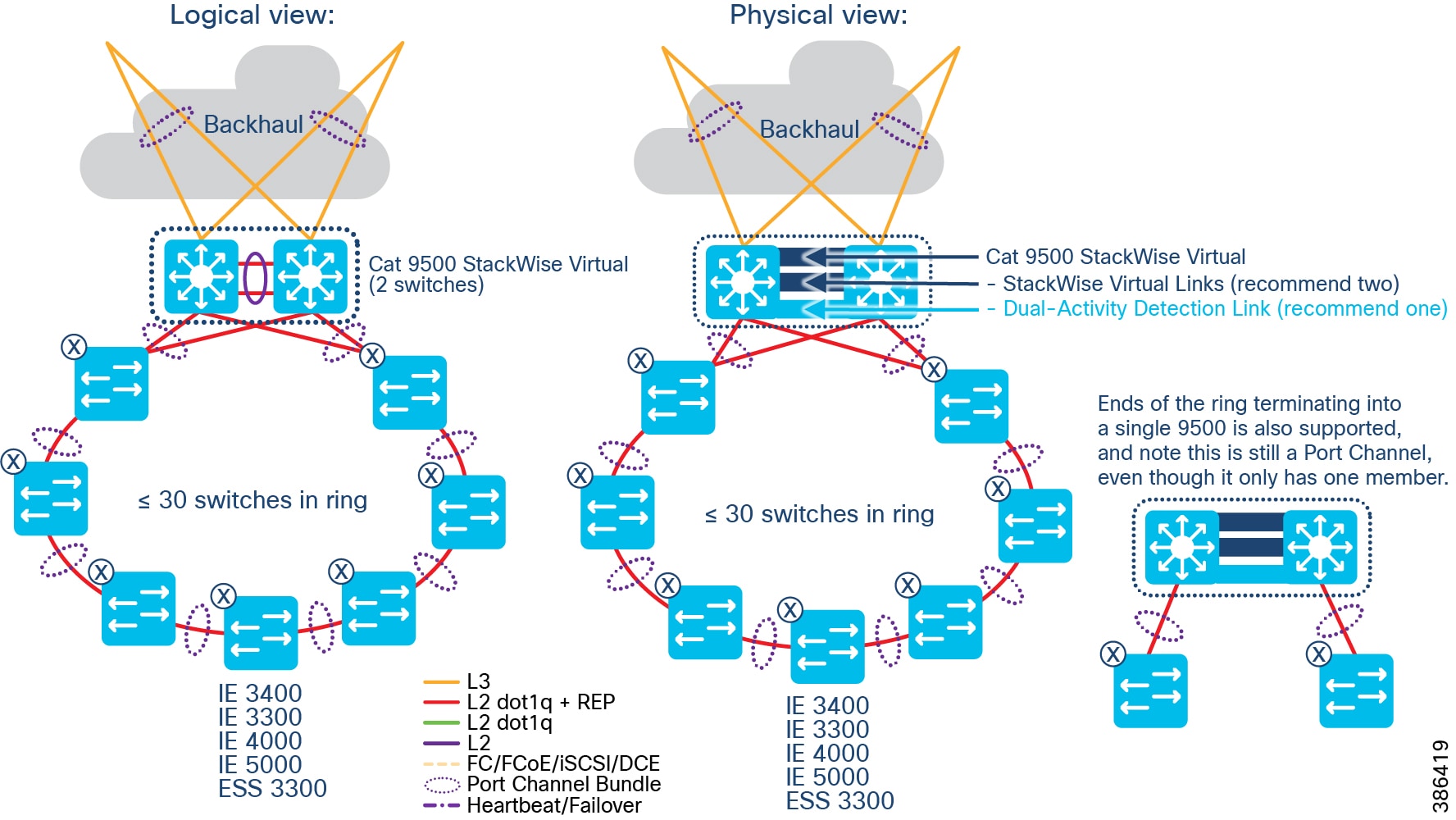

Points of Presence are comprised of:

–![]() Distribution Infrastructure is comprised of Cisco Catalyst 9000-series switches that are capable of being Fabric in a Box (FiaB); typically 2 x Catalyst 9300 in a physical stack or 2 x Catalyst 9500 switches in a virtual stack (n.b. only the non-High-performance variants of the Catalyst 9500 family are supported).

Distribution Infrastructure is comprised of Cisco Catalyst 9000-series switches that are capable of being Fabric in a Box (FiaB); typically 2 x Catalyst 9300 in a physical stack or 2 x Catalyst 9500 switches in a virtual stack (n.b. only the non-High-performance variants of the Catalyst 9500 family are supported).

–![]() Multi-chassis EtherChannel (MEC) is employed for downlinks to Extended Nodes (ENs) and Policy Extended Nodes (PENs)

Multi-chassis EtherChannel (MEC) is employed for downlinks to Extended Nodes (ENs) and Policy Extended Nodes (PENs)

–![]() Layer 3 P2P uplinks used for connection to the backhaul:

Layer 3 P2P uplinks used for connection to the backhaul:

- to PE routers, in the case of IP Transit (likely SP MPLS)

- to (likely) Catalyst 9500s, in the case of SD-Access Transit, over dark fiber (or equivalent)

- Qty ≥ 1 Access Rings, which are comprised of:

–![]() Qty 1<29 Cisco Industrial Ethernet (IE) switches as extended nodes or policy extended nodes; these switches are either end of a closed Resilient Ethernet Protocol (REP) ring, plus

Qty 1<29 Cisco Industrial Ethernet (IE) switches as extended nodes or policy extended nodes; these switches are either end of a closed Resilient Ethernet Protocol (REP) ring, plus

–![]() IE switches are connected together in a closed ring topology via fiber or copper Small Form-Factor Pluggables (SFP).

IE switches are connected together in a closed ring topology via fiber or copper Small Form-Factor Pluggables (SFP).

–![]() Extended nodes and/or Policy Extended Nodes are connected to uplink Catalyst 9300 stack or Catalyst C9500 StackWise Virtual switches via fiber or copper:

Extended nodes and/or Policy Extended Nodes are connected to uplink Catalyst 9300 stack or Catalyst C9500 StackWise Virtual switches via fiber or copper:

- A ring can be comprised uniformly of all IE-3300, Cisco Embedded Services 3300 Series switches (ESS 3300), IE-4000, or IE-5000 switches, or a mixture of these switches; each operating as an Extended Node

- A ring can alternatively be comprised exclusively of all IE-3400 switches, these operating as Policy Extended Nodes.

- Note: A mix PENs and ENs in the same access ring is not supported.

- Per Points of Presence, nodes of the ring are either daisy-chained Extended nodes or daisy-chained Policy Extended Nodes provisioned through Cisco DNA Center. Please note that it is not possible to mix PENs and ENs in the same access ring.

- SR or LR SFPs can be used, giving fiber distances of <100m to 70km, with RGD optics allowing deployment in the -40 degrees centigrade +85 degrees centigrade temperature range.

Note: Although the SFPs have this operating temperature range, the real-world operating temperature range will be determined by several factors, including the operating temperature range of the switches they are plugged into.

- Different segments of a ring can be different physical lengths/distances and fiber types.

- Qty 2 of 10 Gigabit Ethernet SFP per switch for a 10 Gbps ring:

–![]() Extended nodes connected to uplink Catalyst 9300 stack or Catalyst C9500 StackWise Virtual switches via 10G fiber:

Extended nodes connected to uplink Catalyst 9300 stack or Catalyst C9500 StackWise Virtual switches via 10G fiber:

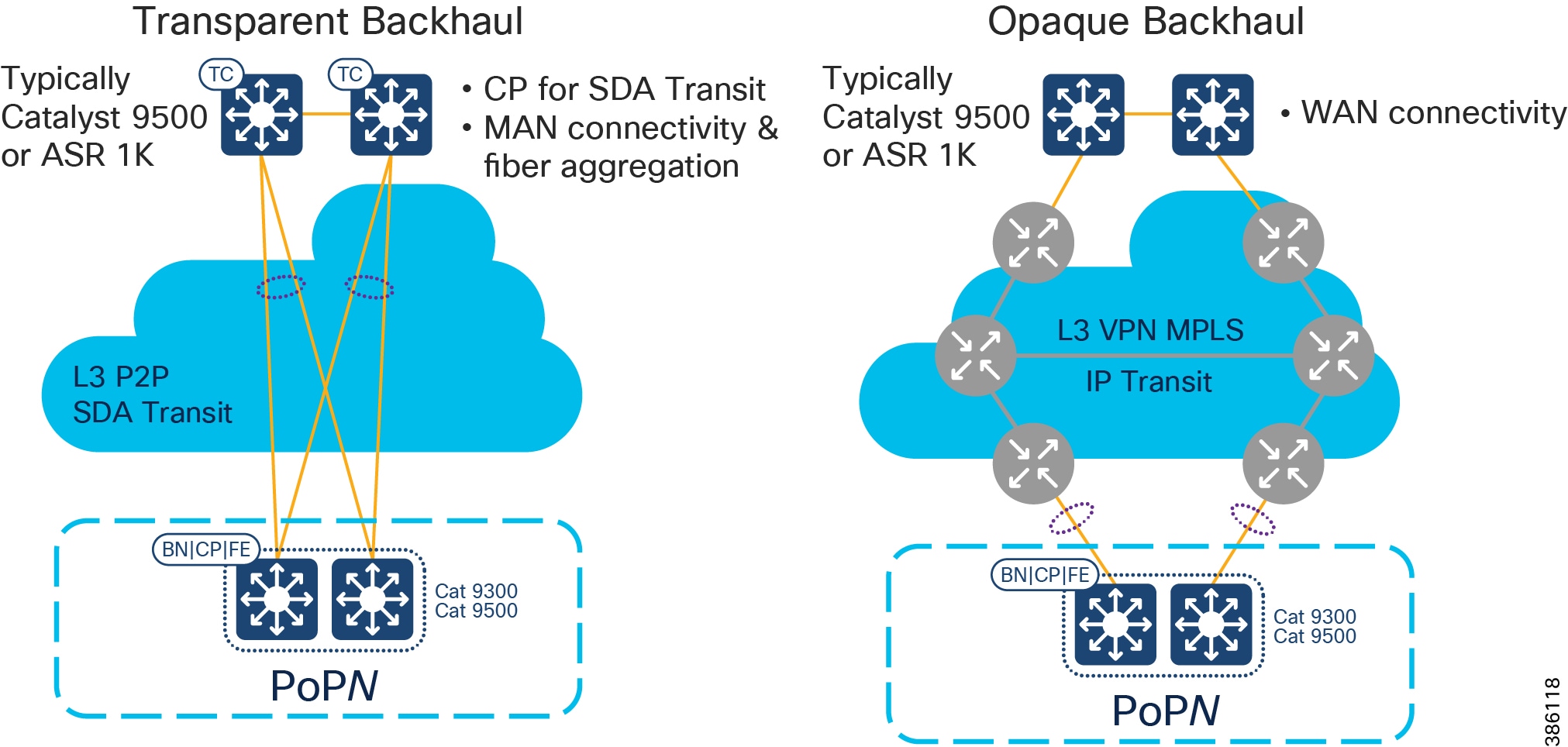

Backhaul for Points of Presence

To connect the PoPs back to the Centralized Infrastructure, a Metropolitan Area Network (MAN) is used.

Figure 8 Backhaul for Points of Presence

When deploying CCI, you may have access to dark fiber, in which case you can build your own MAN, which is a transparent backhaul entirely within the SD-Access fabric domain that uses SD-Access Transit. Alternatively, or additionally, an SP might be involved or you might have your own MPLS network; this is an opaque backhaul and the traffic must leave the SD-Access fabric domain on an IP Transit and come back into the SD-Access fabric domain at the far side.

Remote Point of Presence (RPoP)

- Qty ≤ 1000 of Remote Points-of-Presence (RPoPs); although in some deployments of CCI no RPoPs may be required.

- An RPoP is a Connected Grid Router (CGR) or Cisco Industrial Router (IR) and is typically connected to the Public Internet via a cellular connection (although any suitable connection can be used (such as xDSL or Ethernet), over which FlexVPN secure tunnels are established to the HE in the DMZ.

- The RPoP router may provide enough local LAN connectivity, or an additional Cisco Industrial Ethernet (IE) switch may be required.

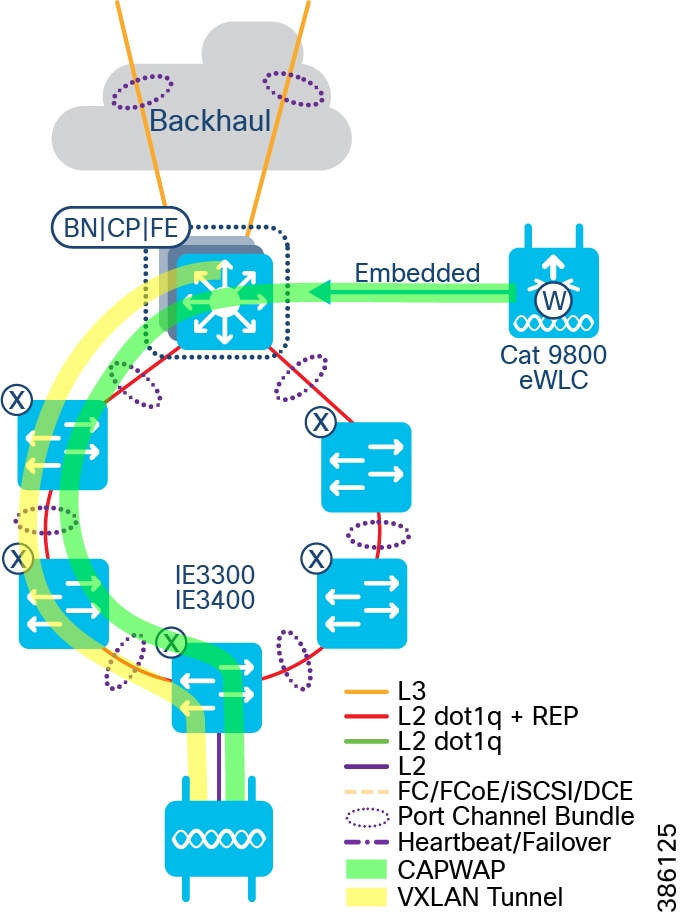

CCI's Cisco Software-Defined Access Fabric

The SD-Access Fabric Network Layers of CCI

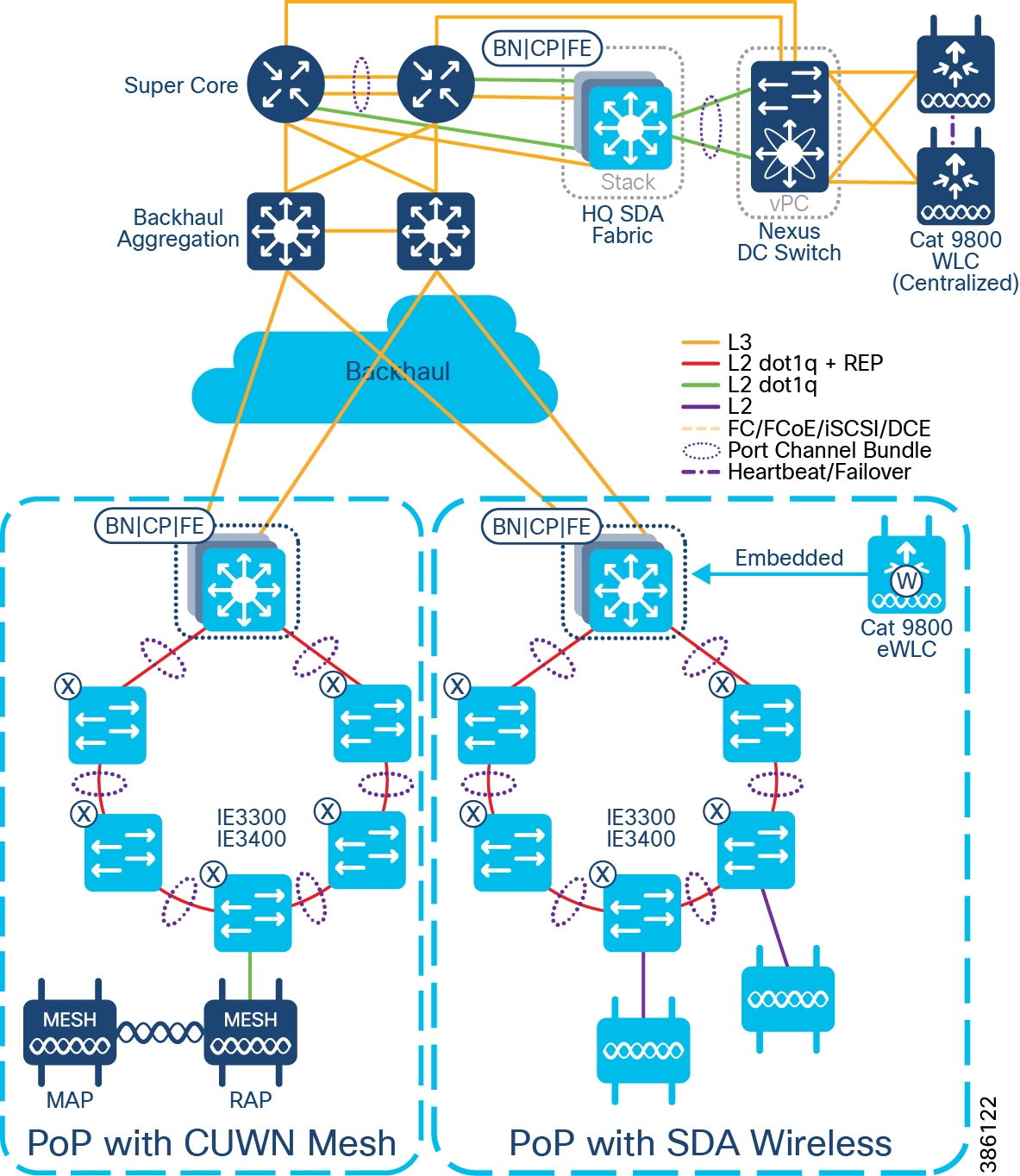

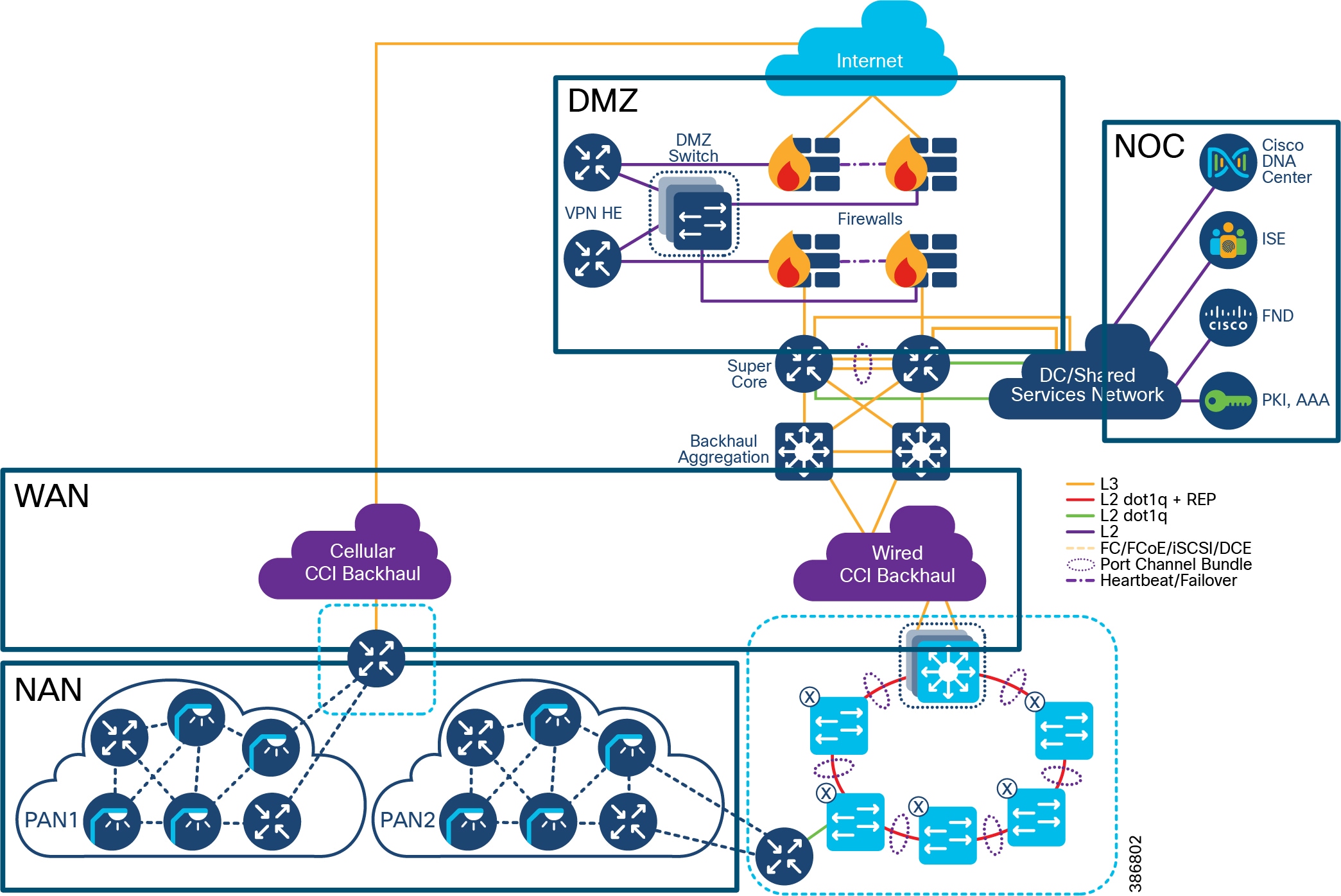

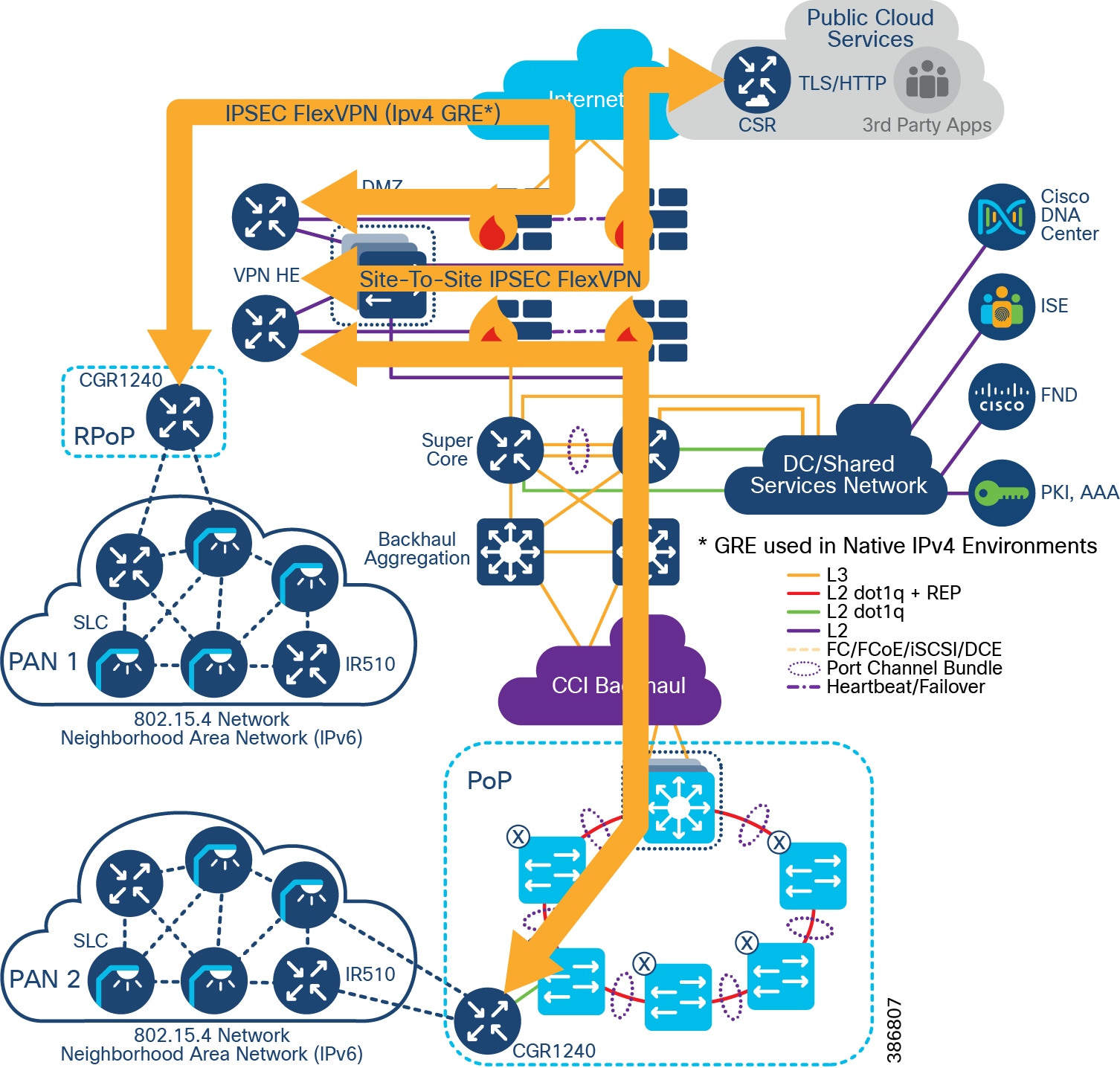

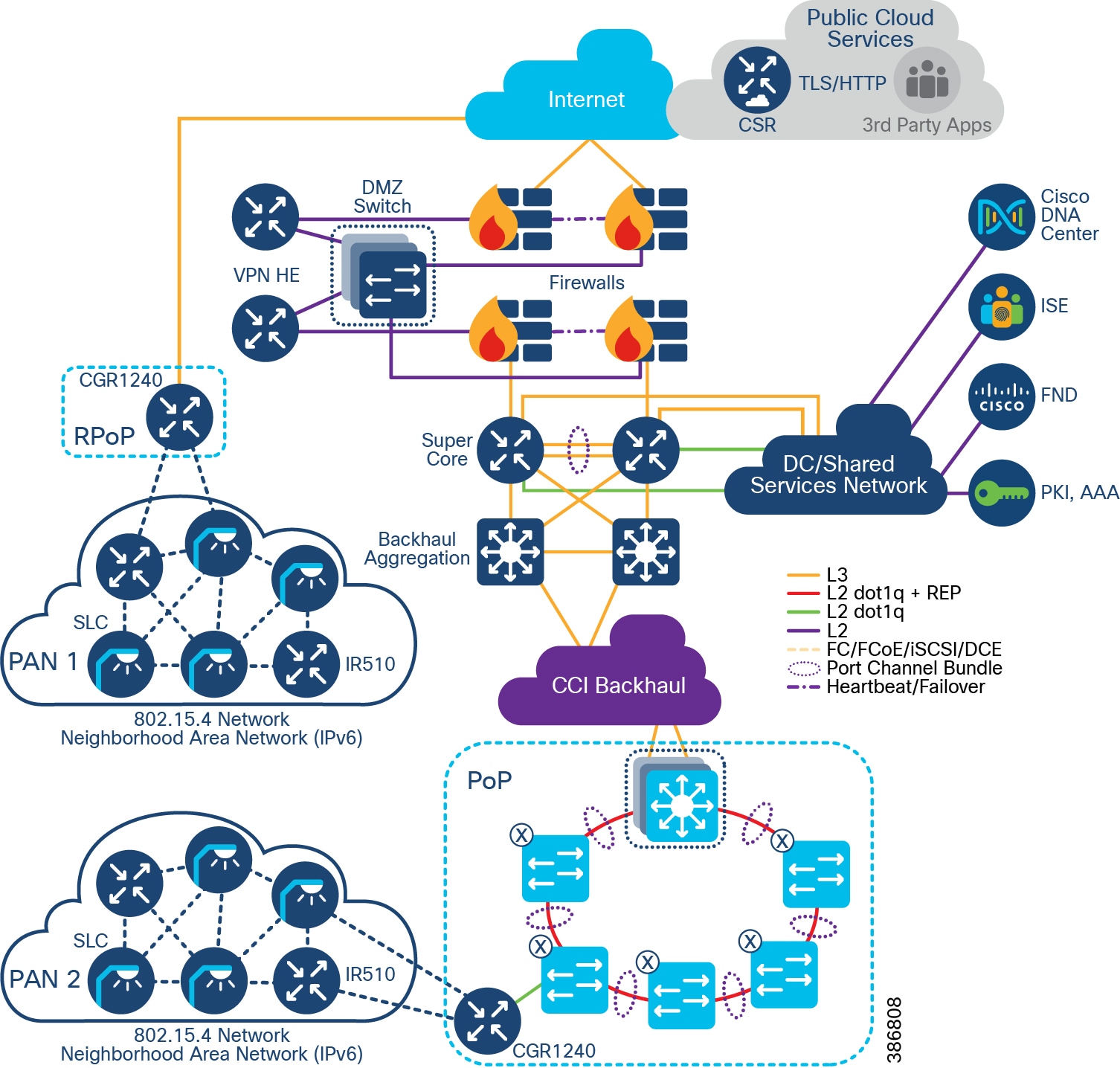

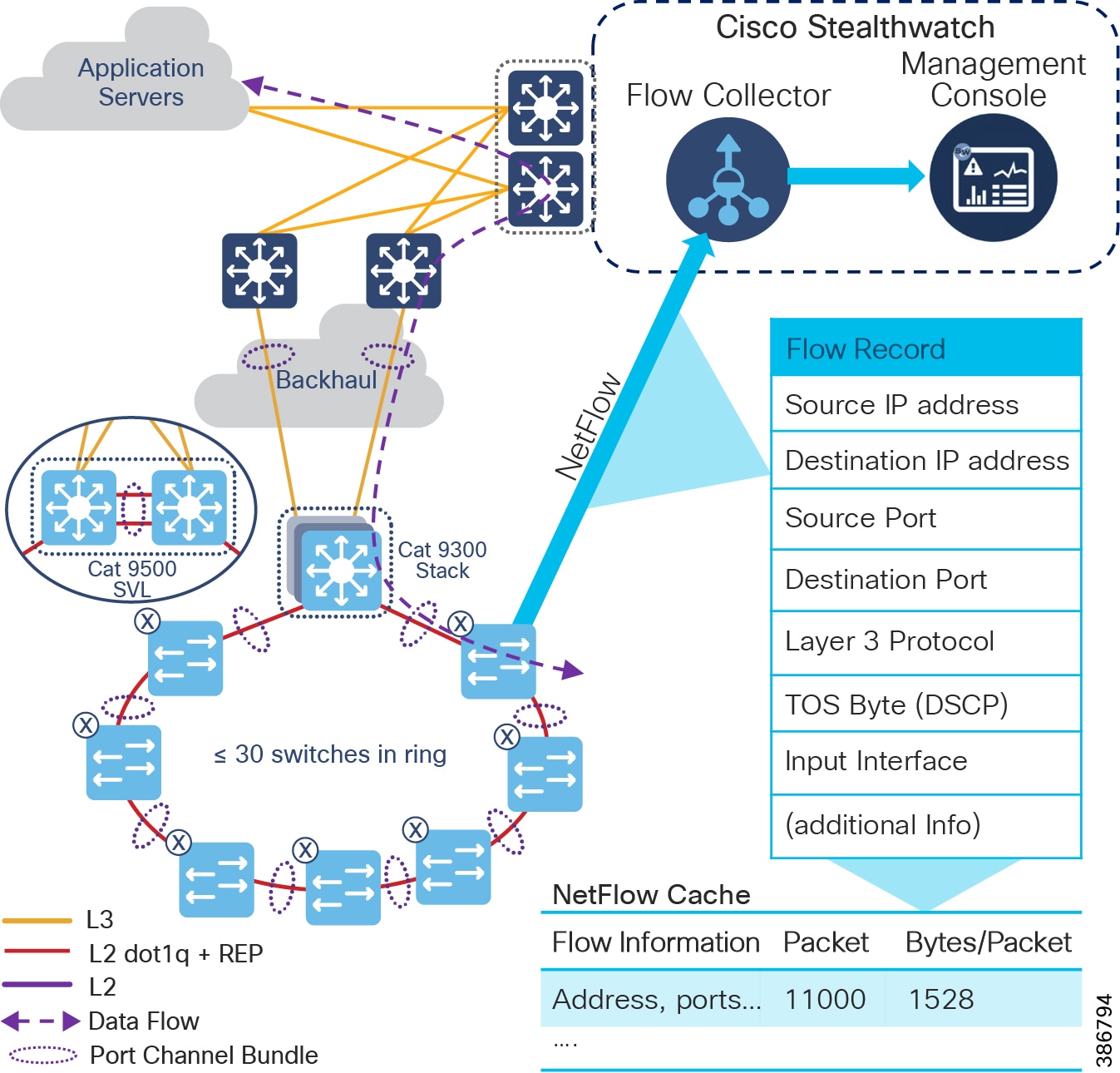

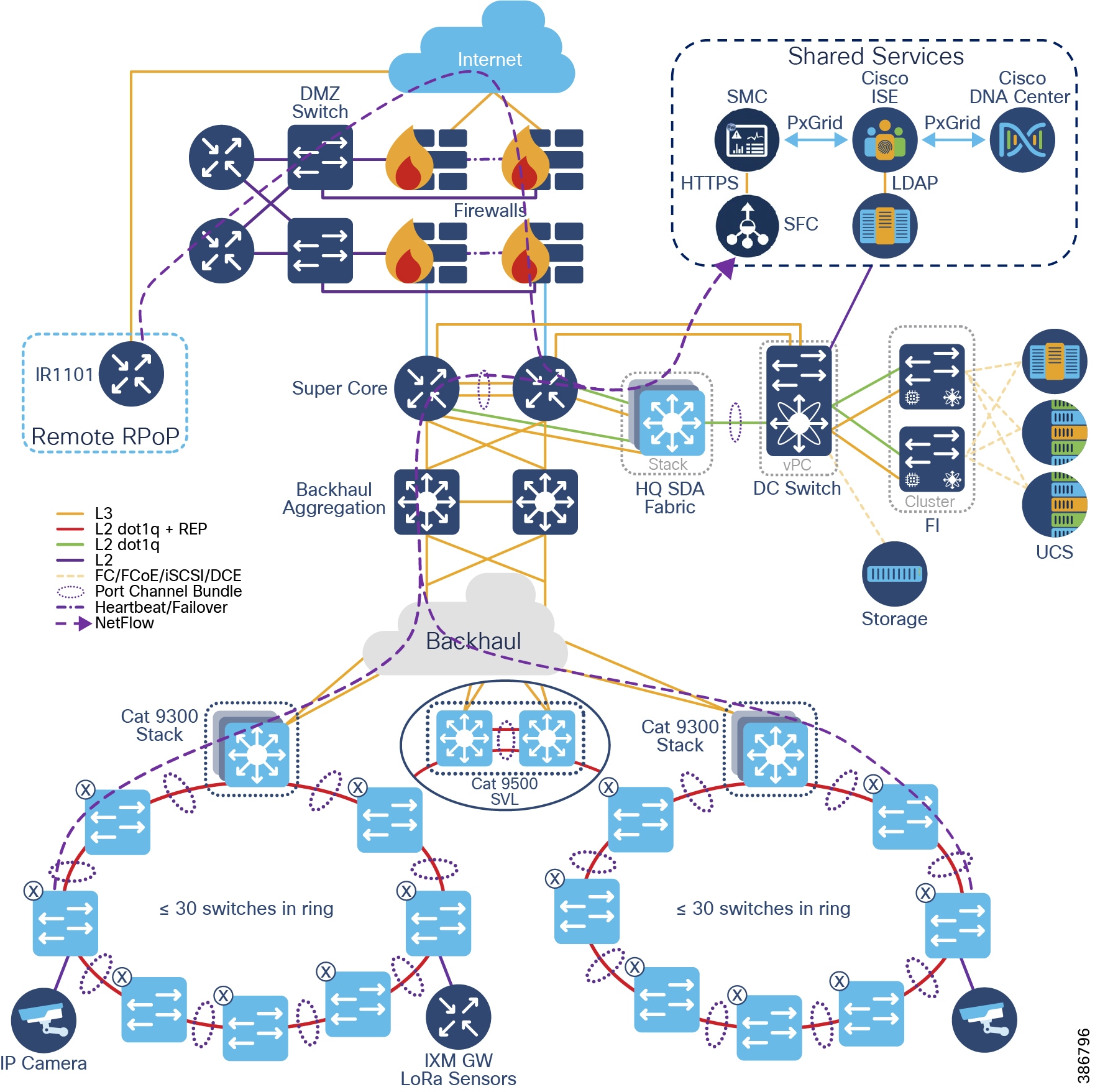

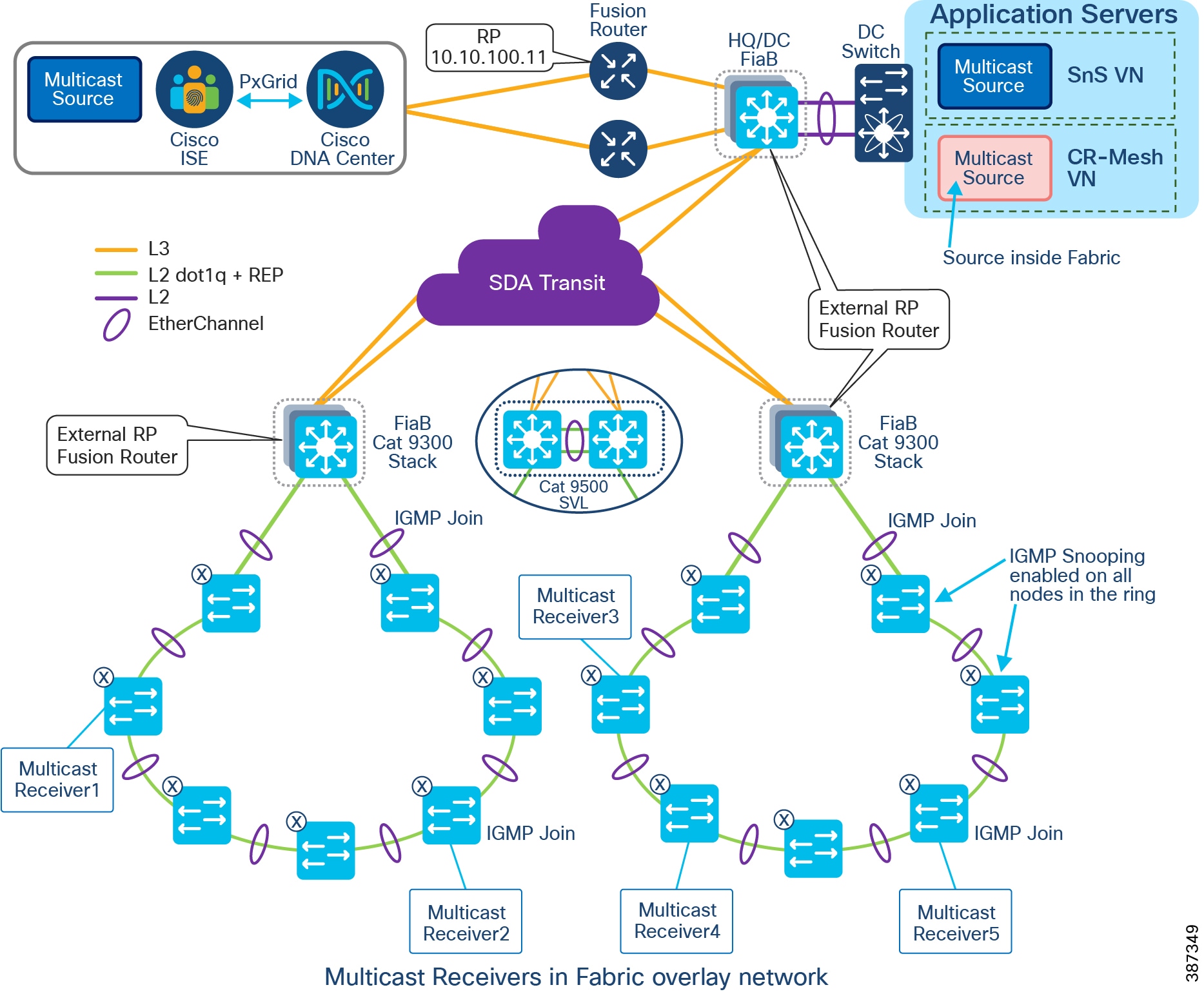

The CCI Network design based on the SD-Access framework follows the design principles and best practices associated with a hierarchical design by splitting the network into modular groups, as described in the Campus LAN and Wireless LAN Design Guide. The modular building blocks can be replicated, which makes it an optimal and scalable architecture. The network is a multi-tier architecture with access, distribution, core, data center, application server, DMZ, and Internet layers. The overall CCI network architecture with IP Transit is shown in CCI Network Diagram with IP Transit.

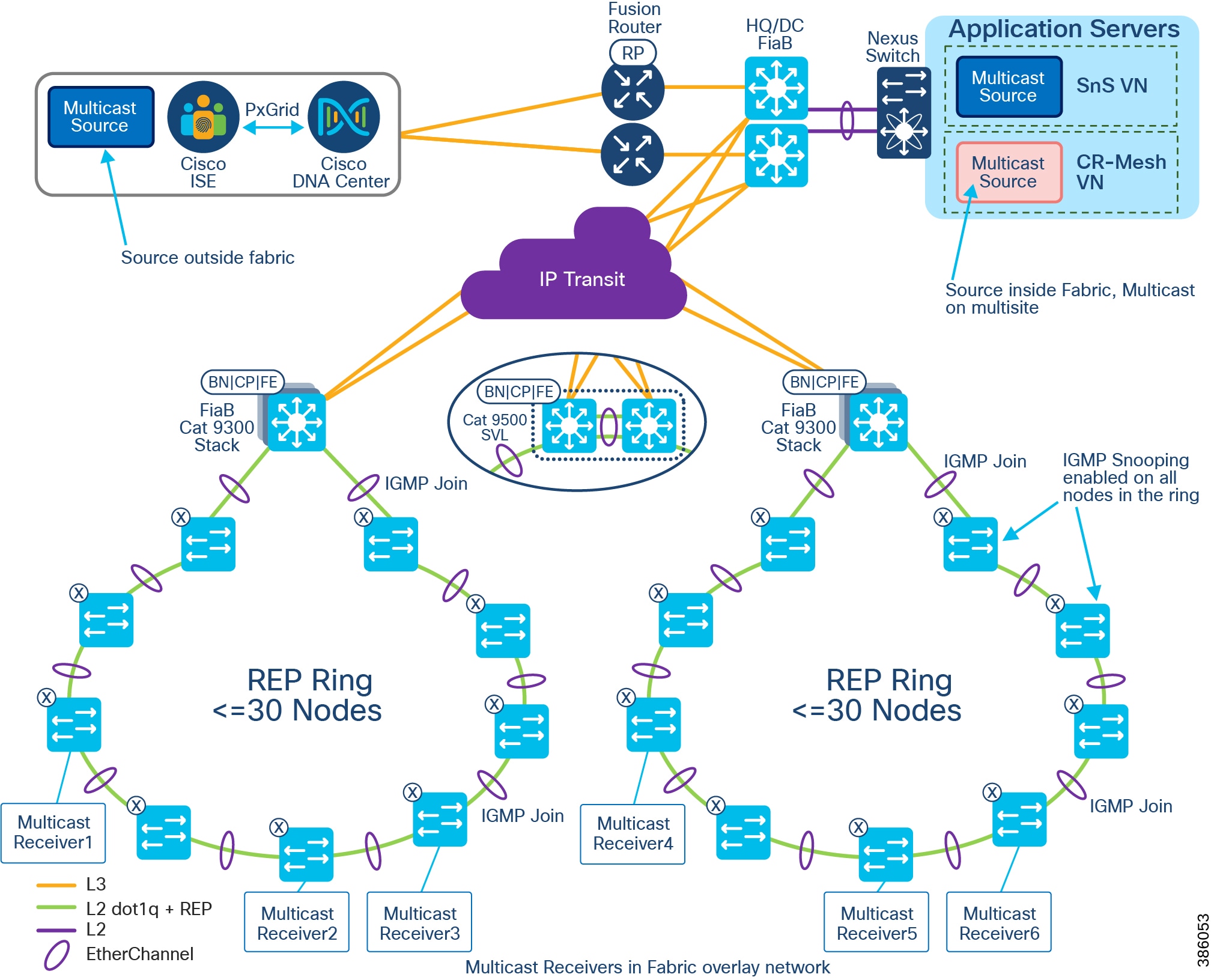

At the heart of the CCI network is the Cisco DNA Center with SD-Access, which is the single-pane-of-glass management and automation system. The CCI network spreads across a large geographical area, logically divided into several PoPs. Each PoP is designed as a fabric site.

Each fabric site (PoP) consists of the Fabric in a Box (FiaB), which is a consolidated fabric node. FiaB plays the role of a distribution layer by consolidating the access layer traffic and acting as the fabric site gateway to the core. The access layer consists of one or more REP rings of Cisco Industrial Ethernet Switches.

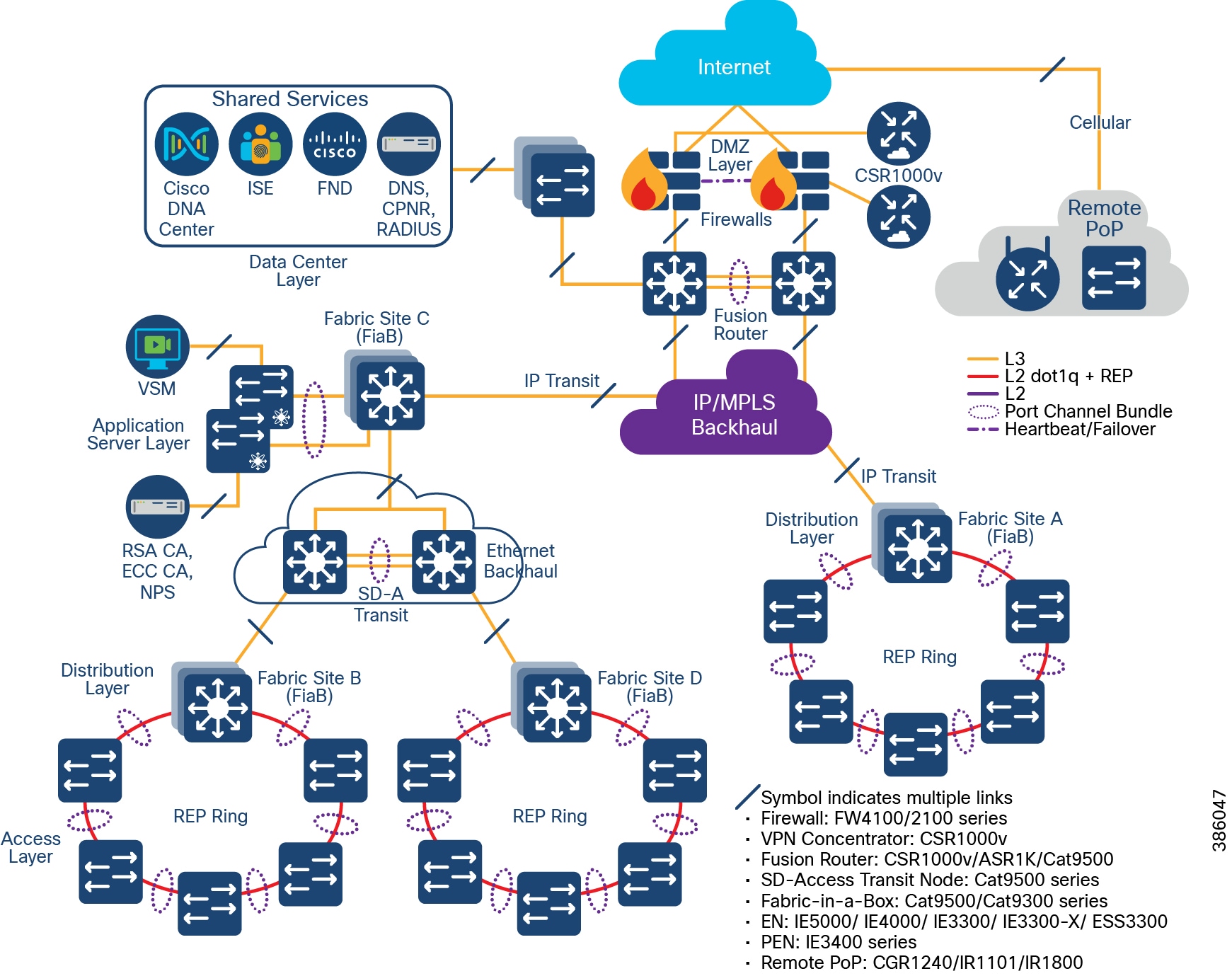

Multiple fabric sites across the city or along the roadway are interconnected by either SD-Access Transit or IP Transit to give a multi-site/distributed topology. A CCI Network deployment can have IP Transit or SD-Access Transit or both. The CCI Network Design with IP Transit illustrates a CCI Network design with only IP Transit, whereas The CCI Network Design having both SD-Access and IP Transit shows a CCI Network design with both SD-Access transit and IP-Transit.

A fusion router interconnects the fabric and all fabric sites with the shared services and Internet.

The application servers are hosted in an exclusive fabric site for end-to-end segmentation. The Internet breakout is centralized across all the fabric sites and passes through the firewall at the DMZ. The Cisco DNA Center needs to have Internet access for regular cloud updates. Important design considerations such as redundancy, load balancing, and fast convergence are to be ensured at every layer/critical node/critical link of the network. This will ensure uninterrupted service and optimal usage of the network resources.

Upcoming sections in this document elaborate each of these components. For more information, please refer to the Campus LAN and Wireless LAN Design Guide at the following URL:

The CCI Network Design with IP Transit

CCI Network Diagram with IP Transit shows the CCI Network design with IP Transit. Multiple network sites (PoP locations) are interconnected by an IP/MPLS backbone configured by SD-Access as IP Transit. IP Transit Network elaborates on IP Transit.

Figure 9 CCI Network Diagram with IP Transit

The CCI Network Design having both SD-Access and IP Transit

CCI Network Having Both SD-Access Transit and IP Transit shows the CCI Network design having both SD-Access and IP Transit. The network sites that have a campus like connectivity (high speed, low latency, and Jumbo MTU support) with Cisco DNA Center are interconnected with SD-Access Transit. The network sites that have a WAN like IP/MPLS backbone are interconnected with IP Transit. A core device called a Fusion Router interconnects shared services and Internet to all fabric sites in the network, regardless of their backhaul.

Figure 10 CCI Network Having Both SD-Access Transit and IP Transit

Underlay Network

In order to set up an SD-Access-managed network, all managed devices need to be connected with a routed underlay network, thus being IP reachable from the Cisco DNA Center. This underlay network can be configured manually or with the help of the Cisco DNA Center LAN Automation feature. Note that Cisco DNA Center LAN automation has a maximum limit of two hops from the configured seed devices and does not support Cisco Industrial Ethernet (IE) Switches. Because the CCI network has Cisco Industrial Ethernet (IE) switches and most CCI network deployments will have more than two hops, manual underlay configuration is recommended for CCI.

The SD-Access design recommendation is that the underlay should preferably be an IS-IS routed network. While other routing protocols can be used, IS-IS provides unique operational advantages such as neighbor establishment without IP protocol dependencies, peering capability using loopback addresses, and agnostic treatment of IPv4, IPv6, and non-IP traffic. It also deploys both a unicast and multicast routing configuration in the underlay, aiding traffic delivery efficiency for services built on top. However, other routing protocols such as Enhanced Interior Gateway Routing Protocol (EIGRP) and Open Shortest Path First (OSPF) can also be deployed, but these may require additional configuration.

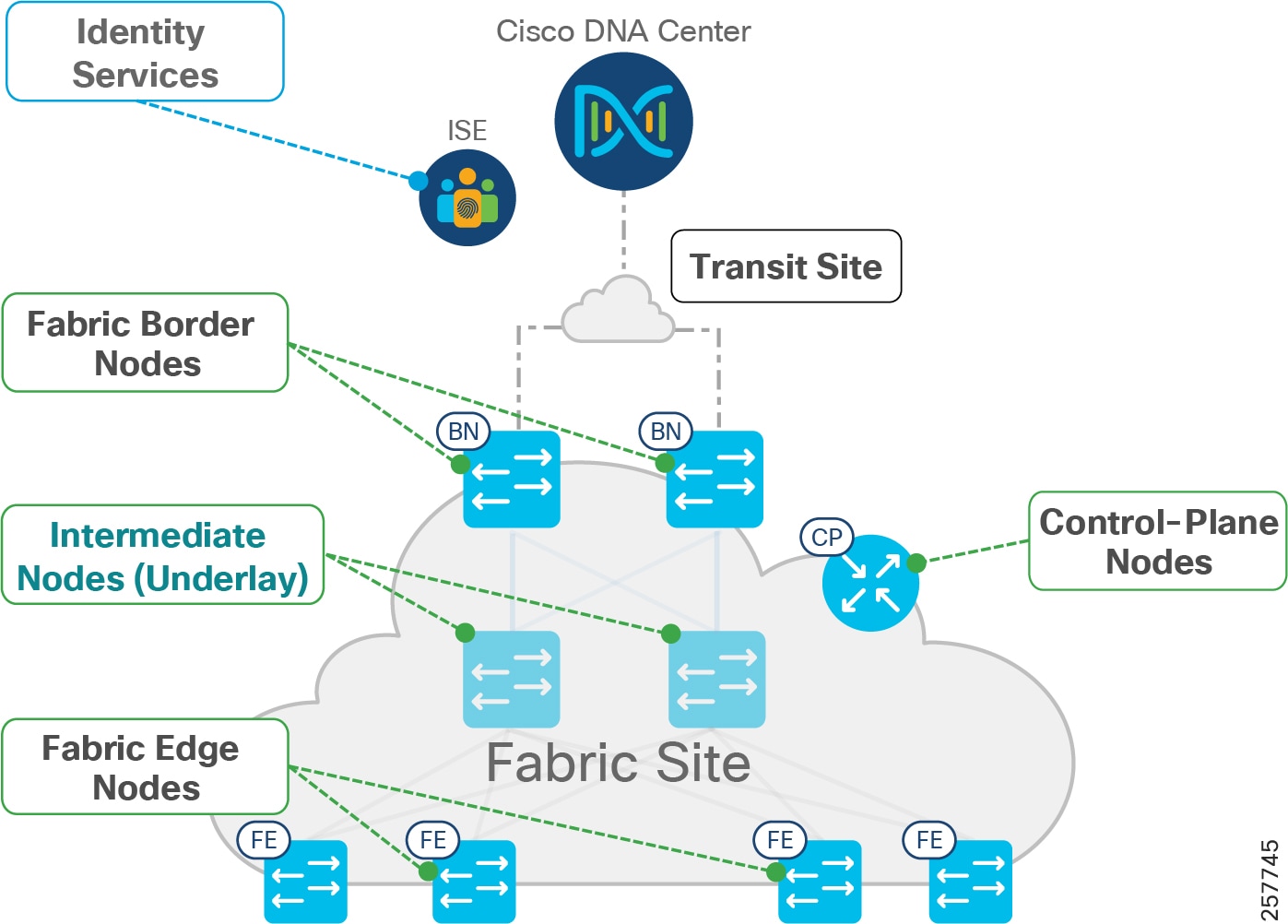

Underlay connectivity spans across the fabrics, covering Fabric Border Node (BN), Fabric Control Plane (CP) node, Intermediate nodes, and Fabric Edges (FE). Underlay also connects the Cisco DNA Center, Cisco ISE, and the fusion router. However, all endpoint subnets are part of the overlay network.

Note: The underlay network for the SD Access fabric requires increased MTU to accommodate additional overlay fabric encapsulation header bytes. Hence, you must increase the default MTU to9100bytesto ensure that Ethernet jumbo frames can be transported without fragmentation inside the fabric.

Refer to the SD-Access Design and Deployment Guides for further underlay design and deployment details.

Overlay Network

An SD-Access fabric creates virtualized networks (VNs) on top of the physical underlay network, called overlay. These VNs can span the entire fabric and remain completely isolated from each other. The entire overlay traffic, including data plane and control plane, are contained fully within each VN. The boundaries for the fabric are the BN and FE nodes. BN is the ingress and egress point to the fabric, FE is the entry point for wired clients, and Fabric Wi-Fi AP is the entry point for Wi-Fi wireless clients.

The VNs are realized by virtual routing and forwarding (VRF) instances and each VN appears as a separate instance for connectivity to the external network. SD-Access overlay can be either Layer 2 overlay or Layer 3. For the CCI network, Layer 3 overlay is chosen as the default option. The Layer 3 overlay allows multiple IP networks as part of each VN. Overlapping IP address space across different Layer 3 overlays is not recommended in the CCI network for administrative convenience and to avoid the need for network address translation (NAT) for shared services that span across VNs.

Within the SD-Access fabric, the user and control data are encapsulated and transported using the overlay network. The encapsulation header carries the virtual network and SGT information, which is used for traffic segmentation within the overlay network.

Segmentation allows granular data plane isolation between groups of endpoints within a VN and allows simple-to-manage group-based policies for selective access. The SGTs also aid scalable deployment of policy avoiding cumbersome IP-based policies.

VNs provide macro-segmentation by isolation of both data and control plane, whereas segmentation with SGT provides micro-segmentation by selective separation of groups within a VN.

By default, no communication between VNs is possible. If communication is needed across VNs, a fusion router outside the fabric can be employed with appropriate “route-leaking” configuration for selective inter-VN traffic communication; however, communication within a VN (same or different SGT) is routed within the fabric.

Following the SD-Access design recommendations, minimizing the number of IP subnets is advised to simplify the Dynamic Host Configuration Protocol (DHCP) management. The IP subnets can be stretched across a fabric site without any flooding concerns, unlike large Layer 2 networks. IP subnets should be sized according to the services that they support across the fabric. However, based on the deployment needs of enabling optional broadcast feature, the subnet size can be limited. In this context, a “service” may be a use case: for example, how many IPv4 Closed Circuit Television (CCTV) cameras am I going to deploy across my entire city (now and into the future), and how many back-end servers in my DC do I need to support them?

Fabric Data Plane and Control Plane

This section provides a detailed explanation of how the fabric data and control plane work. All of this is automated by SDA and largely hidden from the administrator; therefore, this section can be skipped unless the reader wishes to go very deep.

Within the SD-Access fabric, SD-Access configures the overlay with fabric data plane by using Virtual Extensible LAN (VXLAN). RFC 7348 defines the use of VXLAN as a way to overlay a Layer 2 network on top of a Layer 3 network. VXLAN encapsulates and transports Layer 2 frames across the underlay using UDP/IP over Layer 3 overlay. Each overlay network is called a VXLAN segment and is identified by a VXLAN Network Identifier (VNI). The VXLAN header carries VNI and SGT needed for macro- and micro-segmentation. Each VN maps to a VNI, which, in turn, maps to a VRF in the Layer 3 overlay.

Along with VXLAN data plane, SD-Access uses Location/IP Separation Protocol (LISP) as control plane. From a data plane perspective, each VNI maps to a LISP Instance ID. LISP helps to resolve endpoint-to-location mapping. LISP does perform routing based on End Point Identifier (EID) and Routing Locator (RLOC) IP addresses. An EID could be either an endpoint IP address or MAC. An RLOC is part of underlay routing domain, which is typically the Loopback address of the FE node to which the EID is attached. The RLOC represents the physical location of the endpoint. The combination of EID and RLOC gives device ID and location; thus, the device can be reached even if it moves to a different location with no IP change. The RLOC interface is the only routable address that is required to establish connectivity between endpoints of the same or different subnets.

Within the SD-Access fabric, LISP provides control plane forwarding information; therefore, no other routing table is needed. To communicate external to the SD-Access fabric, at the border each VN maps to a VRF instance. Outside the fabric path, isolation techniques such as VRF-Lite or MPLS may be used to maintain the isolation between VRFs. EIDs can be redistributed into a routing protocol such as Border Gateway Protocol (BGP), EIGRP, or OSPF for use in extending the virtual networks.

To provide forwarding information, LISP map server, located on the CP node, maintains EID (host IP/MAC) to RLOC mapping in its map-server. The local node queries the control plane to fetch the destination EID route.

Fabric Border

Fabric Roles and Terminology depicts different fabric roles and terminology in Cisco SD-Access design. Fabric Border (BN) is the entry and exit gateway between the SD-Access fabric site and networks external to the fabric site. Depending on the types of outside networks it connects to, BN nodes can be configured in three different roles: Internal Border (IB), External Border (EB), and Anywhere Border (AB). The IB connects the fabric site to known areas internal to the organization such as the data center (DC) and application services. The EB connects a fabric site to a transit as an exit path for the fabric site to outside world, including other fabric sites and the Internet. AB, however, connects the fabric site to both internal and external locations of the organization. The aggregation point for the exiting traffic from the fabric should be planned as the border; traffic exiting the border and doubling back to the actual aggregation point results in sub-optimal routing. In CCI, each PoP site border is configured with EB role connecting to a transit site and HQ/DC fabric site border is configured with AB role to provide connectivity to internal and external locations.

Figure 11 Fabric Roles and Terminology

In general, the fabric BN is responsible for network virtualization interworking and SGT propagation from the fabric to the rest of the network. The specific functionality of the BN includes:

- Gateway for the fabric to reach the world outside the fabric

- Advertising EID subnets of the fabric to networks outside the fabric for them to communicate with the hosts of the fabric, via BGP

- Mapping LISP instances to VRF instances to preserve the virtualization

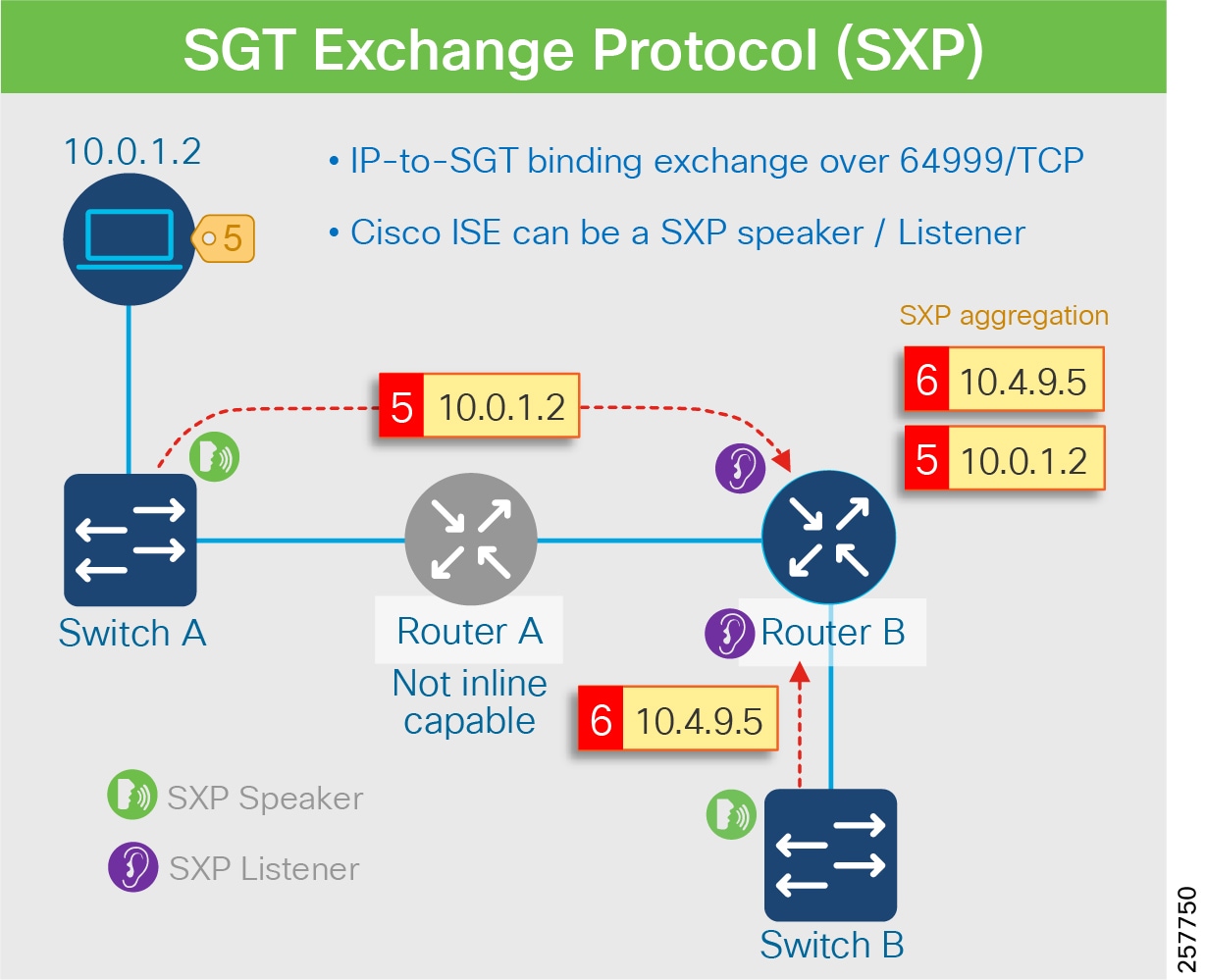

- Propagating SGT to the external network either by transporting tags using SGT Exchange Protocol (SXP) to Cisco TrustSec-aware devices or using inline tagging in the packet

The EID prefixes appear only on the routing tables at the border; throughout the rest of the fabric, the EID information is accessed using the fabric control plane (CP).

Fabric Edge

Fabric edge nodes (FEs) are access layer devices that provide Layer 3 network connectivity to end-hosts or clients addressed as endpoints. The fundamental functions of FE nodes include endpoint registration, mapping endpoints to virtual networks, and segmentation and application/QoS policy enforcement.

Endpoints are mapped to VN by assigning the endpoints to a VLAN associated to a LISP instance. This mapping of endpoints to VLANs can be done statically (in the Cisco DNA Center user interface) or dynamically (using 802.1X and MAB). Along with the VLAN, an SGT is also assigned, which is used to provide segmentation and policy enforcement at the FE node.

Once a new endpoint is detected by the FE node, it is added to a local host tracking database EID-Table. The FE node also issues a map-registration message to the LISP map-server on the control plane node to populate the Host Tracking Database (HTDB).

On receipt of a packet at the FE node, a search is made in its local host tracking database (LISP map-cache) to get the RLOC associated with the destination EID. In case of a miss, it queries the map-server on the control plane node to get the RLOC. In case of a failure to resolve the destination RLOC, the packet is sent to the default fabric border. The border forwards the traffic using its global routing table.

If the RLOC is obtained, the FE node uses the RLOC associated with the destination IP address to encapsulate the traffic with VXLAN headers. Similarly, VXLAN traffic received at a destination RLOC is de-encapsulated by the destination FE.

If traffic is received at the FE node for an endpoint not locally connected, a LISP solicit-map-request is sent to the sending FE node to trigger a new map request; this addresses the case where the endpoint may be present on a different FE switch.

Fabric-in-a-Box (FiaB)

For smaller fabric sites, such as a CCI PoP, all three fabric functions (Border, Control, and Edge) can be hosted in the same physical network device; this is known as “Fabric in a Box” (FiaB).

In the current release of CCI, the FiaB model is recommended based on the size of the network and size of the traffic to be supported from a fabric site. For size calculations, see CCI Network Access Layer Dimensioning.

Extended Nodes and Policy Extended Nodes

Extended Node

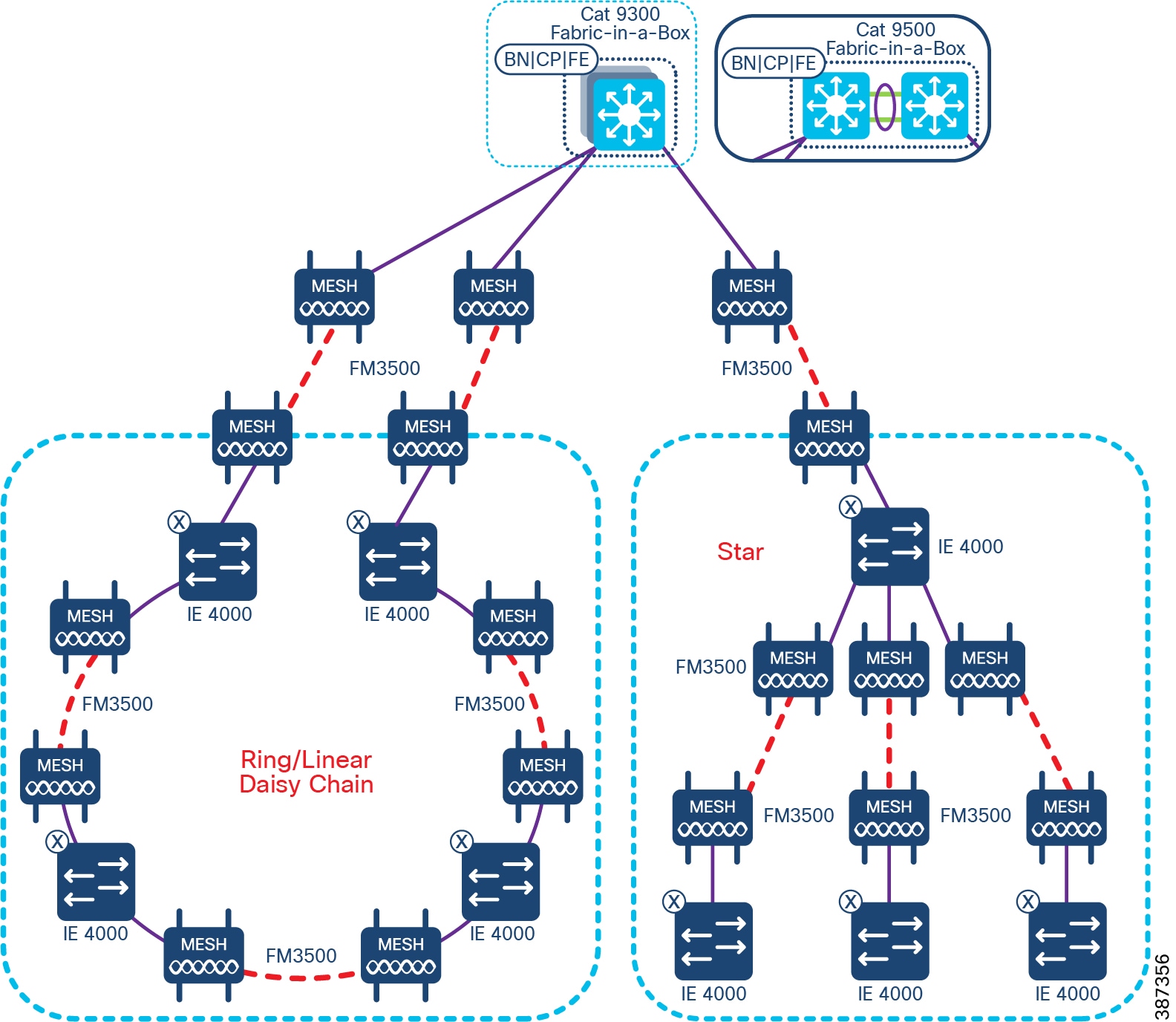

The SD-Access fabric can be extended with the help of extended nodes. Extended nodes are access layer Ruggedized Ethernet switches that are connected directly to the Fabric Edge/FiaB. The list of DNA Center 2.2.3-supported extended node devices used in CCI network include the Cisco IE4000 series, the Cisco IE5000 series switches the Cisco IE3300 series switches and the Cisco ESS3300 switches.

Cisco IE3400 series switches can be configured as Policy Extended Node (PEN) being a superset of Extended Node. Refer to the “ Policy Extended Node ” section below for more details on IE3400 switches role in CCI PoP. These Ruggedized Ethernet switches are connected to the Fabric Edge or FiaB in a daisy-chained linear, star, and ring topologies for Ethernet access network high availability. Refer to the section “Ethernet Access Network” in this document, for more details on Ethernet daisy-chained, linear, star and ring topologies design in CCI.

Extended nodes support VN based macro-segmentation in the Ethernet access ring. These devices do not natively support fabric technology. Therefore, policy enforcement for the traffic generated from the extended node devices is done by SD-Access at the Fabric Edge.

The daisy-chained ENs do all of the endpoint onboarding connected to its ports, but policy is applied only to traffic passing through the FE/FiaB nodes. The extended nodes support 802.1X or MAB based Closed Authentication for Host Onboarding in Cisco DNA Center Fabric provisioning.

The rationale for recommending ring topology with REP for Cisco Industrial Ethernet (IE) switches to provide Ethernet access is discussed in “Ethernet Access Network”. Both ends of REP ring are terminated at FE/FiaB, such that all Cisco Industrial Ethernet (IE) switches in the ring and FiaB are part of closed REP segment.

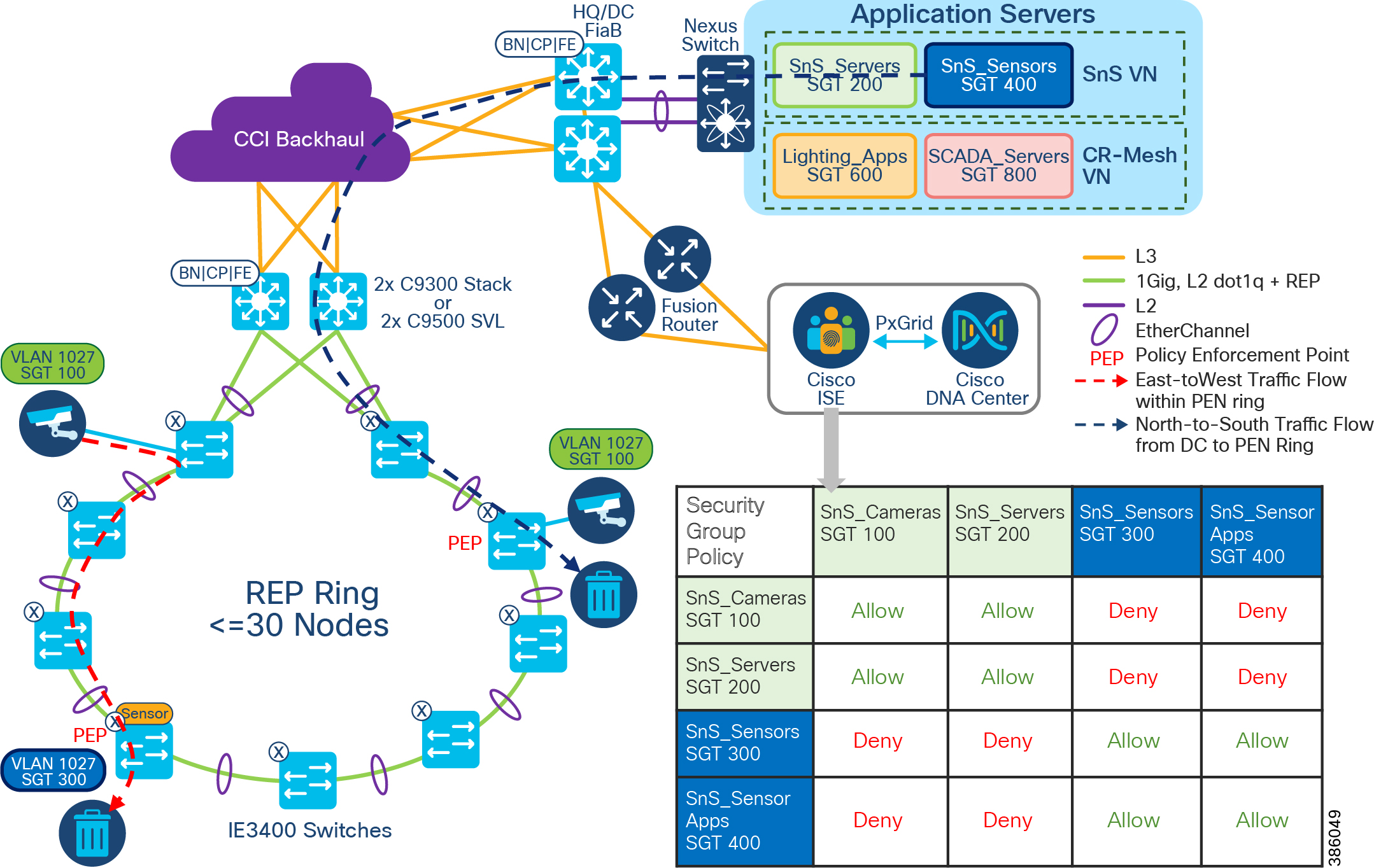

Policy Extended Node

Cisco DNA Center 2.2.3 also supports “Policy Extended Node” which is a construct at Ethernet access ring capable of doing group based micro-segmentation for improved Ethernet access ring security. Cisco IE3400 series switches support this functionality with Network Advantage and DNA Advantage licenses. IE3400 switches must have Network Advantage and DNA advantage licenses to operate as Policy Extended Node. The policy extended nodes are capable of doing Scalable Group Tag (SGT) based inline tagging and enforcing SGACL based security policies for device to device communication within a VN or domain.

Cisco TrustSec (CTS) architecture consists of authentication, authorization and services modules like guest access, device profiling etc., TrustSec is an umbrella term and it covers anything to do with endpoint’s identity, in terms of IEEE 802.1X (dot1x), profiling technologies, guest services, Scalable Group based Access (SGA) and MACSec (802.1AE). CTS simplifies the provisioning and management of secure access to network services and applications. Compared to access control mechanisms that are based on network topology, Cisco TrustSec defines policies using logical policy groupings, so secure access is consistently maintained even as resources are moved in mobile and virtualized networks.

CTS classification is done by Cisco ISE and policy enforcement is done on Cisco switching, routing, wireless LAN, and firewall products. By classifying traffic based on the contextual identity of the endpoint versus its IP address, Cisco TrustSec enables more flexible access controls for dynamic networking environments. At the point of network access, a Cisco TrustSec policy group called a Security Group Tag (SGT) is assigned to an endpoint, typically based on that endpoint’s user, device, and location attributes. The SGT denotes the endpoint’s access entitlements, and all traffic from the endpoint will carry the SGT information.

The PEN supports CTS and 802.1X or MAB based Closed Authentication for host onboarding along with dynamic VLAN and SGT attributes assignment for endpoints, in Cisco DNA Center Fabric provisioning. It requires the policy extended nodes to communicate with ISE to authenticate and authorize the endpoints for downloading the right VLANs and SGT attributes.

A feature comparison of Extended Node and Policy Extended Node is shown in Table 1.

Endpoints

The clients or user devices that connect to the Fabric Edge Node are called Endpoints; supported downstream switches are Extended Nodes or Policy Extended Nodes. In the case of CCI Network, wired and wireless clients connect directly or indirectly via APs or gateways to access switches that are either ENs or PENs. For uniformity in this document, we refer to all of the wired and wireless clients as “Endpoints.”

Transit Network

Fabric domain is a single fabric network entity consisting of one or more isolated and independent fabric sites. Multiple fabric sites can be connected with a transit network. Depending on the characteristics of the intermediate network interconnecting the fabric sites and Cisco DNA Center, the transit network can either be SD-Access Transit or IP Transit. Typically, an IP-based Transit connects a fabric site to an external network whereas SD-Access Transit connects one or more native fabric sites.

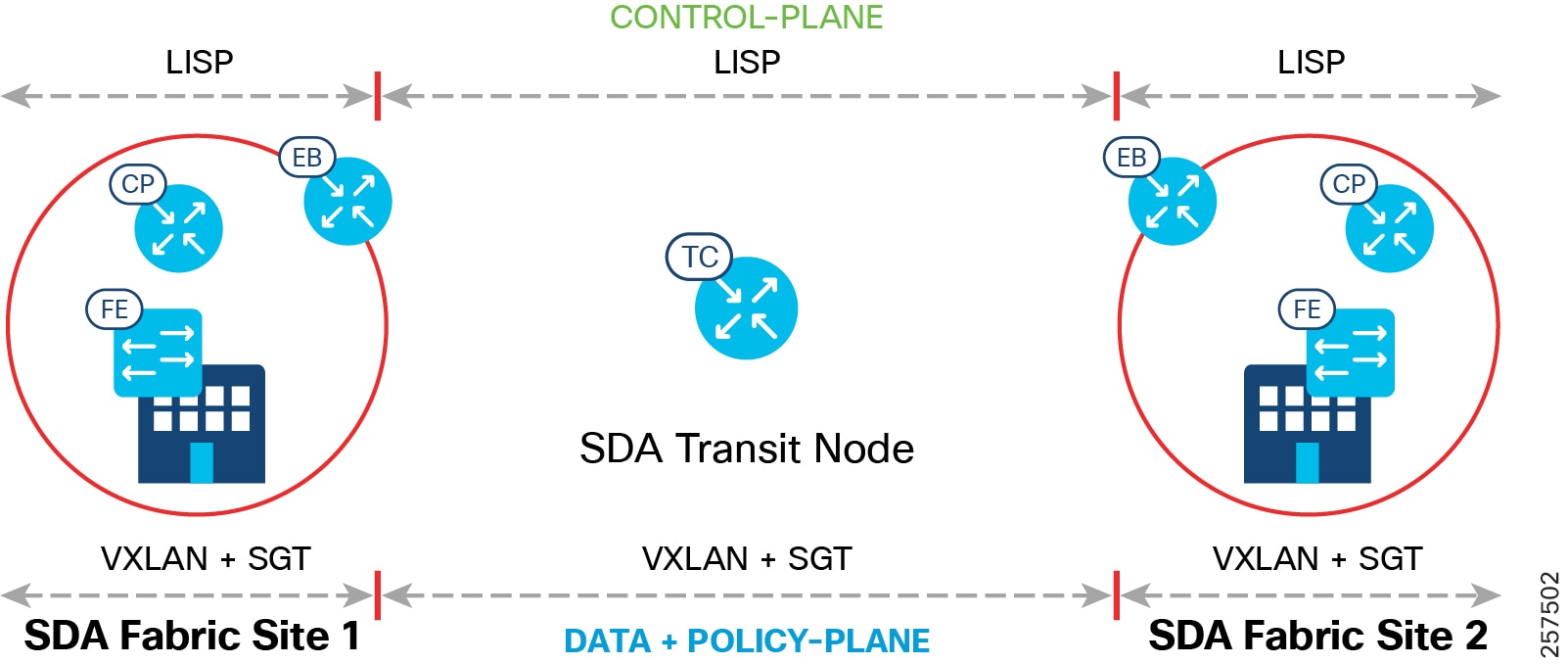

SD-Access Transit Network

The key consideration for using SD-Access transit is that the network between the fabric sites and the Cisco DNA Center should be created with campus-like connectivity. The connections should be high-bandwidth and low latency (less than 10ms) and should accommodate jumbo MTUs (9100 bytes). These are best suited when dark fiber is available between fabric sites. The larger MTU size is needed to accommodate an increase in packet size due to VXLAN encapsulation, therefore, avoiding fragmentation and reassembly.

An SD-Access Transit consists of a domain-wide control plane node dedicated to the transit functionality, connecting to a network that has connectivity to the native SD-Access (LISP, VXLAN, and CTS) fabric sites that are to be interconnected as part of the larger fabric domain. Aggregate/summary route information is populated by each of the borders connected to the SD-Access Transit control plane node using LISP.

SD-Access Transit carries SGT and VN information, with native SD-Access VXLAN encapsulation, inherently enabling policy and segmentation between fabric sites; in that way, segmentation is maintained across the fabric sites in a seamless manner.

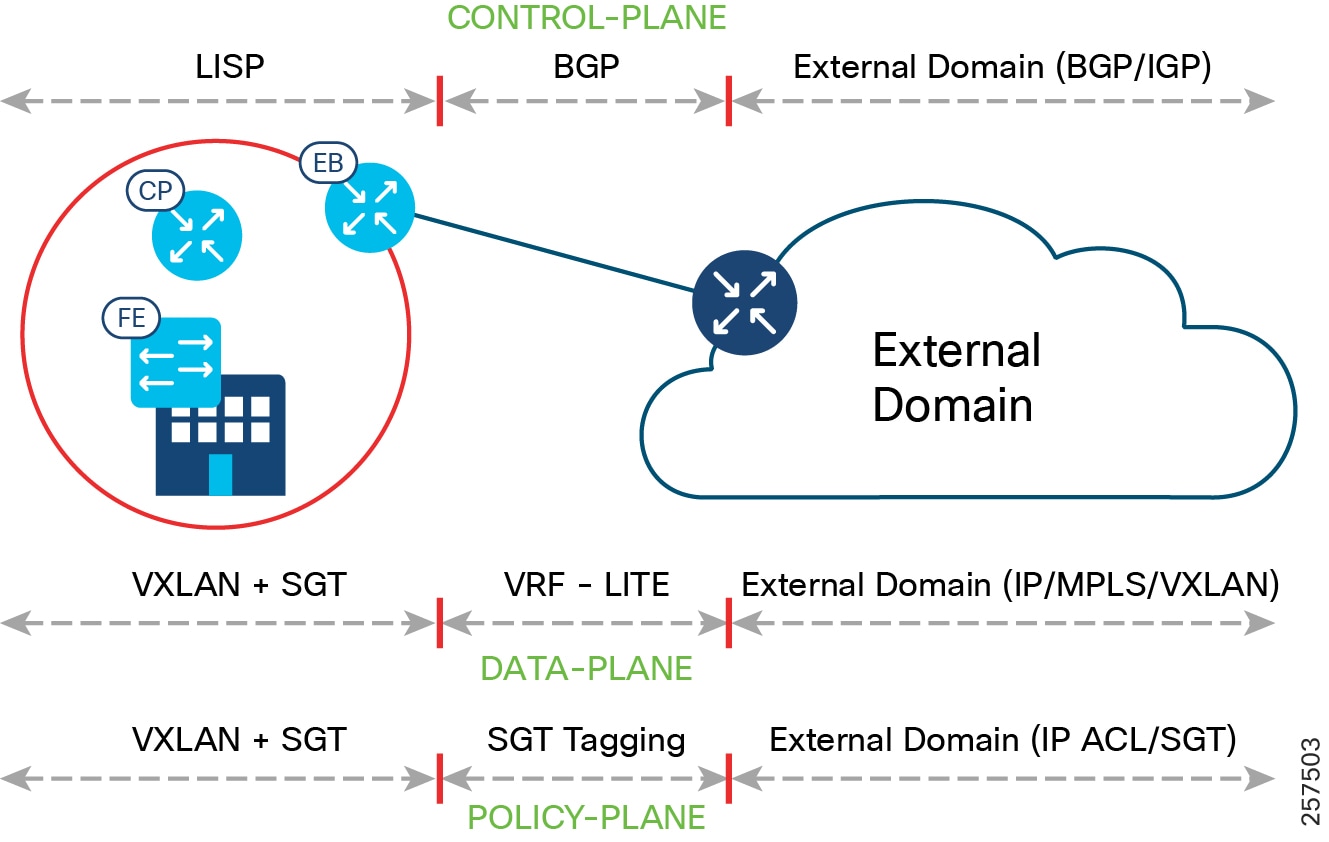

End-to-end configuration of SD-Access Transit is automated by the Cisco DNA Center. The control, data, and policy plane mapping across the SD-Access Transit is shown in SD-Access Transit Data, Control, and Policy Plane Mapping. Two SD-Access Transit Control (TC) plane nodes are required, but these are for control plane signaling only and do not have to be in the data plane path.

Note: SD-Access Transit Control Plane functionality can be co-located in the WAN aggregation or border routers or it can also be deployed in a separate pair of switches or routers in CCI deployments.

Figure 12 SD-Access Transit Data, Control, and Policy Plane Mapping

IP Transit Network

IP Transit is the choice when the fabric sites are connected using an IP network that doesn't comply to the desired network specification of SD-Access Transit, such as latency and MTU. This is often the choice when the fabric sites are connected via public WAN circuits.

Unlike SD-Access Transit, the configurations of intermediate nodes connecting fabric sites in IP-Transit are manual and not automated by Cisco DNA Center.

IP Transits offer IP connectivity without native SD-Access encapsulation and functionality, potentially requiring additional VRF and SGT mapping for stitching together the macro- and micro-segmentation needs between sites. Traffic between sites will use the existing control and data plane of the IP Transit area. Thus, the ability to extend segmentation across IP transit depends on the external network.

Unlike SD-Access transit, no dedicated node does IP Transit functionality. Instead, the traditional IP handover functionality is performed by the fabric border node. Border nodes hand off the traffic to the directly connected external domain (BGP with VRF-LITE or BGP with MPLS VRF). BGP is the supported routing protocol between the border and external network. The router connecting to the border at the HQ site is also configured for fusion router functionality with selective route leaking. Fusion router is explained in the next section below. The list of VNs that need to communicate with the external network are selected at the border IP Transit interface.

The list of VNs that need to communicate with the external world are selected at the border IP Transit interface.

As discussed previously, IP Transit is outside of the fabric domain, therefore SXP is used to re-apply the correct markings (VXLAN and SGT) that are stripped off during the transit.

The control, data, and policy plane mapping from the SD-Access fabric to the external domain is shown in IP Transit Data, Control, and Policy Plane Mapping. Multiple fabric sites can interconnect via external network using IP Transit.

Figure 13 IP Transit Data, Control, and Policy Plane Mapping

Fusion Router

Most of the networks will need to connect to the Internet and shared services such as DHCP, DNS, and the Cisco DNA Center. Some networks may also have a need for restricted inter-VN communication. Inter-VN communication is not allowed and not possible within a Fabric Network.

To accommodate the above requirements at the border of the fabric, a device called a fusion router (FR) or fusion firewall is deployed. The border interface connecting to FR is an IP Transit. The FR/fusion firewall is manually configured to do selective VRF route leaking of prefixes between the SD-Access virtual networks and the external networks. The FR governs the access policy using ACLs, between the VRFs and the Global Routing Table (GRT). Use of the firewall as a FR gives an additional layer of security and monitoring of traffic between virtual networks.

Access Networks

CCI is versatile and modular, allowing it to support different kinds of access networks. Different CCI solutions such as Smart Lighting, Smart Parking, Safety and Security, and Connected Roadways have different access networks needs and can seamlessly use CCI as a common network infrastructure.

The list of access networks included in this release are:

- CCI Ethernet access network solution

- CCI Wi-Fi 802.11 access network solution

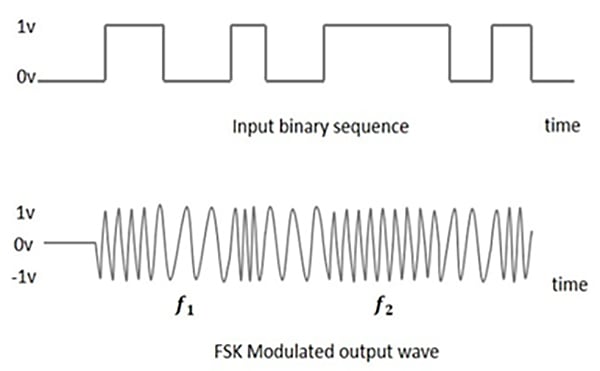

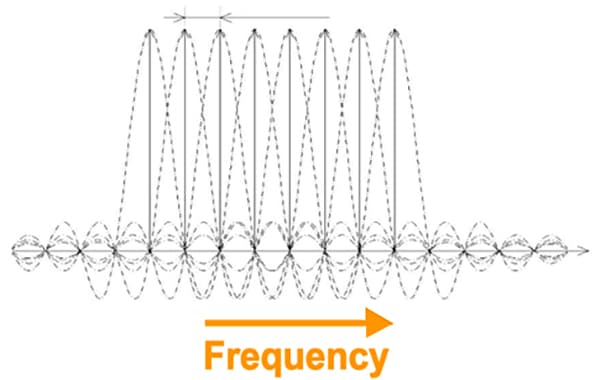

- CCI CR-Mesh (802.154g/e) access network solution (Wi-SUN certified)

- CCI LoRaWAN access network solution

Note: The physical installation of access networking around or on the street/roadway is very different than that of a typical enterprise network; extra care should be taken with respect to environment conditions and rating of equipment (and associated enclosures), as well as the physical security of the network equipment: for example, is it pole-mounted high enough out of reach? Is the enclosure securely locked?

Solution Components

The components of the CCI network are listed in this chapter. Several device models can be used at each layer of the network. The suitable platform of devices for each role in the network and the corresponding CVD-validated software versions are presented in CCI Network Components. To find a list of supported devices, refer to the SD-Access 2.x product compatibility matrix at the following URL:

https://www.cisco.com/c/dam/en/us/td/docs/Website/enterprise/sda_compatibility_matrix/index.html

The exact suitable model can be chosen from the suggested platform list to suit specific deployment requirements such as size of the network, cabling and power options, and access requirements. The components for various CCI general solutions are listed in their respective sections.

Note: In addition to the compatibility matrix, it is recommended to research any product vulnerabilities discovered since publication, via https://tools.cisco.com/security/center/publicationListing.x. This is especially important for ISE and the FlexVPN headend.

** Refer to the URL below for the list of Cisco Catalyst 9500 standard and high performance series of switches that support SVL:

Table 3 Cisco Ultra-Reliable Wireless Backhaul (CURWB) Components

* The Train Radio is not part of the trackside infrastructure. The FM 4500 resides on the train to communicate with the FM 3500 on the trackside.

CCI Switched Ethernet Access Network (PoPs)

This chapter discusses design for CCI Ethernet Access Network for endpoint connectivity.

Ethernet access is provided by connecting Cisco Industrial Ethernet (IE) Ethernet switches to Fabric Edge/FiaB. The Cisco Industrial Ethernet series switches are modular and scalable with various options for 10/10/1000Mbps copper/fiber ports with PoE/PoE+ support. A snapshot of the Cisco Industrial Ethernet (IE) switch portfolio is given in CCI Network Access, Distribution, and Core Layer Portfolio Comparison. The distance covered and number of access ports provided by a single hop of Cisco Industrial Ethernet (IE) Ethernet switch can be highly limiting. Daisy chaining of ENs or PENs provides flexibility for customers to extend the fabric connectivity. Daisy chaining of ENs and PENs in a linear, star and ring topologies are supported. However, multi-hop ring network with REP ring technology is preferred in IoT applications due to distance covered, redundancy, and resiliency features.

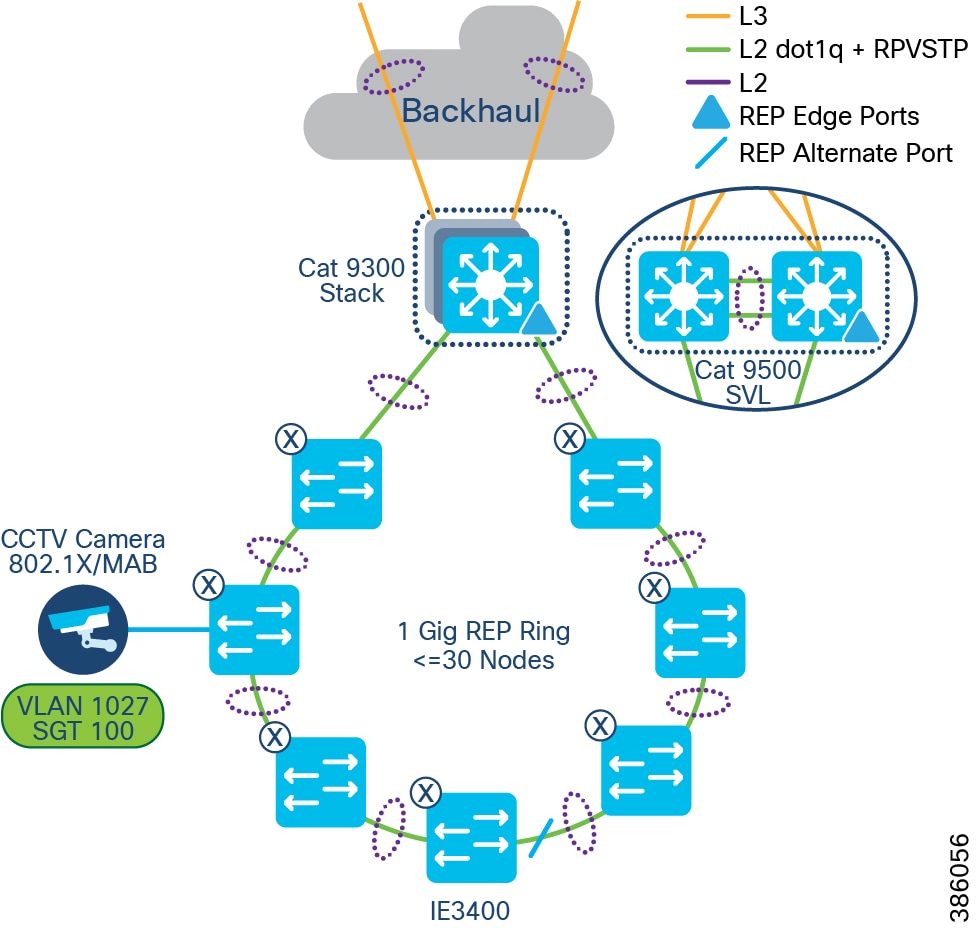

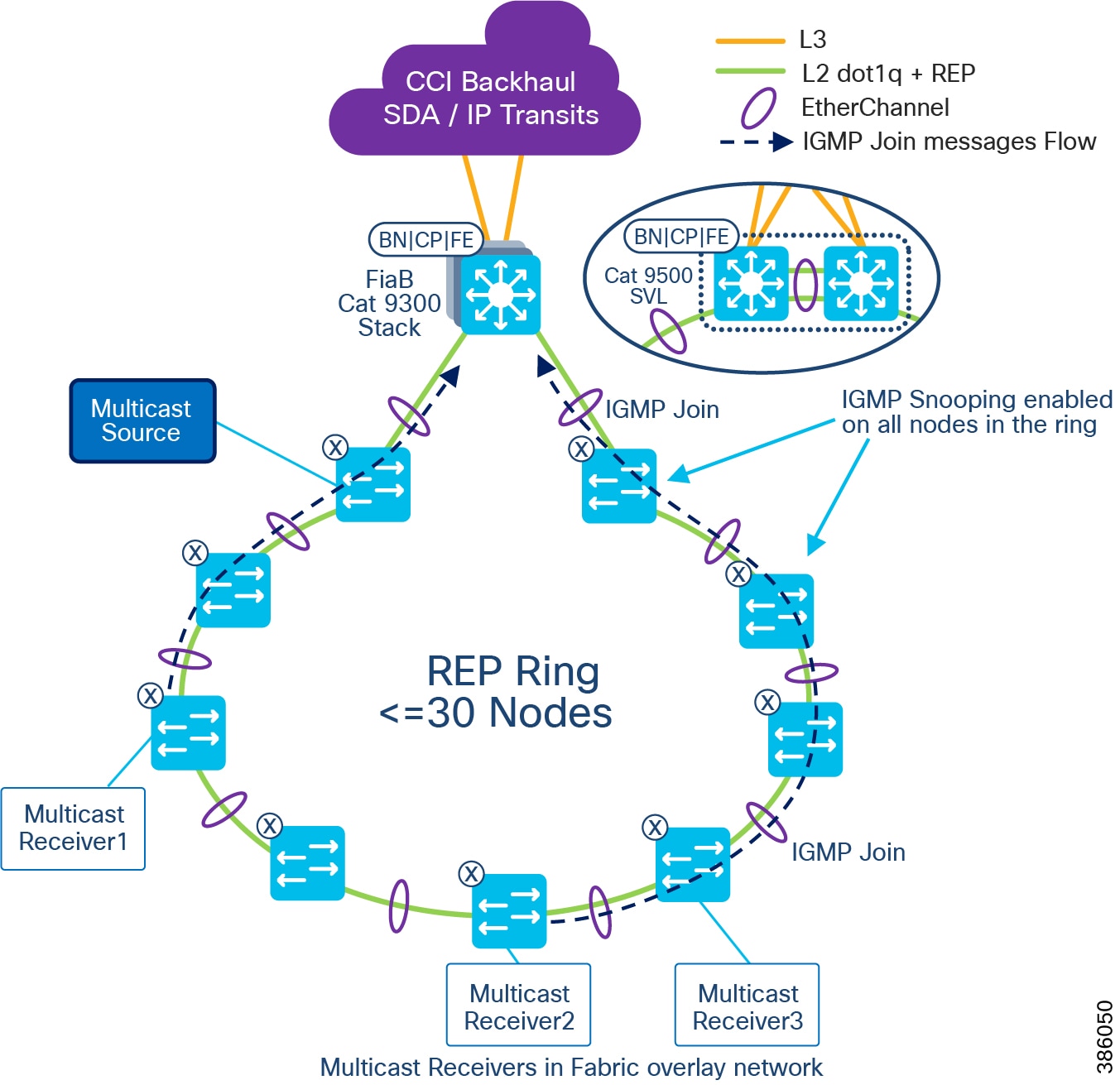

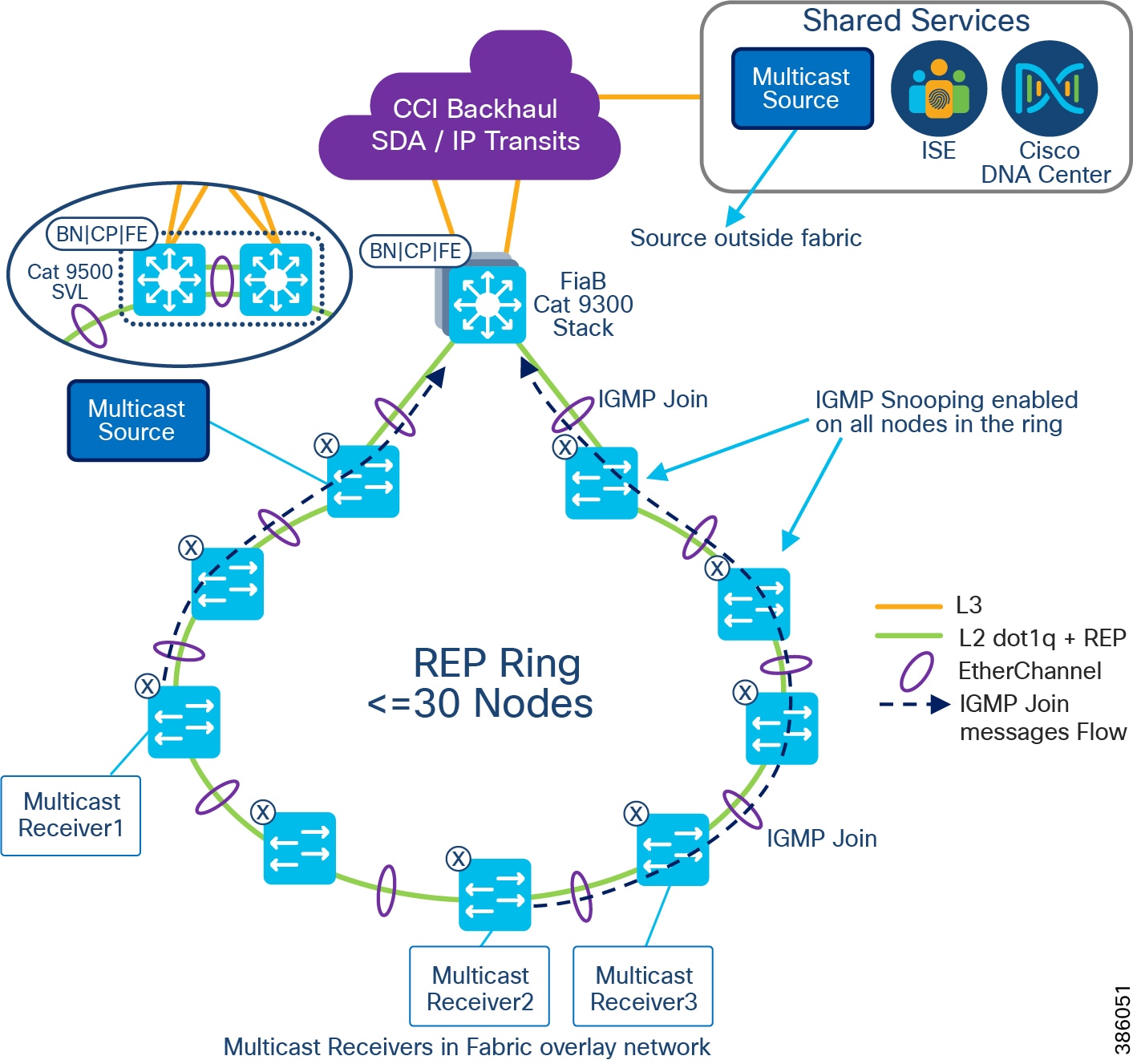

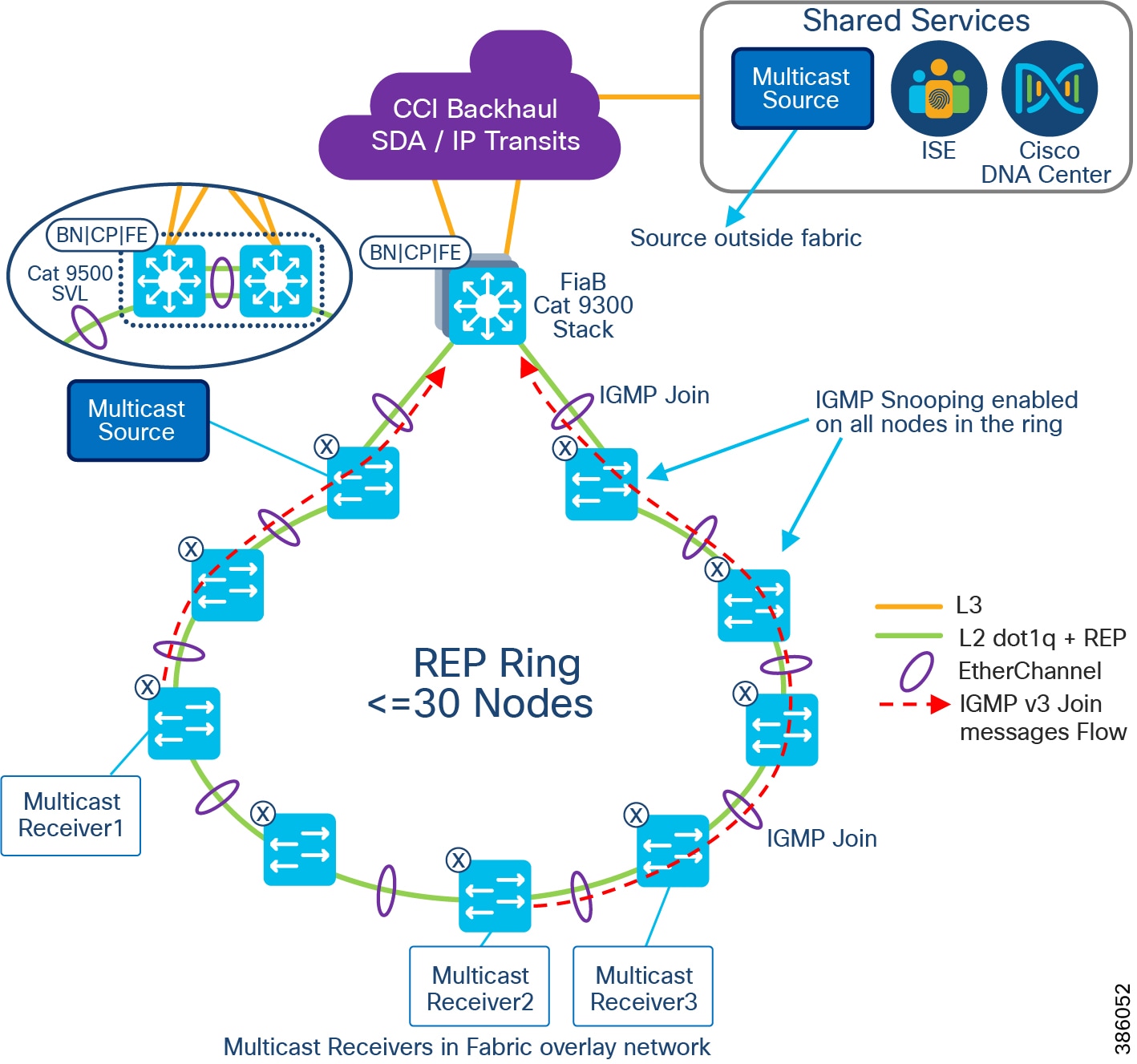

The recommended Ethernet access network topology for CCI is a REP ring formed by Cisco Industrial Ethernet (IE) switches connected back-to-back that terminates both ends of the ring on a stack of Fabric Edge devices. Considering the Ethernet access ring of <30 and multiple such rings in the CCI deployments.

Daisy chaining Linear and Star Topology Design

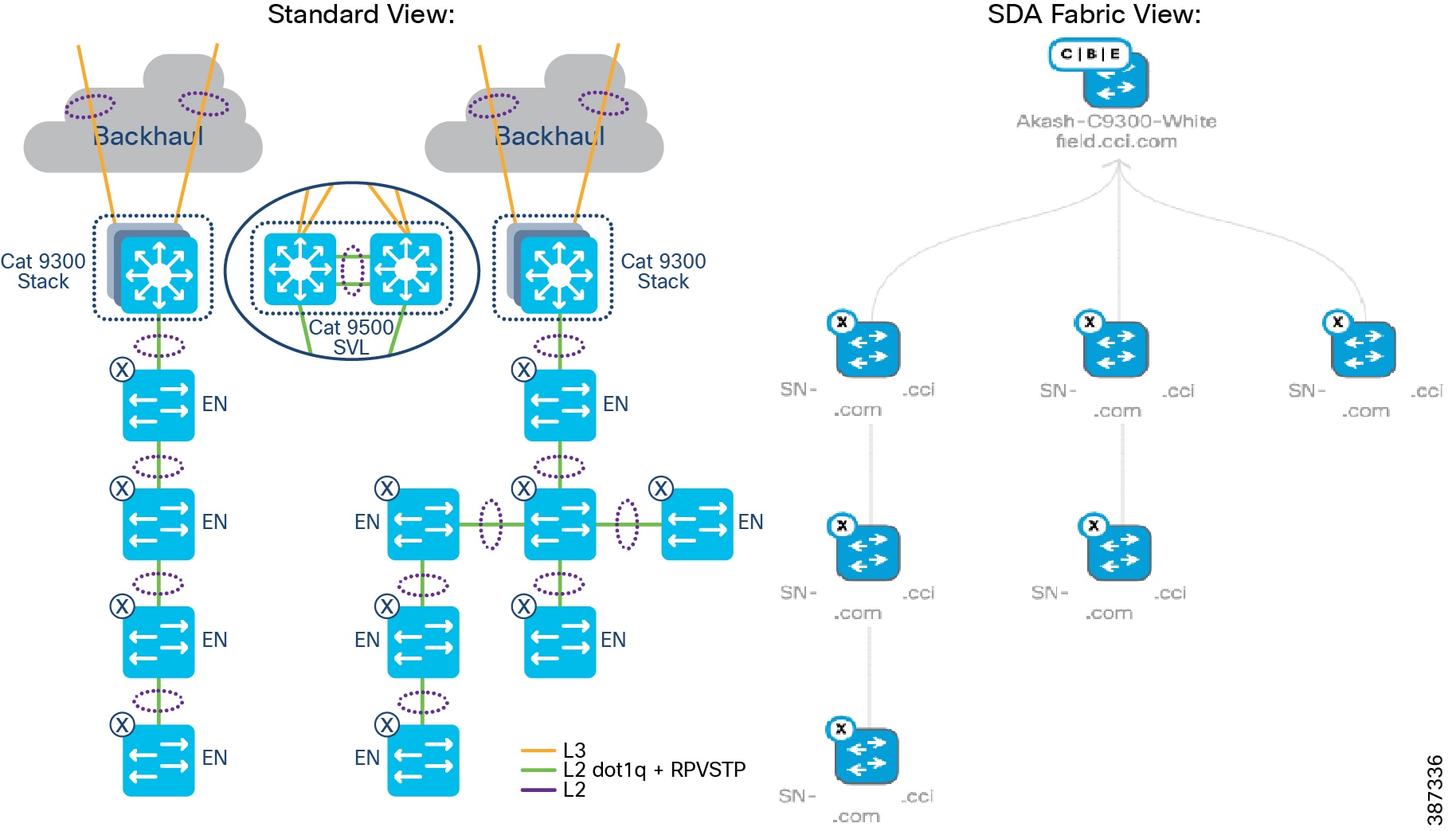

As part of a CCI setup, Extended nodes (ENs) and Policy Extended Nodes (PENs) can be connected to a stack of C9300 or C9500 switches in a StackWise Virtual (SVL) configuration to operate as Fabric-in-a-Box (FiaB).

The Cisco Digital Network Architecture Center (DNAC) release 2.2.2.3 extended the connection capability of ENs and PENs from a Fabric Edge or FiaB to also connect one EN to EN or PEN to PEN in a daisy chain configuration. Customers can now build topologies connecting to those endpoints regardless of where in the network they are deployed from 2.2.2.3 onwards.

The following switches can be daisy chained to form linear and/or star topologies:

- Cisco IE 4000 series

- Cisco IE 5000 series

- Cisco IE 3300 series including 10G uplink switches

- Cisco ESS 3300 switches with the role of EN

Example topologies are shown in EN Daisy chain in a linear and star topology.

Figure 14 EN Daisy chain in a linear and star topology

Daisy chained linear and star topologies extend fabric connectivity for endpoints connected to these switches in CCI PoP sites. Cisco IE 3400 series switches with the role of PENs in SD-Access Fabric are daisy chained to form linear and/or star topologies as shown in EN Daisy chain in a linear and star topology.

The Cisco DNA Center release 2.2.3.3 auto-configures PENs in the daisy chain with appropriate SGT, CTS, and SGACL policy configurations. Daisy chained PENs extend the micro-segmentation and policy automation along with SGACL policy enforcement on the destination PEN in the daisy chain. All nodes in the daisy chain are configured with default Rapid Per VLAN Spanning Tree Protocol (RPVSTP).

Figure 15 PEN Daisy chain in a linear and star topology

Design Considerations

When daisy-chaining EN or PEN devices, the following sequence occurs during PnP:

1.![]() Auto-configuration will happen in the first EN/PEN Device connected to the Fabric edge/FiaB through auto install startup VLAN.

Auto-configuration will happen in the first EN/PEN Device connected to the Fabric edge/FiaB through auto install startup VLAN.

2.![]() The interface connected towards the first EN or PEN is applied with trunk configuration (Switch interface template) and Port channel.

The interface connected towards the first EN or PEN is applied with trunk configuration (Switch interface template) and Port channel.

3.![]() Auto configuration of the initial EN or PEN devices with "No Authentication" template is supported.

Auto configuration of the initial EN or PEN devices with "No Authentication" template is supported.

4.![]() The next set of EN or PEN auto configuration and port channel configurations is pushed from the EN or PEN that was previously provisioned.

The next set of EN or PEN auto configuration and port channel configurations is pushed from the EN or PEN that was previously provisioned.

5.![]() Auto configuration of the next set of EN or PEN devices with "No Authentication" template is supported.

Auto configuration of the next set of EN or PEN devices with "No Authentication" template is supported.

Note: If using the “Open Authentication”, "Close Authentication”, or "Low Impact" templates the Port channels must be created manually. When using “No Authentication”, the Port Channels are created automatically. Additionally, when choosing “No Authentication” at the fabric site level, endpoints will not be authenticated unless explicitly configured using the Host Onboarding workflow.

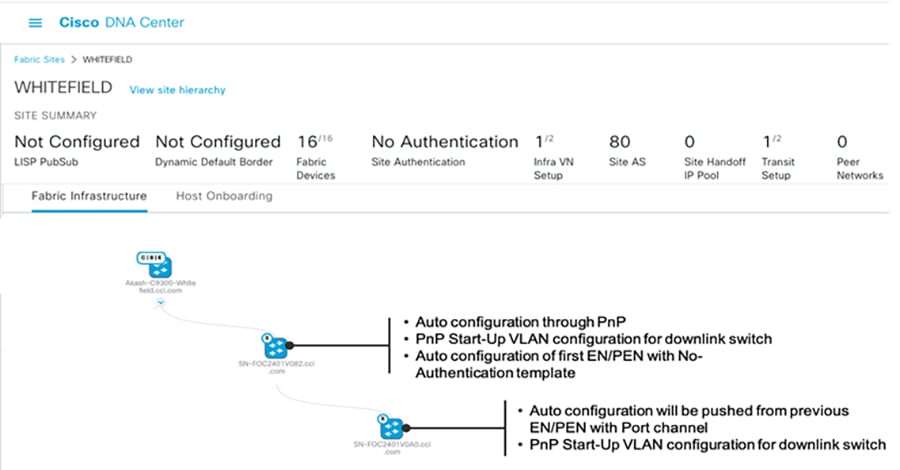

Topology View of a Linear daisy chain of ENs in a fabric illustrates a topology view and onboarding process of ENs in a CCI PoP.

Figure 16 Topology View of a Linear daisy chain of ENs in a fabric

Limitations and Restrictions of Daisy Chaining

- A mix of PENs and ENs in a single daisy chain is not supported. A daisy chain must be all PENs or ENs.

- PENs and ENs can only connect to one Fabric Edge or FiaB. Dual-homing of one EN to two different Fabric Edges or FiaB is not supported.

- The default maximum size of an EN daisy chain is 18 nodes. This is limited by the default STP max-age timer value of 20 on the STP root bridge. A maximum of 38 ENs or PENs can be provisioned in a single daisy chain by changing the STP max-age timer value to 40 on the FiaB as the STP root bridge.

- Only new REP ring (Greenfield) deployments are supported; an existing daisy chain topology if any (may have been configured using Day N templates) in a PoP cannot migrated using Cisco DNA Center daisy-chaining feature.

- It is recommended to make the Fabric Edge or FiaB the root bridge of the EN spanning tree network. If it is not, the size of other daisy chains of extended nodes connected to same Fabric Edge or FiaB is limited.

- The total number MAC addresses supported is 32,000 for C9300 and C9500 switches used as Fabric Edge or FiaB.

Ring Topology

In this topology, the Cisco Industrial Ethernet (IE) switches are connected to the Fabric Edge or FiaB in a ring form. A default STP ring topology of IE switches can be configured using Cisco DNA Center for deployments where stringent ring failure convergence is not a requirement for endpoints connected to the ring. This section discusses STP and REP access ring topologies in the CCI design.

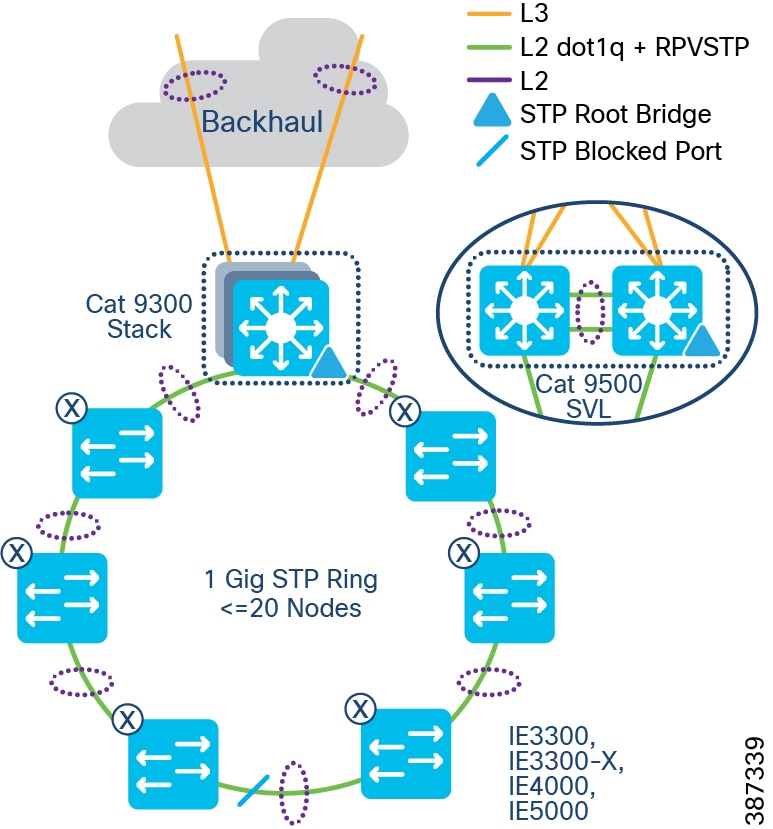

Spanning Tree Protocol (STP) Ring

In Spanning Tree Protocol (STP) topology, the Cisco Industrial Ethernet (IE) switches are connected to the Fabric Edge or FiaB in a ring form, as shown in STP ring of Extended Nodes. An STP ring of ENs or PENs topology can be formed by connecting two linear daisy chains of ENs or PENs onboarded using the Cisco DNA Center. An STP ring of ENs/PENs thus formed will have Rapid Per VLAN Spanning Tree Protocol (RPVSTP) enabled for loop avoidance.

Note: An STP ring of ENs or PENs is formed by interconnecting two linear daisy chains of ENs or PENs using physical cabling needed to close the loop. Manually configure the port channel on the interconnecting interfaces forming the loop or use the Day-N templates.

Figure 17 STP ring of Extended Nodes

STP Ring Design Considerations

- Configuring the STP blocked port in the middle of the ring using Port Priority configuration for blocked port election is recommended.

- The default maximum size of an Extended node Daisy Chain is 18 nodes. This is because the default STP diameter configuration is 20 switches in a STP topology. You can change the maximum to 38 ENs or PENs in a single ring by changing the STP maximum-age timer value to 40 on the FiaB as the root bridge.

- Making the Fabric Edge/FiaB the root bridge of the Spanning Tree network of ENs or PENs is recommended. When an EN or PEN is the Spanning Tree Root Bridge, the size of other daisy chains of extended nodes connected to the same Fabric Edge/FiaB is limited.

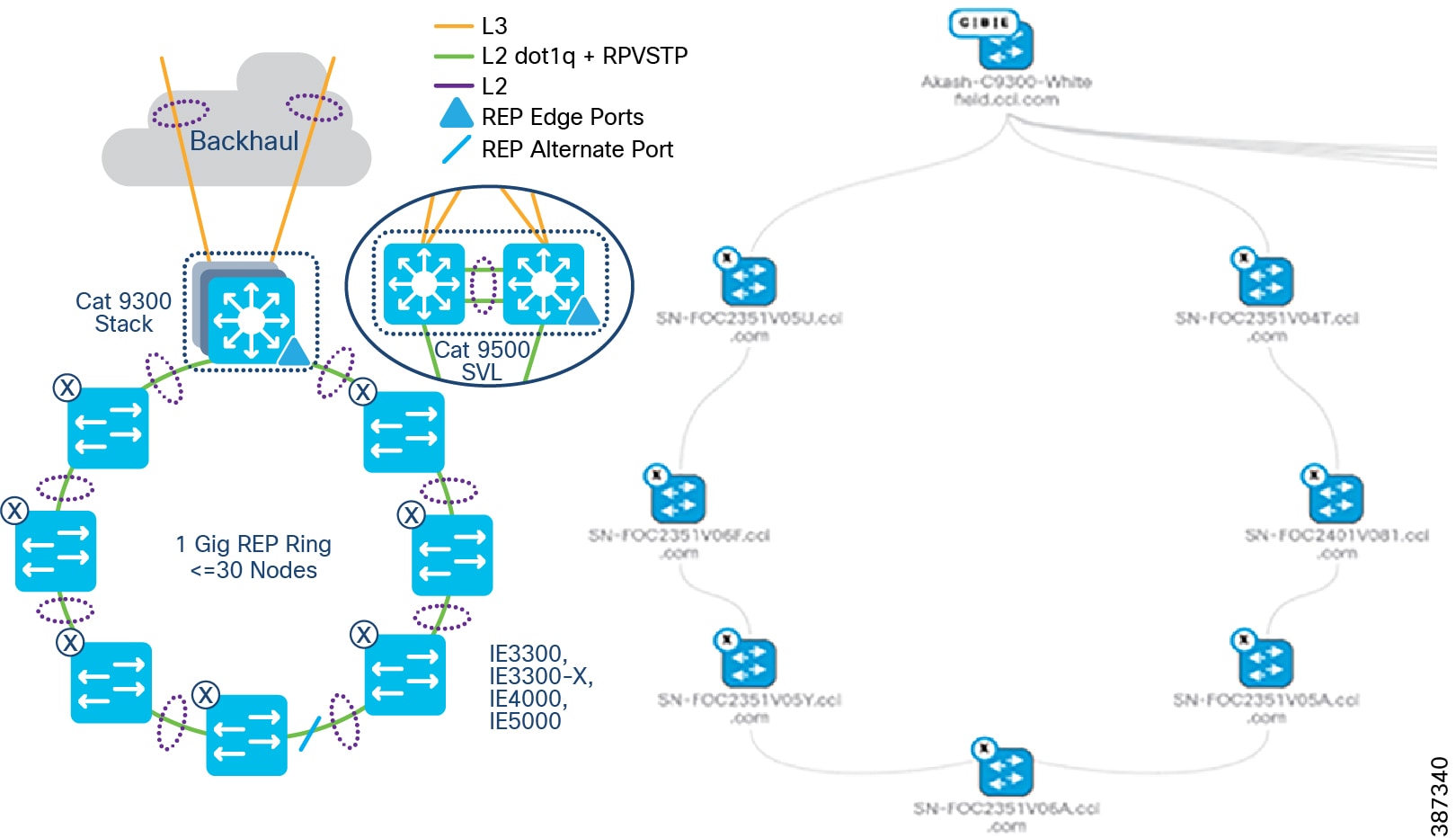

Resilient Ethernet Protocol (REP) Ring

Resilient Ethernet Protocol (REP) is the preferred resiliency protocol for IoT applications. All configurations of the Cisco Industrial Ethernet (IE) switches, including the REP configuration in the ring, can be zero-touch provisioned (ZTP) using Cisco DNA Center. REP automatically selects the preferred alternate port. Manually changing the preferred alternate port impacts recovery time in a REP ring failures and is not recommended.

The preferred alternate port selected by REP is blocked during normal operation of the ring. In the case of a REP segment failure, the preferred alternate port is automatically enabled by REP, making an alternate path for the disconnected segment. When the failed REP segment recovers, that port is again made the preferred alternate port and blocked by REP. In this way recovery occurs with minimal convergence time. In CCI, the desired REP convergence time for a 30 node REP ring is less than 100ms, which is achievable based on the verified results.

Note: A mixed ring of IE4000/IE5000/IE3300/IE3300-X/ESS3300 and IE3400 is not recommended and a mixed ring of EN and PEN nodes is not supported.

Two uplinks of a Cisco Industrial Ethernet (IE) switch are connected to two access ports on the Fabric Edge (FE), preferably terminating on two different switch members of the FiaB stack. The two ports that the Cisco IE switch is connected to are auto configured into a port channel by the Cisco DNA Center and marked as EN ports, or PEN ports for IE3400 switches. The Cisco DNA Center also configures these ports as trunk ports allowing all VLANs. Based on the VLAN of the traffic entering the EN port of the FE, it is tagged with appropriate Security Group Tag (SGT) and Virtual Network (VN), and the segmentation policy is applied.

Note: CURWB Radios that connect to the Ethernet access ring can often require a maximum transmission unit (MTU) greater than 1500 bytes. Therefore, configuring a system-wide MTU of 2000 bytes on all IE switches in the ring to accommodate to higher MTU packets is recommended.

The REP primary and secondary edge ports are configured on FiaB on a stack of C9300 Series switches or C9500 switches StackWise Virtual, forming a closed ring of Cisco Industrial Ethernet (IE) switches. This allows detection of any REP segment failure, including the uplink ports of ENs or PENs on the FiaB Stack or C9500 StackWise Virtual, and initiates convergence. Provisioning REP as a closed ring topology in CCI as shown in CCI Access Network REP Ring Topology for network high availability and improved traffic convergence in case of link failures within the REP segment is recommended.

Figure 18 CCI Access Network REP Ring Topology

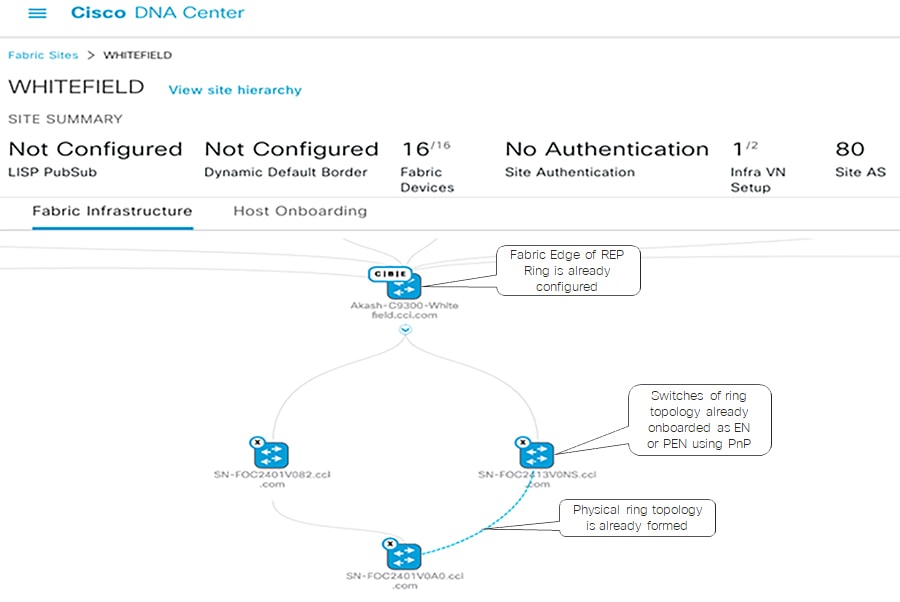

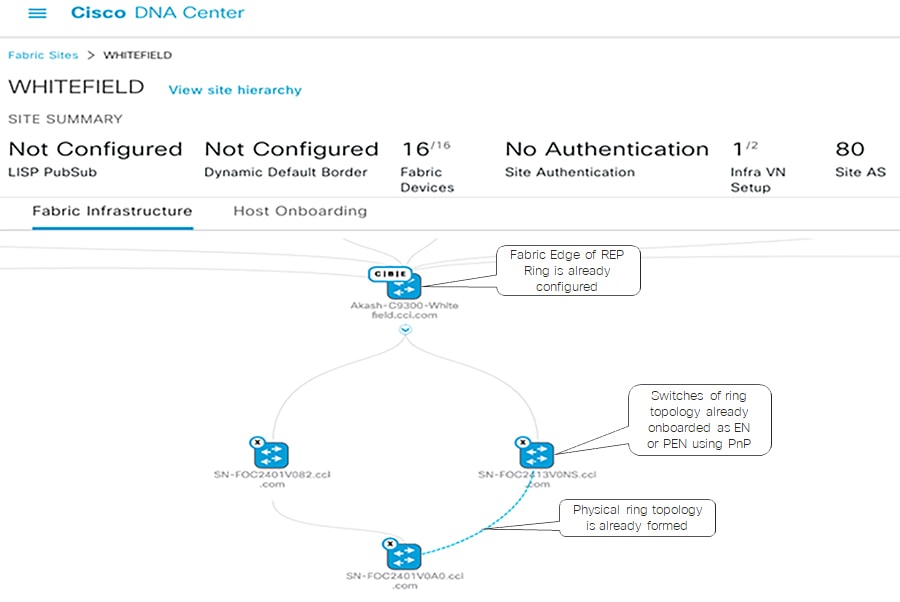

Provisioning the REP Ring using Cisco DNA Center REP Workflow

Cisco DNA Center release 2.2.3.3 REP configuration workflow feature extends the cascading of multiple EN or PEN switches in a ring topology. The ring topology is set up though physical connection between two ENs or PENs and switches that are onboarded into the Cisco DNA Center fabric through Plug and Play (PnP). The Cisco DNA Center REP automation workflow feature considers FiaB as a REP edge device to form a REP ring from two ENs or PENs connected to the same Fabric Edge or FiaB.

Cisco DNA Center REP ring configuration Prerequisites shows the prerequisites for the REP ring configuration using Cisco DNA Center REP automation feature.

Figure 19 Cisco DNA Center REP ring configuration Prerequisites

The detailed step-by-step instructions to configure REP ring using workflow for the Extended or Policy Extended Nodes ring are discussed in the CCI Implementation Guide.

REP Ring Design Considerations, Limitations, and Restrictions

- Only new REP ring (Greenfield) deployments are supported; I.e, an existing REP ring topology if any (may have been configured using Day N templates) in a CCI PoP cannot migrated to a new REP ring configuration using Cisco DNA Center REP automation feature.

- Considering the Fabric Edge (FE) as the root bridge, then a maximum of 20 nodes including FE is supported without tuning the STP timer. To support 30 nodes in a REP ring, configure the REP ring by changing the STP maximum-age timer value to 40 on the STP root bridge before starting the REP configuration workflow.

- A mix of EN and PEN in a daisy chain or REP ring is not supported.

- To insert or delete an EN or PEN Node in the existing REP Ring delete the REP Ring. A switch connected in a REP Ring cannot be deleted from the fabric until the REP Ring that it is a part of it is deleted.

- Multiple rings within a REP ring is not supported; a ring of rings is not supported.

Provisioning the REP Ring using Cisco DNA Center REP Workflow

Cisco DNA Center release 2.2.3.3 REP configuration workflow feature extends the cascading of multiple EN or PEN switches in a ring topology. The ring topology is set up though physical connection between two ENs or PENs and switches that are onboarded into the Cisco DNA Center fabric through Plug and Play (PnP). The Cisco DNA Center REP automation workflow feature considers FiaB as a REP edge device to form a REP ring from two ENs or PENs connected to the same Fabric Edge or FiaB.

Cisco DNA Center REP ring configuration Prerequisites shows the prerequisites for the REP ring configuration using Cisco DNA Center REP automation feature.

Figure 20 Cisco DNA Center REP ring configuration Prerequisites

The detailed step-by-step instructions to configure REP ring using workflow for the Extended or Policy Extended Nodes ring are discussed in the CCI Implementation Guide.

REP Ring Design Considerations, Limitations, and Restrictions

- Only new REP ring (Greenfield) deployments are supported; I.e, an existing REP ring topology if any (may have been configured using Day N templates) in a CCI PoP cannot migrated to a new REP ring configuration using Cisco DNA Center REP automation feature.

- Considering the Fabric Edge (FE) as the root bridge, then a maximum of 20 nodes including FE is supported without tuning the STP timer. To support 30 nodes in a REP ring, configure the REP ring by changing the STP maximum-age timer value to 40 on the STP root bridge before starting the REP configuration workflow.

- A mix of EN and PEN in a daisy chain or REP ring is not supported.

- To insert or delete an EN or PEN Node in the existing REP Ring delete the REP Ring. A switch connected in a REP Ring cannot be deleted from the fabric until the REP Ring that it is a part of it is deleted.

- Multiple rings within a REP ring is not supported; a ring of rings is not supported.

Note: Cisco DNA Center REP Ring Automation is a limited available feature. Please contact Cisco Sales team before using this feature in CCI deployments.

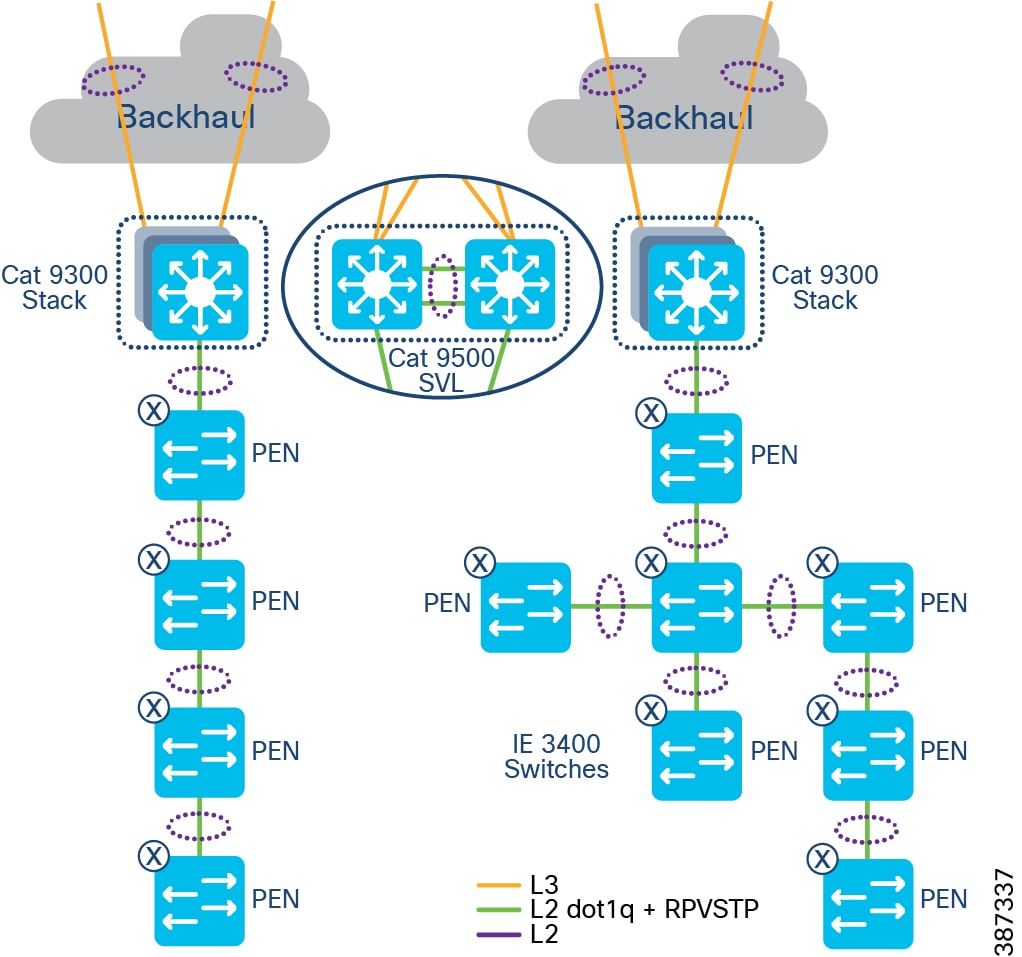

Policy Extended Node Ring

Additionally, an Ethernet access ring network consisting of all IE3400 Series switches only can be formed as a Policy Extended Node ring, as shown in CCl Policy Extended Node REP Ring Topology.

Endpoints or hosts onboarded in the Policy Extended Node in the ring will have the right VLAN and SGT tag attributes downloaded from ISE to enforce communication policy based on SGT for improved endpoint and ring security. Also, the Policy Extended Node in the ring support 802.1X/MAB based closed authentication for endpoints.

Figure 21 CCl Policy Extended Node REP Ring Topology

Cisco DNA Center REP workflow can be used to discover and provision all PEN Cisco Industrial Ethernet (IE) switches in the access ring. Refer to the section Provisioning the REP Ring using Cisco DNA Center REP Workflow. The detailed step-by-step instructions to configure daisy-chained ring topology and REP using workflow for the Extended Nodes or Policy Extended Node ring are covered in the CCI Implementation Guide.

Note: REP Fast feature is capable of reducing L2 convergence times, however REP Fast is only supported on IE3x00 and ESS3300 switches (not IE4000, IE5000 nor Catalyst 9000), and is also not supported on Port Channel interfaces – because of this, REP Fast is not suitable for inclusion in the CCI CVD. For more information on REP Fast please see https://www.cisco.com/c/en/us/products/collateral/switches/industrial-ethernet-switches/white-paper-c11-743432.html

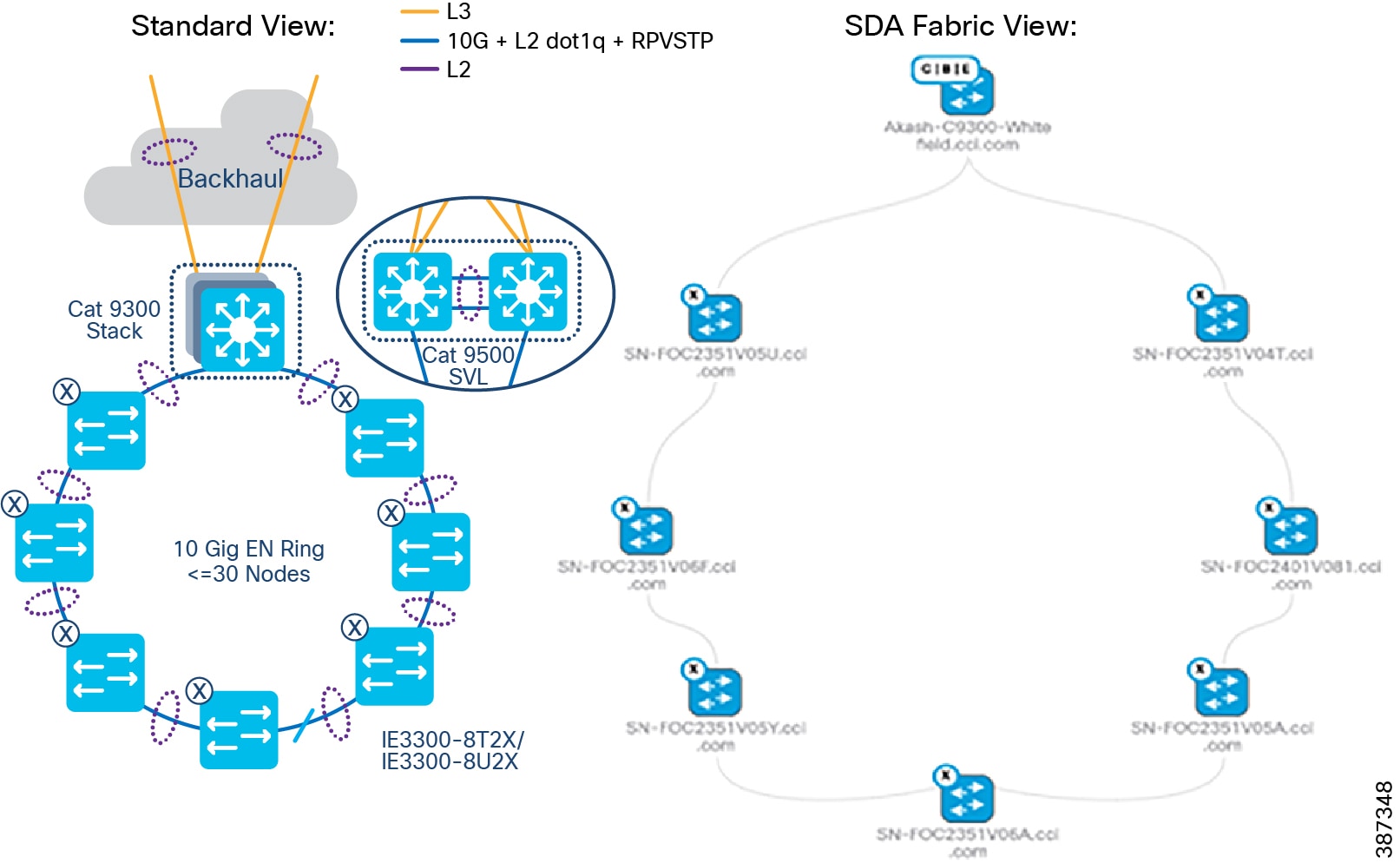

Ten Gigabit Ethernet Access Ring Design

Cisco Catalyst IE3300 Rugged Series switches deliver up to 10 Gigabit high-speed Ethernet connectivity in a compact form factor. They are designed for a wide range of industrial applications where hardened products are required. The platform is built to withstand harsh environments in manufacturing, energy, transportation, mining, smart cities, and oil and gas. The modular design of the Cisco Catalyst IE3300 Rugged Series offers the flexibility to expand to up to 26 ports of Gigabit Ethernet or up to 24 ports of Gigabit Ethernet and 2 ports of 10 Gigabit (10G) Ethernet with a range of expansion module options.

The Cisco IE3300 10G series with expansion module is rated 480W for IEEE® 802.3af / 802.3at / 802.3bt (type 3 & type 4), shared across up to 24 ports. It is ideal for connecting high power over Ethernet (PoE) end devices such as PTZ IP cameras, phones, high power 802.11ac Wave 2 / 802.11ax wireless access points, sensors, and other devices.

Cisco IE330010Gig Series (aka IE3300-X) switches are available in the following SKUs:

- IE3300-8T2X-E/A – a Non-PoE switch SKU with either Network Essentials (E) or Network Advantage (A) license option

- IE3300-8U2X-E/A - a PoE switch (480W maximum PoE budget) SKU with either Network Essentials (E) or Network Advantage (A) license option

Refer to the following link for more details on Cisco Catalyst IE3300 Rugged Series switches.

https://www.cisco.com/c/en/us/products/collateral/switches/catalyst-ie3300-rugged-series/catalyst-ie3300-rugged-series-ds.html

In CCI, an Ethernet access ring in a point of presence (PoP) can deliver 10 Gigabit speed with up to 18 switches in a spanning tree protocol (STP) ring using the maximum-age timer default value 20 and fabric-in-a-box (FiaB) as the STP root bridge. Changing the maximum-age timer value to 30 allows a ring configuration of 30 switches. Refer to the Daisy chaining Linear and Star Topology Design for more details on the ring size. 10Gigabit Ethernet Access ring shows a 10Gigabit Ethernet access ring topology in a CCI PoP providing high-speed network connectivity to endpoints in the network. The ring is formed with one 10Gig interface in the Port Channel (PC) supporting one 10G interface for uplink PC and another 10G interface for downlink PC from each industrial Ethernet (IE) switch in the ring. Although the Cisco Catalyst IE5000 Series classic switches provide a 10Gig access ring, a IE3300-X 10Gig series switches for high-speed ring is recommended because it also supports the following advantages.

- The latest Catalyst-based IOX-XE software with edge-compute capabilities for hosting a Cyber Vision Network sensor application

- Higher PoE ports density and PoE power budget for access points and endpoints

–![]() IE-3300-8U2X Base module support - 8 x 1Gigabit Ethernet copper ports (up to 60W)

IE-3300-8U2X Base module support - 8 x 1Gigabit Ethernet copper ports (up to 60W)

–![]() IEM-3300-4MU Expansion module - 4 x Multigigabit Copper Ports operating in either 1G or 2.5G speed 4-pair Power-over-Ethernet (4PPoE) Type 4 (up to 90W).

IEM-3300-4MU Expansion module - 4 x Multigigabit Copper Ports operating in either 1G or 2.5G speed 4-pair Power-over-Ethernet (4PPoE) Type 4 (up to 90W).

Figure 22 10Gigabit Ethernet Access ring

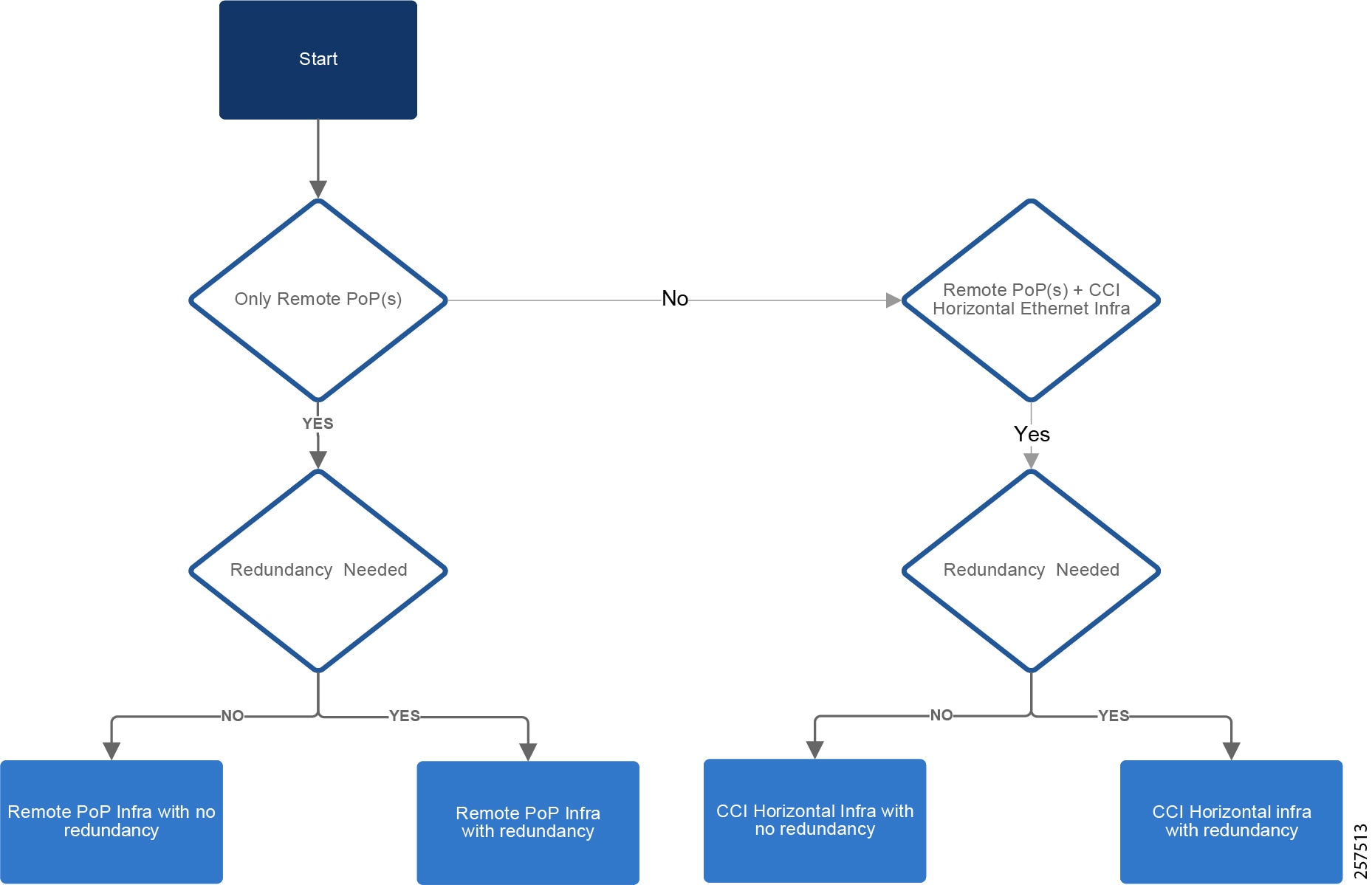

CCI Remote Point-of-Presence Design

This chapter covers CCI Remote Point-of-Presence (RPoP) design considerations to extend CCI macro segmentation and multiservice network capabilities to remote sites along with RPoP network, management and services high availability.

This chapter includes the following major topics:

- Remote Point-of-Presence Gateways

- Remote Point-of-Presence Design Considerations

- RPoP High Availability Design

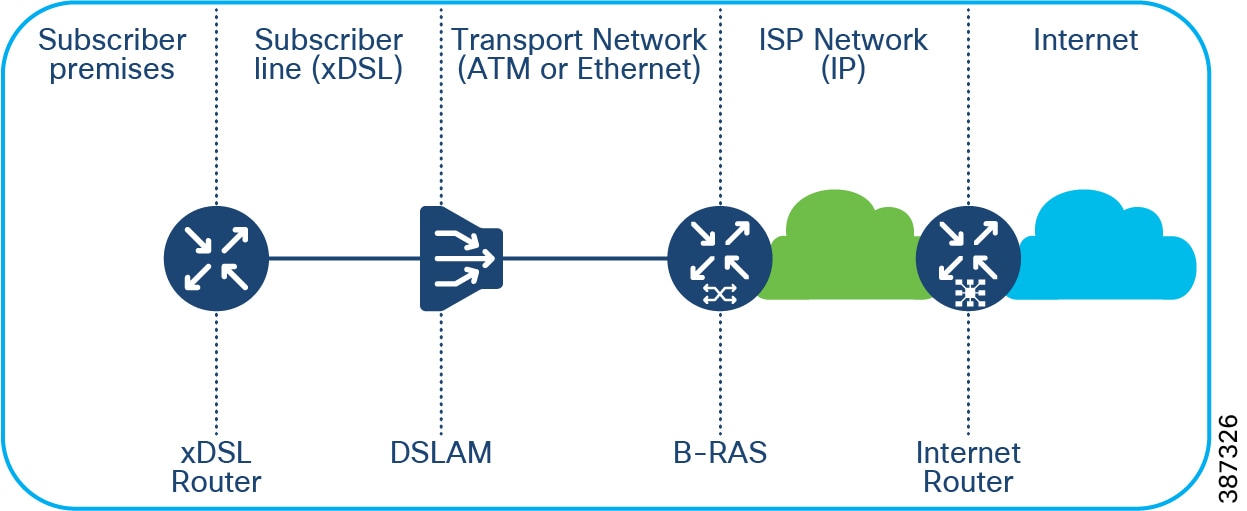

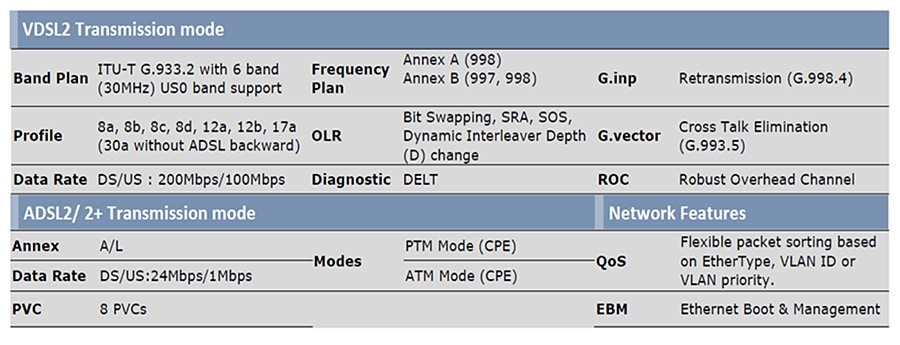

- RPoP DSL Backhaul Design

- Remote PoP Gateways Management

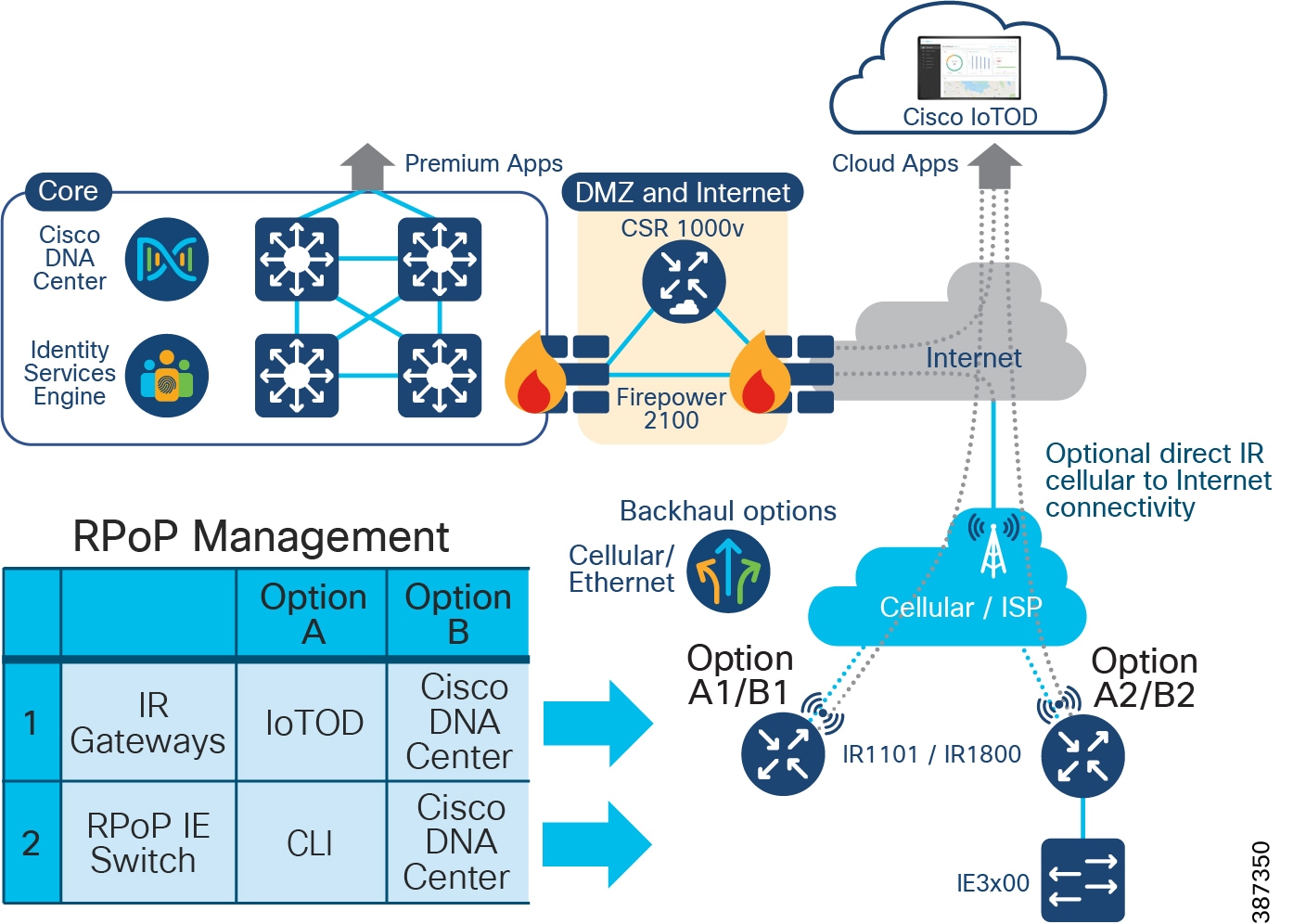

Remote Point-of-Presence Gateways

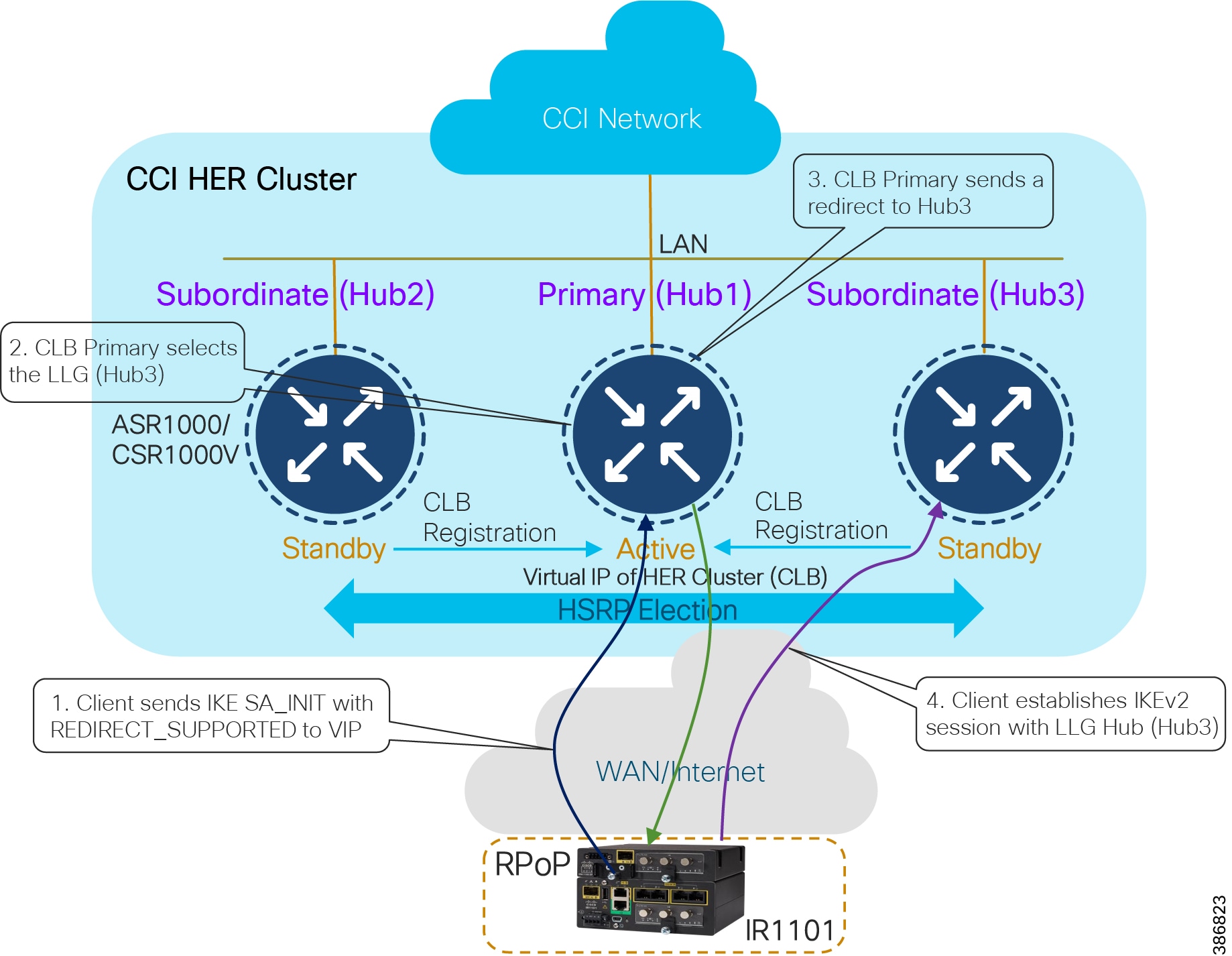

An RPoP is a Connected Grid Router (CGR) or Cisco Industrial Router (IR) and is typically connected to the Public Internet via a cellular connection, although any suitable connection can be used (such as xDSL or Ethernet), over which FlexVPN secure tunnels are established to the CCI HE in the DMZ.

This section covers the CCI Remote PoP gateway(s) that aggregates CCI services at RPoP(s) and extends the CCI multiservice network to RPoP endpoints. The RPoP router may provide enough local LAN connectivity, or an additional Cisco Industrial Ethernet (IE) switch may be required.

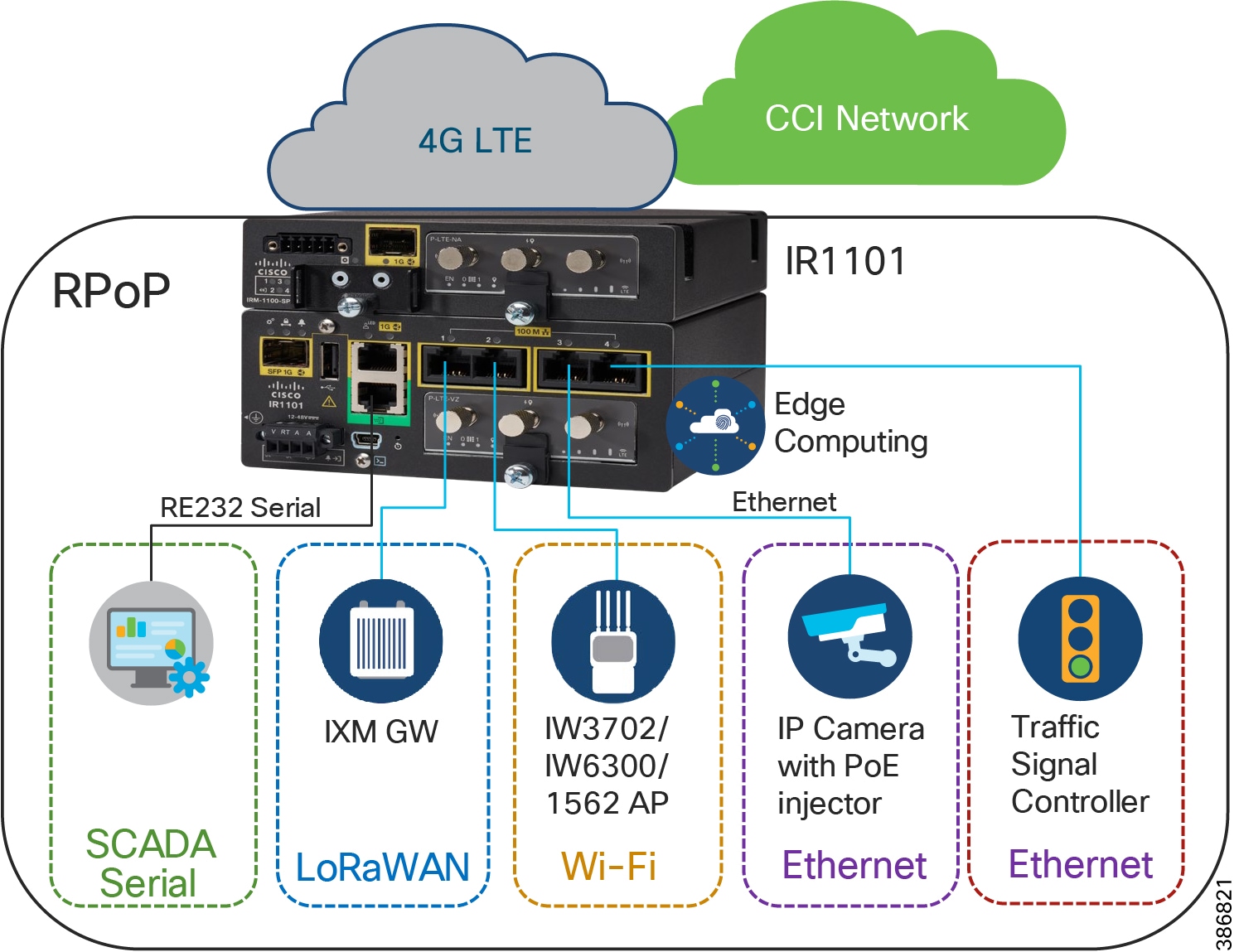

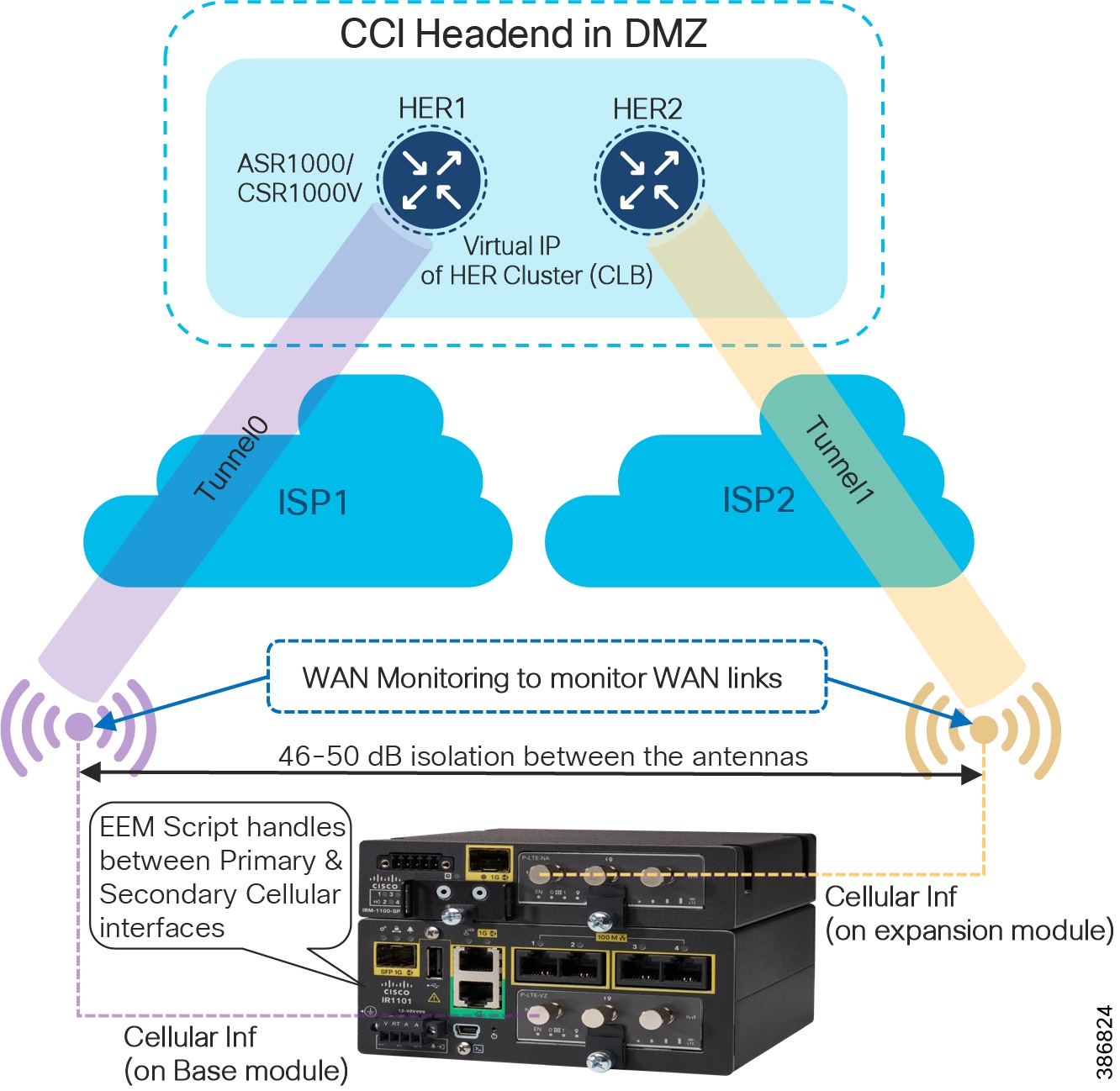

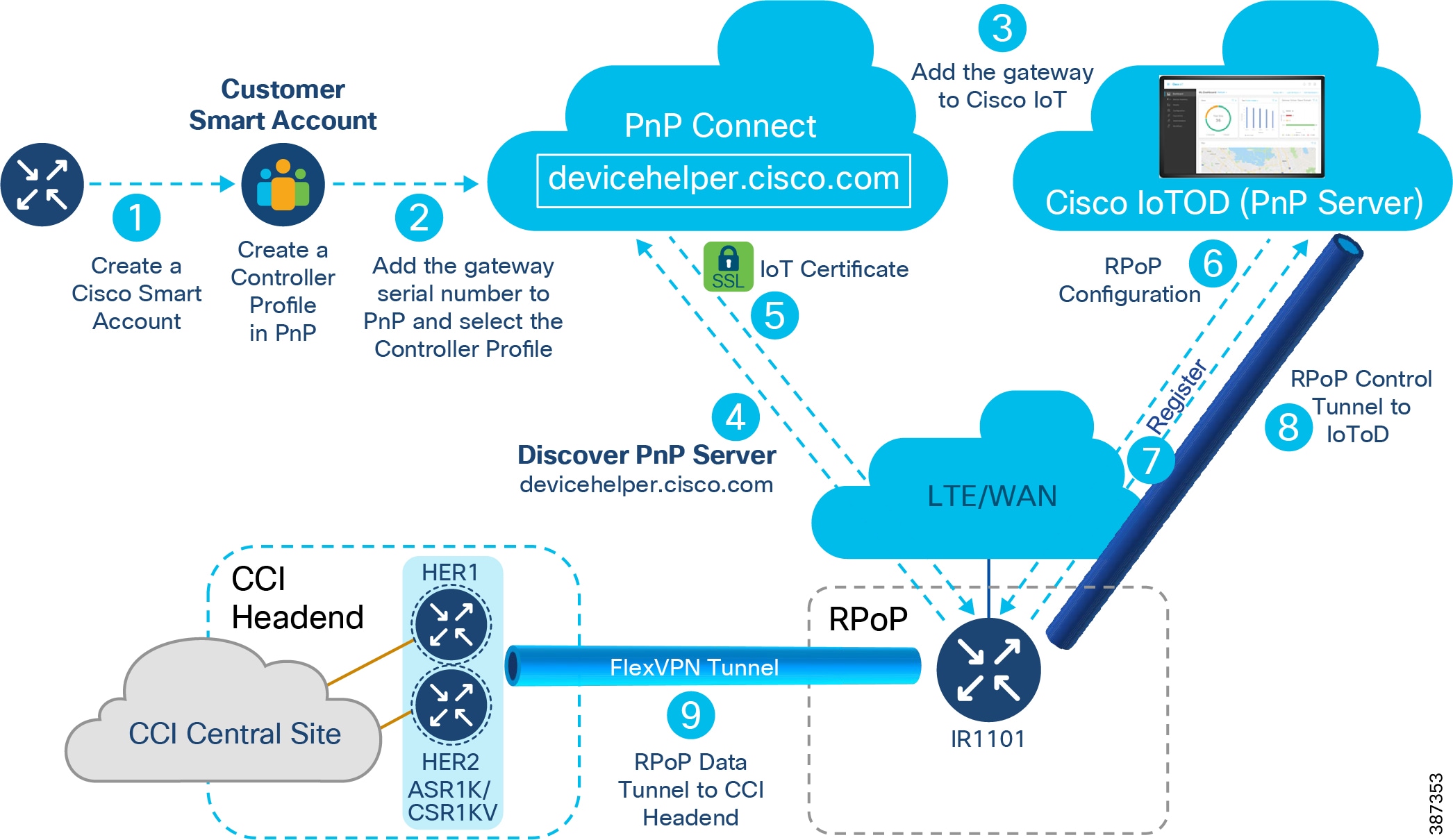

Cisco IR1101 as RPoP Gateway

Cisco IR1101 Integrated Services Router is a modular and ruggedized platform designed for remote asset management across multiple industrial vertical markets. As part of the CCI solution, the IR1101 can play the role of a CCI RPoP gateway aggregating remote site (RPoP) endpoints/assets and services and extending the CCI multiservice network to the RPoP along with network macro-segmentation.

For more details, refer to the IR1101 Industrial Integrated Services Router Hardware Installation Guide at the following URL:

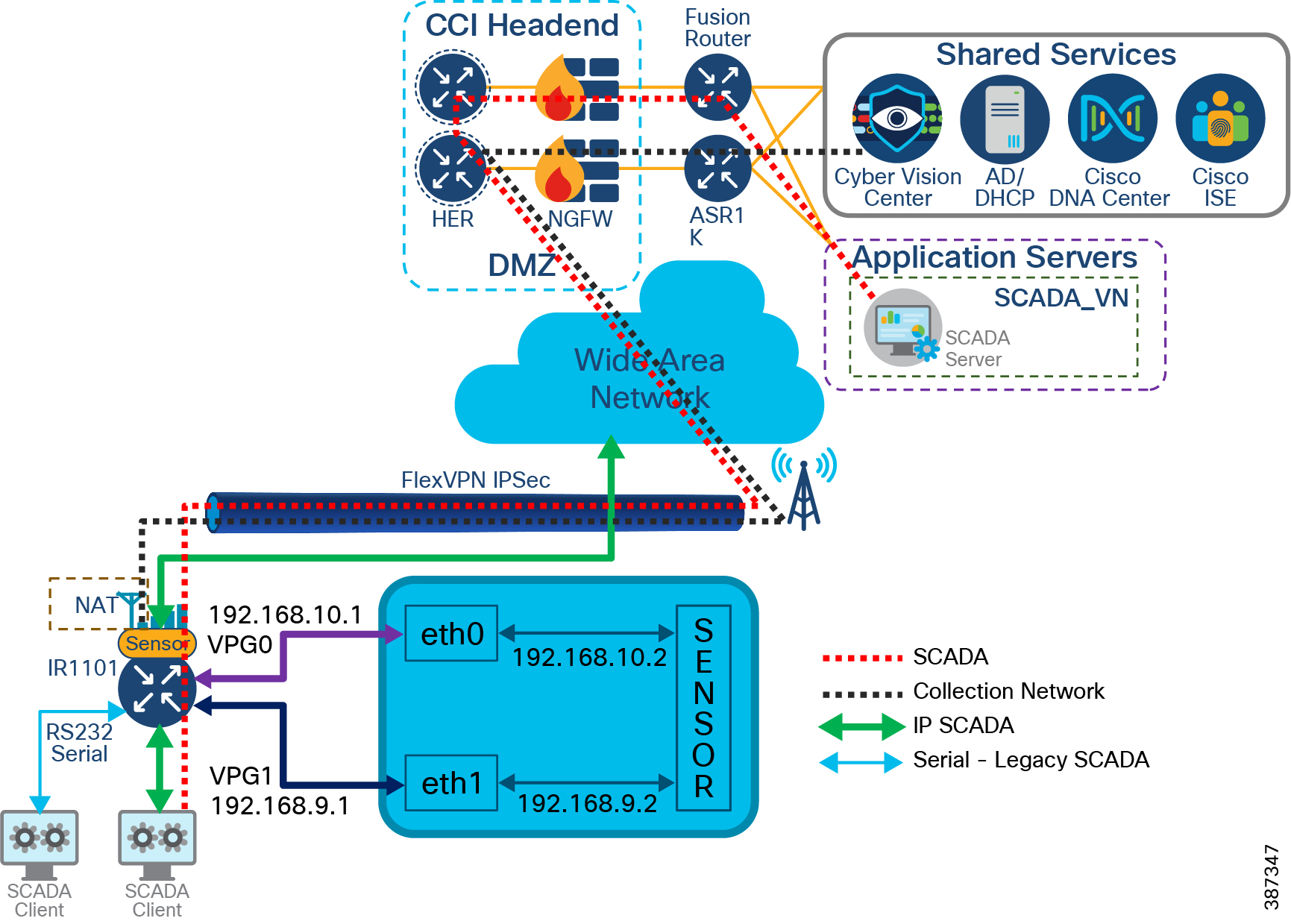

As shown in An Example Multiservice RPoPRPoP Macro-Segmentation Design , IR1101 is designed as a modular platform for supporting expansion modules with edge compute. IR1101 supports a variety of communication interfaces such as four FE ports, one combo WAN port, RS232 Serial port, and LTE modules. The cellular module is pluggable and a dual SIM card and IPv6 LTE data connection are supported. SCADA Raw sockets and protocol translation features are available.

The IR1101 provides investment protection. The base module of IR1101 provides a modular pluggable slot for inserting the pluggable LTE module (or) storage module. The expansion module, on the other hand, also comes with a modular pluggable slot for inserting the pluggable LTE module. Overall, two pluggable LTE modules could be inserted on IR1101 (with an expansion module), thus enabling cellular backhaul redundancy with Dual LTE deployments.

Using the expansion module, an additional fiber (SFP) port, an additional LTE port and an SSD local storage for applications could be added to the capability of IR1101.

For more details on IR1101 base and expansion modules, refer the following URL:

Cisco IR1800 as RPoP Gateway

Cisco Catalyst IR1800 Rugged Series Routers are secure, high-performance, 5G routers in a modular design that support private LTE, Wi-Fi6 and Gigabit Ethernet. In CCI, IR1800 series routers can be used as RPoP gateways providing better response time, and increase cost efficiencies with secure, reliable access to real-time data for various industry vertical use cases.

Ultra-modular design supports evolving business and technical needs, protecting your investment.

Supports multiple different modules, including public or private 4G/LTE and 5G, Wi-Fi 6, FirstNet certified public safety LTE, SSD, and advanced GNSS, thus providing a high level of flexibility to choose the desired configuration to suit individual deployments.

Refer to the following URL for more details on Cisco Catalyst IR1800 Rugged Series Routers:

- https://www.cisco.com/c/en/us/products/collateral/routers/catalyst-ir1800-rugged-series-routers/nb-06-cat-ir1800-rugged-ser-rout-ds-cte-en.html

Note: Cisco IR-1800 platform cannot be managed by IoTOD as it is not supported. It can only be managed by Cisco DNA Center.

Cisco CGR1240 as RPoP Gateway

The CGR 1000 Series Routers are ruggedized, modular platforms on which utilities and other industrial customers can build a highly secure, reliable, and scalable communication infrastructure. They support a variety of communications interfaces, such as Ethernet, serial, cellular, Radio-Frequency (RF) mesh, and Power Line Communications (PLC). In CCI, CGR1240 router can be used as Field Area Router and RPoP gateway with cellular backhaul for providing CR-Mesh access network in CCI PoP and RPoPs.

Refer to the section CR-Mesh Network for more details on CGR1240 in CCI and refer the CCI Remote PoP and IoT Gateways Portfolio Comparison for more details on other IRs as RPoP gateways.

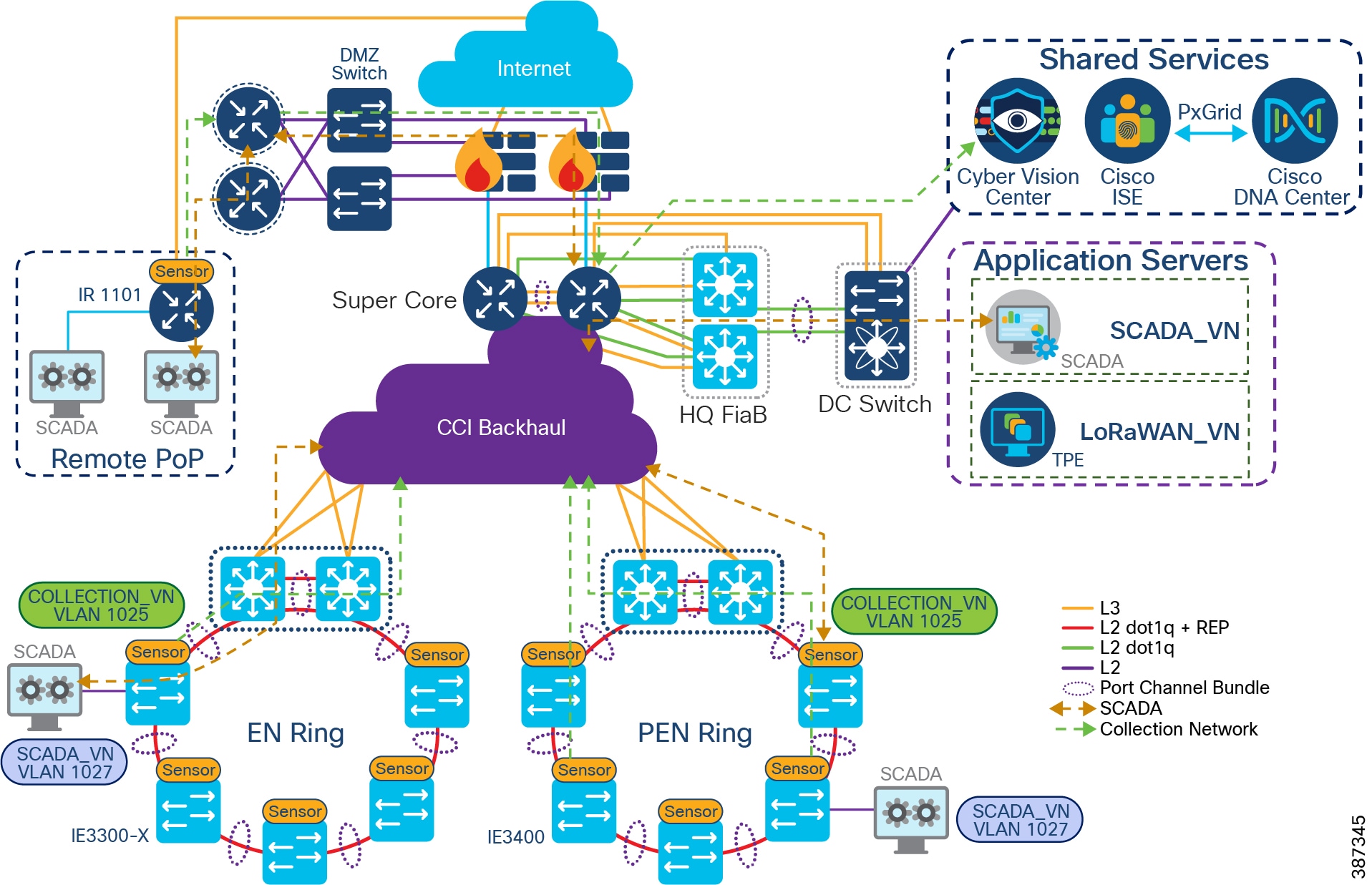

Remote Point-of-Presence Design Considerations

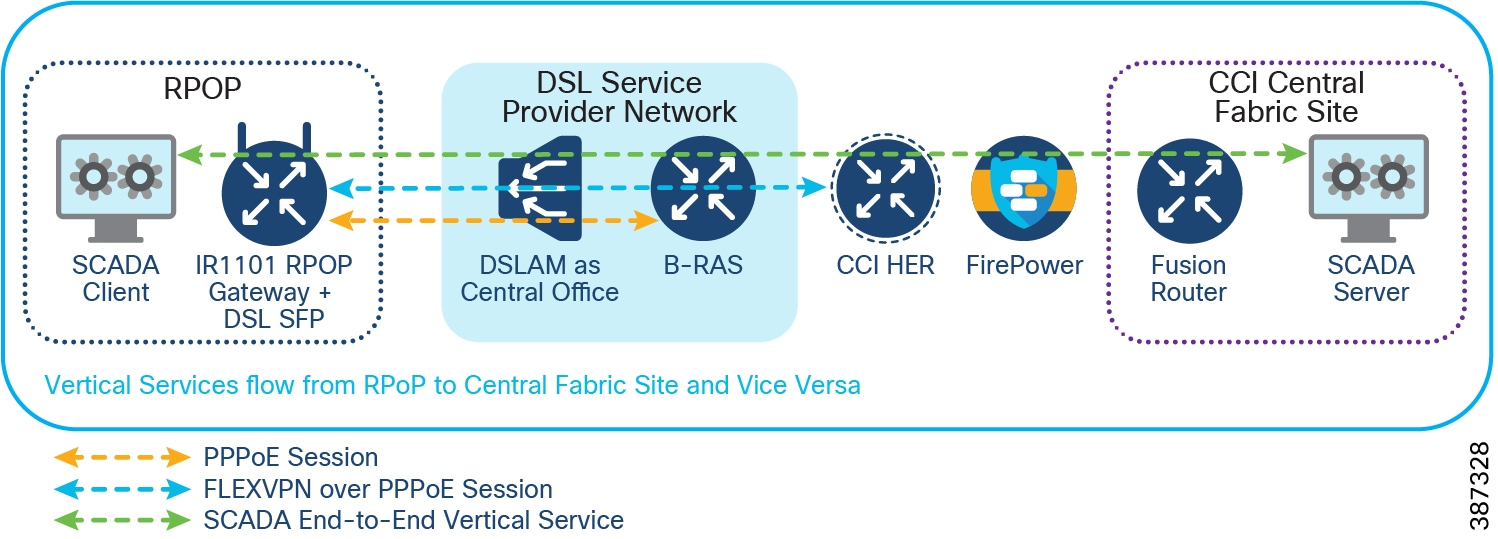

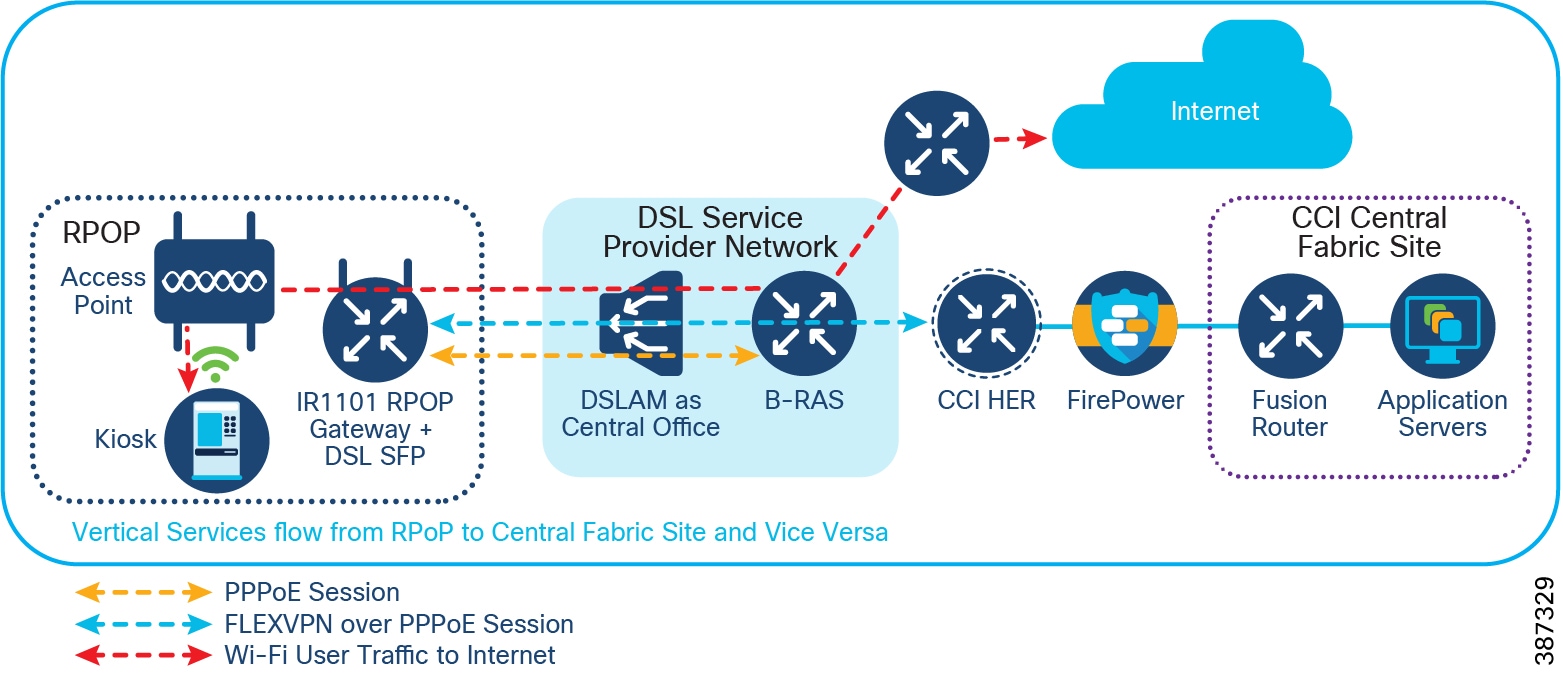

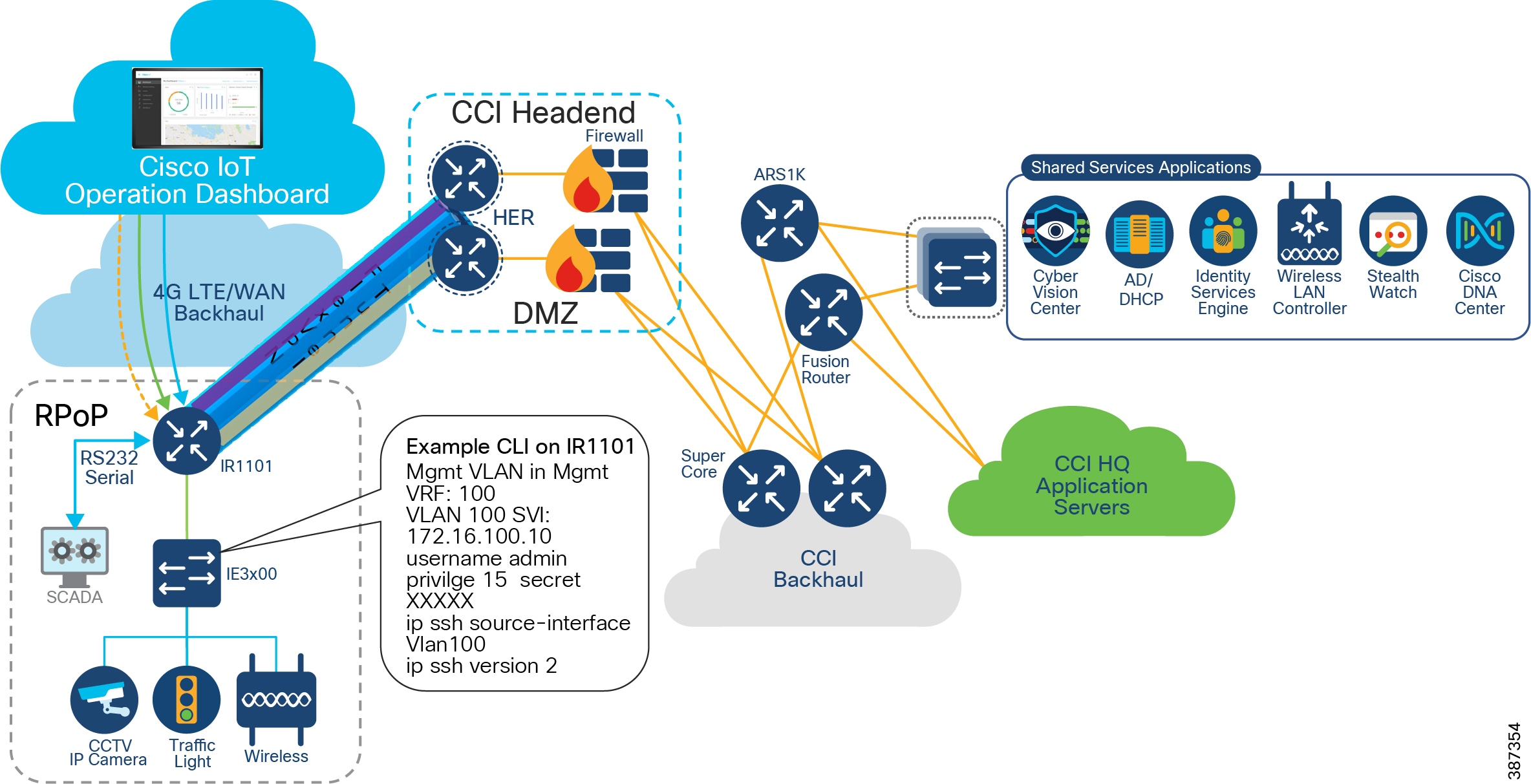

This section covers Cisco IR1101 as Remote PoP gateway design considerations in CCI. It discusses different services that RPoP offers with the capabilities of IR1101 and how the CCI multiservice network with macro-segmentation is extended to RPoP endpoints/assets via the CCI headend (HE) network in the DMZ.

RPoP Multiservice design in IR1101

As shown in RPoP Gateway with Macro-Segmentation Design, the IR1101 base module supports four FE (LAN) ports and a RS232 Serial port which helps connect various CCI vertical endpoints. Multi-VRF, VLAN, and VPN features support on IR1101 helps segment the network and services in the CCI RPoP by configuring and maintaining more than one routing and forwarding tables.

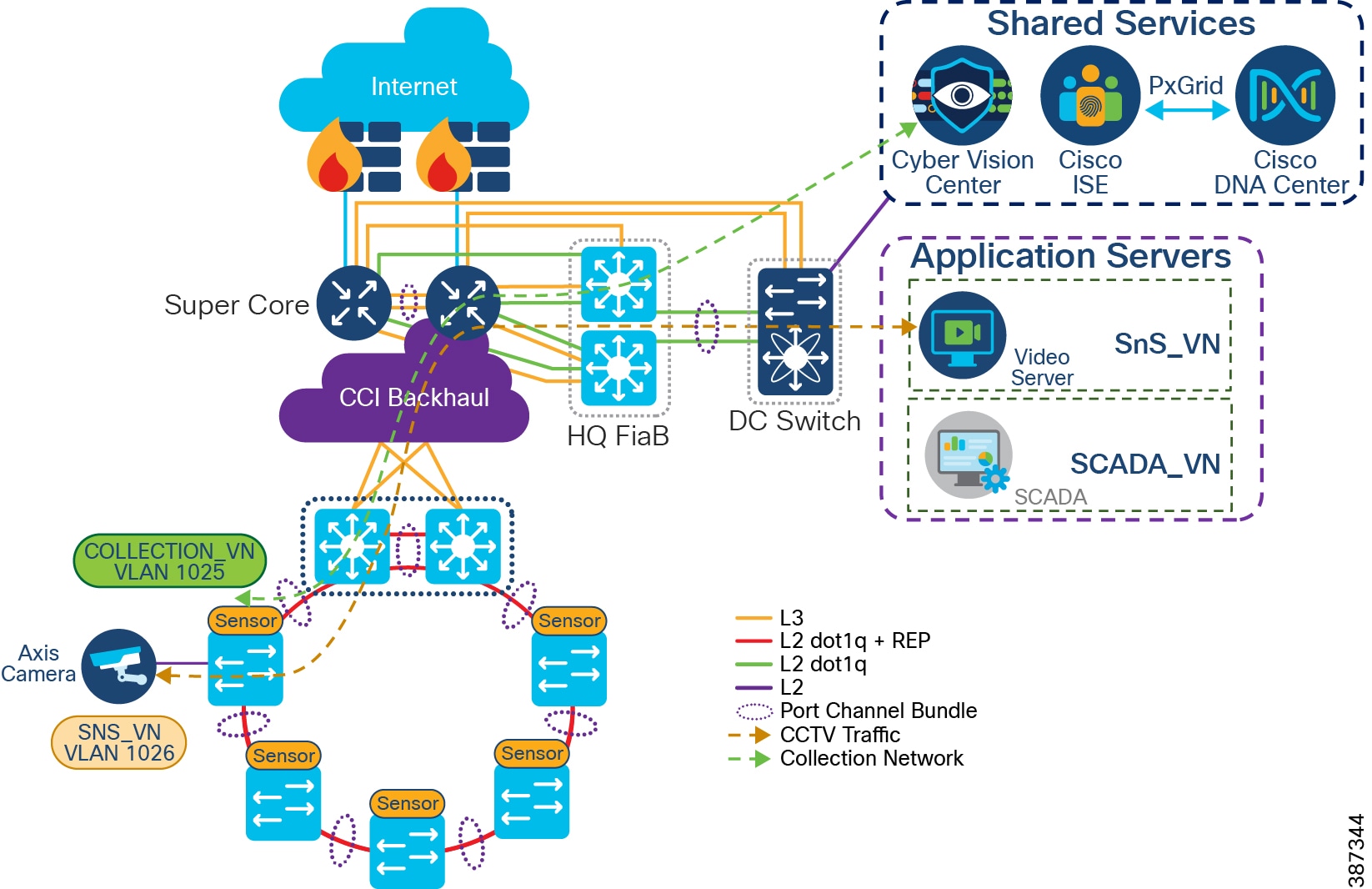

An Example Multiservice RPoP shows an IR1101 in the CCI RPoP with the support for the following services:

- Ethernet Connectivity: Separate LAN network Connectivity for CCTV Camera, IXM Gateway (LoRaWAN access network at RPoP), Wi-Fi Access Points (Wi-Fi access network at RPoP) and Traffic Signal Controller in Roadways & Intersection use cases

- SCADA: DNP3 Serial-to-DNP3/IP protocol translation for SCADA Serial RTU devices connectivity at RPoP

- Edge Computing: Analyses the most time-sensitive data at the network edge, close to where it is generated, and enables local actions, independent of backhaul or cloud connectivity. A highly secure, extensible environment for hosting applications ensures authenticity of applications.

A separate LAN network is created on the IR1101 for each of the services in separate Virtual Route Forwarding (VRF) routes. Each LAN network traffic is backhauled via a secure FlexVPN tunnel to the CCI headend network over a Cellular or DSL based public backhaul networks. An Example Multiservice RPoP shows an example multiservice RPoP in CCI.

Figure 23 An Example Multiservice RPoP

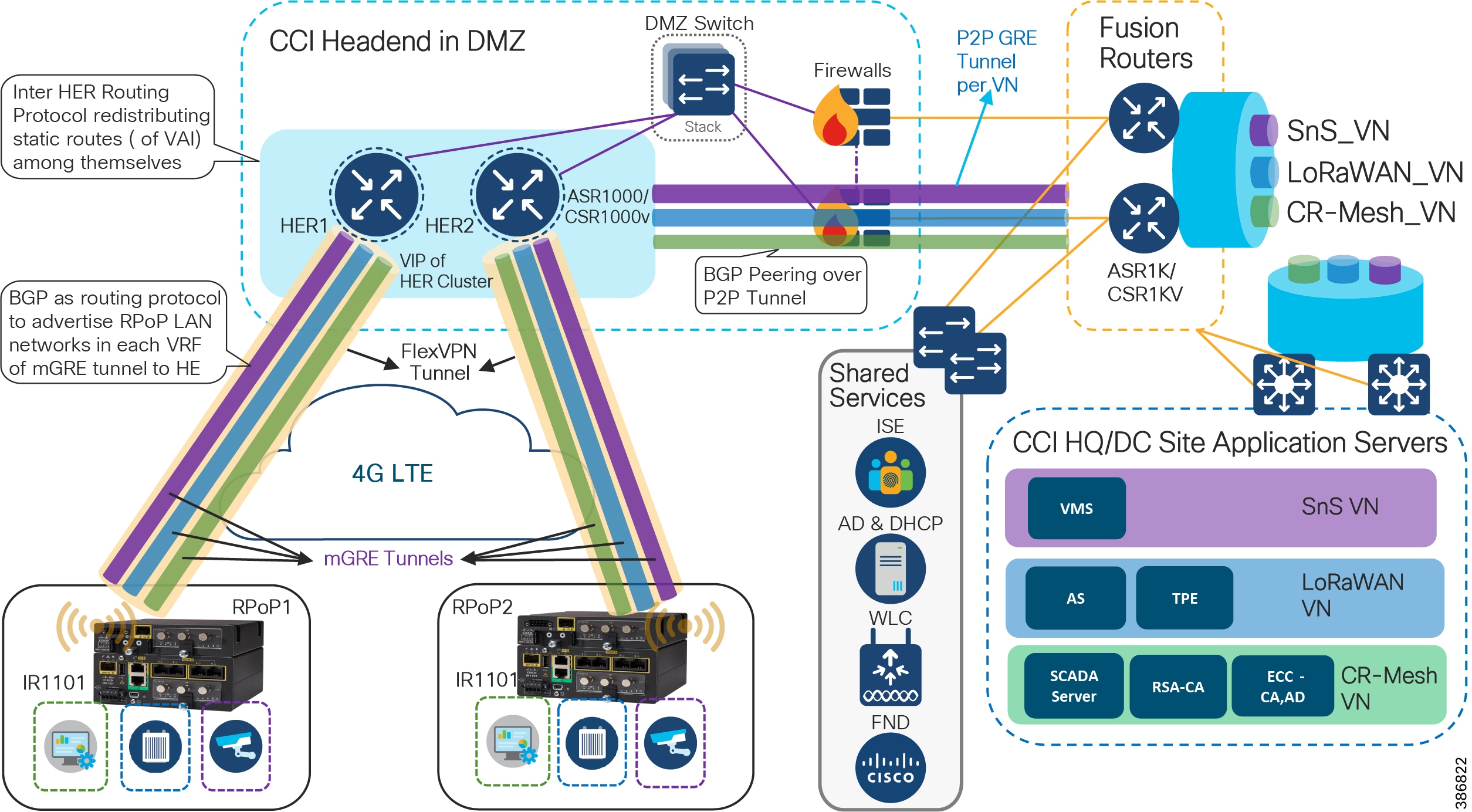

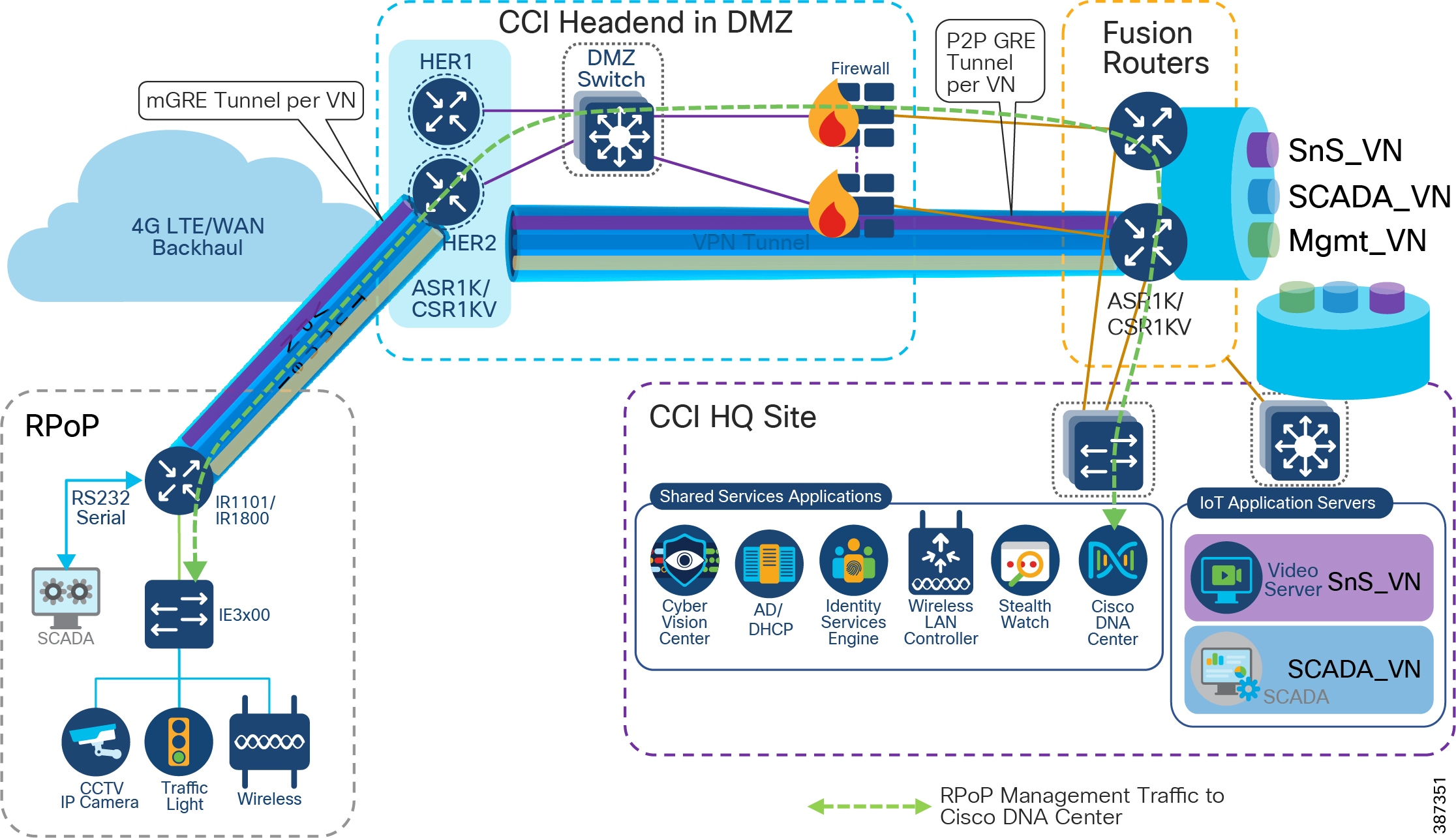

RPoP Macro-Segmentation Design

Network segmentation divides a larger network into smaller sub-networks that are isolated from each other for improved security and better access control and monitoring. CCI provides network macro-segmentation using SD-Access which is discussed in the section Security Segmentation Design. CCI RPoP offering multiservice requires each service to be isolated from the other for network security and also provide a CCI RPoP connectivity to rest of CCI network i.e CCI PoP sites and Application Servers in HQ/DC site.

This section discusses the design considerations for macro-segmenting the RPoP network and extend CCI services to RPoPs (IR1101s) connected via public Cellular network (or other backhaul) to the CCI headend (HE) in the DMZ.

Since CCI RPoP traffic can traverse any kind of public WAN, data should be encrypted with standards-based IPSec. This approach is advisable even if the WAN backhaul is a private network. An IPSec VPN can be built between the RPoP Gateway (IR1101) and the HER in the CCI HE. The CCI solution implements a sophisticated key generation and exchange mechanism for both link-layer and network-layer encryption. This significantly simplifies cryptographic key management and ensures that the hub-and-spoke encryption domain not only scales across thousands of field area routers, but also across thousands of RPoP gateways.

IP tunnels are a key capability for all RPoP use cases forwarding various traffic types over the backhaul WAN infrastructure. Various tunneling techniques may be used, but it is important to evaluate the individual technique OS support, performance, and scalability for the RPoP gateway (IR1101) and HER platforms.

The following is tunneling design guidance:

- FlexVPN Tunnel— FlexVPN is a flexible and scalable VPN solution based on IPSec and IKEv2. To secure CCI data communication with the headend across the WAN, FlexVPN is used. IKEv2 prefix injection is used to share tunnel source loopbacks.

- Communication with IR1101 in a RPoP is macro-segmented and securely transported as an overlay traffic through multipoint Generic Routing Encapsulation (mGRE) Tunnels. Next-hop resolution protocol (NHRP) is used to uniquely identify the macro-segments (VNs). It is recommended to combine mGRE, for segmentation, with a FlexVPN tunnel for secure backhaul to the HER.

- Routing for overlay traffic is done via iBGP (VRF Lite) between the RPoP routers and the HER, inside the mGRE; similarly between the HER and FR is done inside p2p GRE.

- RPoP Gateway with Macro-Segmentation Design depicts how CCI services are macro-segmented and extended to RPoPs via the CCI headend (HER) using Point-to-Point FlexVPN (between each IR1101 RPoP and the HER), and Multipoint GRE tunnels (from each IR1101 RPoP over the FlexVPN tunnel to the HER and from there to the Fusion Router).

Figure 24 RPoP Gateway with Macro-Segmentation Design

In CCI Headend Router Redundancy :

- CCI HQ/DC Site with Application Servers hosted in each VN for each CCI vertical service. CCI vertical services like Safety and Security (SnS_VN), LoRaWAN access based FlashNet street Lighting (LoRaWAN_VN), CR-Mesh access based Water SCADA (SCADA_VN or CR-Mesh_VN) etc., is macro-segmented in CCI SD-Access fabric with separate routing and forwarding (VRF) tables for each of the services.

- CCI Common Infrastructure or Shared Services consists of Cisco ISE, IoT FND, DHCP & Active Directory (AD) servers and WLC.

- CCI Fusion Routers (FR) connected to HQ/DC site via IP-Transit extends SD-Access fabric overlay VNs/VRFs created in fabric using Cisco DNA Center. FR provides access to non-fabric and shared services in CCI.

- The DMZ network portion of CCI communication headend, which includes:

–![]() A Cluster of ASR1000 Series or CSR1000v routers as Headend Routers (aka Hub Router for IP Tunnels)

A Cluster of ASR1000 Series or CSR1000v routers as Headend Routers (aka Hub Router for IP Tunnels)

–![]() Security FirePower/Firewalls in routed mode

Security FirePower/Firewalls in routed mode

- IR1101/IR1800s as Spoke routers in RPoP1 and RPoP2 connected to CCI headend via public cellular (LTE) WAN backhaul network.

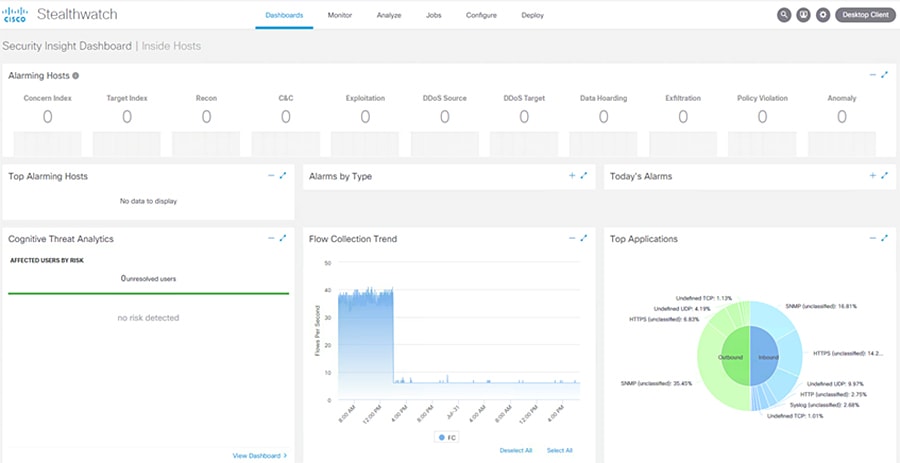

Design Considerations